Planet News Roundup

This is a roundup of articles from the openSUSE community listed on planet.opensuse.org.

The community blog feed aggregator lists the featured highlights below from April 3 to 9.

Blogs this week cover the fourth bugfix update to KDE Plasma 6.6, Slimbook’s refreshed Creative ultrabook featuring the AMD Ryzen AI 9 365 with a dedicated AI NPU, and the promotion of Slimbook Days 2026, whose sales help support donations to KDE. Blogs also highlight two new Plasmoids for Plasma 6: the Aero Weather weather viewer and Battery Plasmoid Boero. There were also practical tips for openSUSE users on using Rufus in DD mode when writing USB install media.

Here is a summary and links for each post:

Use DD Mode When Creating an openSUSE DVD Image with Rufus

The Geeko Blog warns openSUSE users that writing a DVD image to a USB drive using Rufus in ISO mode can silently skip files, resulting in a broken installer that fails to boot mid-installation. The author discovered this when attempting a fresh install of openSUSE 16.0 on physical hardware and confirmed the issue across multiple machines. Switching Rufus to DD mode resolved the problem entirely, and readers are advised to always use DD mode when creating openSUSE USB media.
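
As a rough illustration of what a DD-style write means, the sketch below copies an image onto a target byte for byte and then compares checksums, so nothing can be skipped without being noticed. It is not how Rufus is implemented; the function names (`raw_copy`, `write_and_verify`) and the chunk size are assumptions made for this example, and the target it is pointed at gets overwritten.

```python
import hashlib
import os

CHUNK = 4 * 1024 * 1024  # copy in 4 MiB blocks (arbitrary choice for this sketch)


def sha256_of(path, limit=None):
    """Hash the first `limit` bytes of `path`, or the whole file when limit is None."""
    digest = hashlib.sha256()
    remaining = limit
    with open(path, "rb") as f:
        while remaining is None or remaining > 0:
            size = CHUNK if remaining is None else min(CHUNK, remaining)
            block = f.read(size)
            if not block:
                break
            digest.update(block)
            if remaining is not None:
                remaining -= len(block)
    return digest.hexdigest()


def raw_copy(image, target):
    """Write `image` onto an existing `target` byte for byte, without interpreting the image."""
    written = 0
    with open(image, "rb") as src, open(target, "r+b") as dst:
        while block := src.read(CHUNK):
            dst.write(block)
            written += len(block)
        dst.flush()
        os.fsync(dst.fileno())  # make sure the data really reached the target
    return written


def write_and_verify(image, target):
    """Copy the image, then confirm the target starts with exactly the same bytes."""
    written = raw_copy(image, target)
    return sha256_of(image) == sha256_of(target, limit=written)
```

The checksum comparison is the useful part here: a file-level copy that silently drops files would not produce a target that matches the image bit for bit.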

Aero Weather Widget – Weather Viewer Plasmoid for Plasma 6 (26)

The KDE Blog presents Aero Weather, a desktop weather viewer widget for KDE Plasma. The plasmoid displays current conditions and a multi-day weather forecast directly on the desktop. It also supports automatic IP-based location detection or manual coordinates, along with customizable font colors.

New Slimbook Creative, Renewing its High-End Model

The KDE Blog covers Slimbook’s 2026 refresh of its Creative ultrabook that features the AMD Ryzen AI 9 365 processor with a dedicated NPU designed for local AI workloads. The updated model also brings improvements in performance, design, personalization, and portability.

Fourth Update of Plasma 6.6

The KDE Blog announces the fourth bugfix update of KDE Plasma 6.6, released on April 7, 2026, continuing the project’s regular maintenance cadence following the feature release. The post recaps the major new features introduced in the full Plasma 6.6 release, including the new Plasma Keyboard on-screen keyboard, OCR text extraction in Spectacle, and a new Plasma Setup configuration wizard. As with all bugfix updates, the release is strongly recommended for all users.

Battery Plasmoid Boero – Visual Plasmoids for Plasma 6 (27)

The KDE Blog presents Battery Plasmoid Boero, which is a widget that provides detailed battery monitoring including charge/discharge graphs and power mode settings. The plasmoid is aimed at laptop users who want more granular control over battery status than the default widget provides. Users interested in energy-efficient computing should visit eco.kde.org.

Interface and Stability Improvements – This Week in Plasma

The KDE Blog translates and summarizes the latest “This Week in Plasma” development report, covering ongoing work on interface refinements and stability fixes headed toward Plasma 6.7. The post highlights improvements across several Plasma components aimed at making the desktop feel more polished and reliable for daily use. This is part of the blog’s ongoing series of Spanish-language translations of Nate Graham’s weekly KDE development updates.

Linux Saloon 194 | News Flight Night

CubicleNate’s Blog recaps episode 194 of the Linux Saloon podcast, which focused on a range of current tech topics including Google’s Android ecosystem changes and sideloading restrictions. Participants also discussed the Claude Code source leak, critical security vulnerabilities in Telegram, and a notable increase in Steam’s reported Linux usage share. Yay!

Japan

Jakub Steiner’s Blog shares a personal travel post about a return trip to Japan, this time focused on Tokyo and a short excursion to Kawaguchiko during cherry blossom season. The post reflects on shooting with a Fuji X-T20 camera rather than relying solely on a smartphone, and includes a link to a full photo gallery on the author’s photo website.

Slimbook Days 2026

The KDE Blog announces the arrival of Slimbook Days 2026, a promotional sale period for the GNU/Linux hardware brand running from April 8 to 12. The post encourages readers to take advantage of the event, noting that Slimbook devices come fully pre-configured for GNU/Linux and that a portion of each sale supports the KDE community.

Screenshots and Screen Recording in Plasma 6.6

The KDE Blog examines the screenshot and screen recording improvements in KDE Plasma 6.6, with a particular focus on Spectacle’s new ability to recognize and extract text from captured images using OCR. This addition is highlighted as a significant usability and accessibility improvement and makes it easier to create alt text for visual content.

View more blogs or learn to publish your own on planet.opensuse.org.

Moving to Zola

I've finally gotten around to porting this blog over to Zola. I've been running on Jekyll for years now, after originally conceiving this blog in Middleman (and PHP initially). But time catches up with everything, and the friction of maintaining Ruby dependencies eventually got to me.

The Speed

I can't stress this enough — Zola is fast. Not "for a static site generator" fast. Just fast. My old Jekyll setup needed a good few seconds to rebuild after a change. Zola builds in milliseconds. The entire site rebuilds almost before I can release the key. It's not critical for a site that gets updated 5 times a year, but it's still impressive.

No Dependencies

This is the big one. Every time you leave a project alone for a few months and come back, you know it's not just going to magically work. The gem versions drift, Bundler gets confused, and suddenly you're down a rabbit hole of version conflicts. The only reason all our Jekyll projects were reasonably easy to work with was locking onto Ruby 3.1.2 using rvm. But at some point the layers of backwardism catch up with you.

Zola is a single binary. That's it. No bundle install, no Gemfile, no "works on my machine" prayers. Download, run, done. It even embeds everything — syntax highlighting, image processing, Sass compilation (if you haven't embraced the modern CSS light yet) — all built-in. The site builds the same on any machine with zero setup.

The Heritage

Zola started life as Gutenberg in 2015/2016, a learning project for Rust by Vincent Prouillet. He was using Hugo before, but hated the Go template engine. That spawned Tera, the Jinja2-inspired template engine that Zola uses.

The project got renamed to Zola in 2018 when the name conflicts with Project Gutenberg got too annoying. It's pure Rust, which means it's fast, memory-safe, and ships as a tiny static binary.

Asset Colocation

One thing I've always focused on for this blog architecture-wise is the structure: images and media live right alongside the post, not stuffed into some shared /images/ folder somewhere like most Jekyll sites seem to do. Zola calls this "asset colocation," and it's a first-class feature. No plugins needed. Just put your images in the same folder as your index.md, reference them directly, and Zola handles the rest.

This is how I'd already been running things with Jekyll, so the port was refreshingly painless on that front.

The Templating

The main work was porting the templates. It was the main showstopper when Bilal suggested Zola a couple of years ago. I was hoping for something with Liquid to pop up, but it seems like people running their own blogs is not a TikTok trend. Zola uses Tera instead of Liquid. The syntax is similar enough to get by, but there are enough branches in your path to stumble on. The error messages actually make sense though and point you at the problem, which is a refreshing change from debugging broken Liquid includes.

The Improvements

Beyond speed, I've been cleaning up things the old theme dragged along:

- Dark mode without JavaScript: The original Klise theme injected a script to toggle themes. The new setup uses CSS-only theming via custom properties, no flash of wrong theme, no JS required.

- Legibility: I'm getting older, and apparently so are my readers. Font sizes bumped up, contrast dialled in. What looked crisp at 30 looks muddy at 50.

The site's cleaner now, light by default, faster to build, and I don't need to invoke Ruby just to write a blog post. The experience was so damn good, it motivated me to jump at a much larger project I'm hopefully going to post about next.

Japan

Last year we went to Japan to finally visit friends after two decades of planning to. Because they live in Fukuoka, we only ended up visiting Hiroshima, Kyoto and Osaka afterwards. We loved it there and as soon as cheap flights became available, booked another one for Tokyo, to be legally allowed to cross off Japan as visited.

Now if I were to book the trip today, I probably wouldn't. It's quite a gamble given the geopolitical situation and Asia running out of oil. But making it back, it's been as good as the first one. Visiting only Tokyo with a short trip to Kawaguchiko in the Sakura blooming season worked out great.

At the start of the year, I promised myself I'd shoot my Fuji more. And no, I don't mean the volcano, I mean the X-T20. I haven't exactly kept that promise, usually just falling back on my iPhone instead. Luckily, I didn't chicken out of carrying the extra weight for this trip, and I think it really paid off! I did stick strictly to my 35mm lens, since my desire to haul heavy gear has definitely faded over the years. And honestly, after walking over 120km in just a few days, my back was already reminding me I'm not getting any younger, even with the minimal setup!

While the difference in quality isn't quite visible on Pixelfed or my photo website (I don't post to Instagram anymore), working through the set on a 4K display has been a pleasure. Bigger sensor is a bigger sensor.

Check out more photos on photo.jimmac.eu -- use arrow keys or swipe to navigate the set.

I also managed to get both of my weeklybeats tracks done on the flight so that's a bonus too!

Japan is probably quite difficult to live in, but as a tourist you get so much to feast your eyes on. It's like another planet. I hope to find more time to draw some of the awesome little cars and signs and white tiles and electric cables everywhere.

Planet News Roundup

This is a roundup of articles from the openSUSE community listed on planet.opensuse.org.

The community blog feed aggregator lists the featured highlights below from March 27 to April 2.

Blogs this week highlight the end of the nearly eight-year openSUSE Leap 15 era with Leap 15.6 reaching end of life, the March 2026 Tumbleweed monthly update covering three Plasma 6.6 point releases and kernel advances to 6.19.9, and the switch to systemd-boot as the default bootloader for fresh Tumbleweed installations. Blogs also cover the Claude Code source leak and its supply chain security lessons, syslog-ng benchmarks hitting 7 million events per second, accessibility improvements in Plasma 6.6, progress on Thunderbird’s Linux system tray integration and more.

Here is a summary and links for each post:

FSF Newsletter Roundup – April 2026

Victorhck compiles and translates highlights from the Free Software Foundation’s April 2026 newsletter, which marks the FSF’s 40th anniversary this month. Topics include Discord’s controversial age verification policies, Google’s friction-heavy process for installing unverified Android apps, and payment provider Nexi terminating its contract with the FSFE after the organization refused to hand over donor data without explanation.

Tumbleweed Monthly Update - March 2026

The openSUSE News publishes its March 2026 Tumbleweed monthly summary. The month saw three Plasma 6.6 point releases, kernel updates from 6.19.5 to 6.19.9, Mesa 26.0.2 fixing RDNA 4 visual corruption, and significant CVE attention for FreeRDP, curl, and the kernel.

Aero Weather Widget – Plasmoids for Plasma 6 (26)

The KDE Blog presents Aero Weather, a desktop weather viewer widget for KDE Plasma created by XcurcX. The plasmoid displays current conditions and multi-day forecasts with customizable font colors and supports automatic IP-based location or manual coordinates.

Tahoe Launcher – A macOS-Style Launcher for KDE Plasma – Plasmoids for Plasma 6 (25)

The KDE Blog showcases Tahoe Launcher, which is a minimalist macOS-style application launcher for KDE Plasma featuring a grid layout with transparency and blur effects. The widget is built natively in QML for low resource consumption and allows users to customize the grid dimensions, icon sizes, and search bar design.

Quick Update on the Package Version Tracking Feature in OBS

The Open Build Service Blog shares updates to the package version tracking feature as part of the Foster Collaboration beta program. The API now allows querying upstream and local package versions, and individual packages can opt out of version tracking when upstream monitoring is not relevant.

Closing Out a Roughly 8-Year Era

The openSUSE News announces that the openSUSE Leap 15 series is reaching its end of life after nearly eight years, starting with 15.0 on May 25, 2018. Leap 15.6 will stop receiving maintenance and security updates at the end of this month.

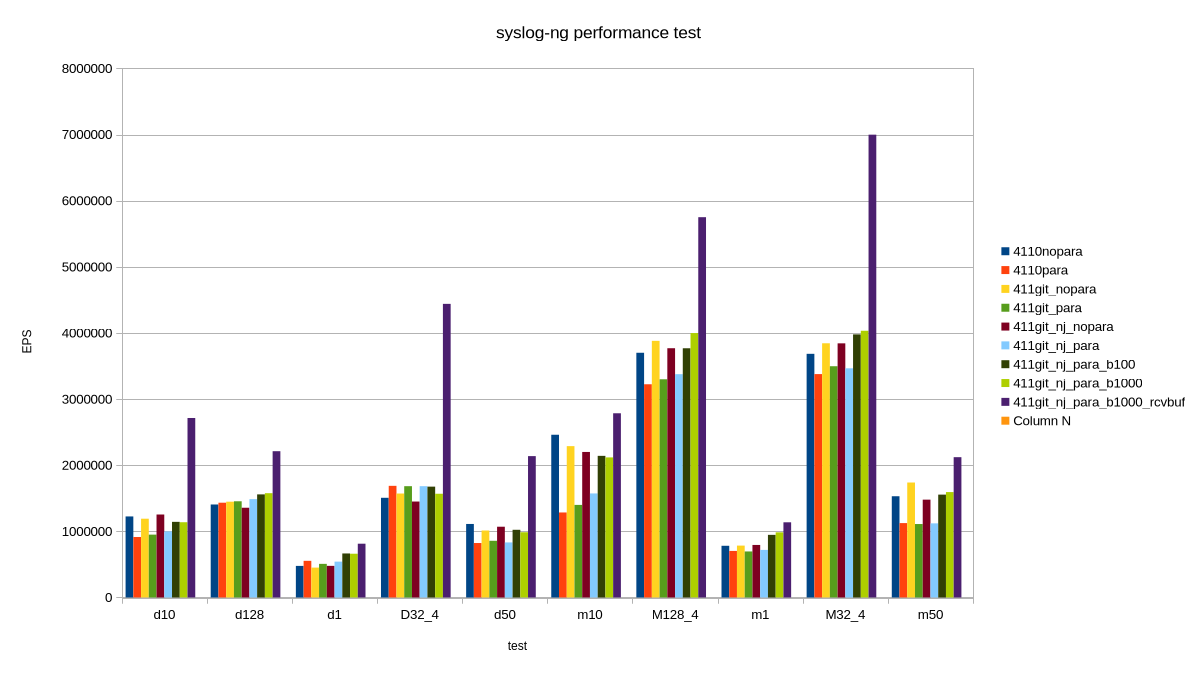

My New Toy: April 1 syslog-ng Performance Tests

Peter Czánik’s Blog presents benchmark results showing syslog-ng reaching 7 million events per second, though he immediately clarifies this is a synthetic lab measurement that does not represent real-world production performance. The post introduces sngbench, his open-source benchmarking tool for comparing syslog-ng performance across architectures and operating systems.

This Month in KDE Linux: March 2026

The KDE Blog summarizes Nate Graham’s March 2026 progress report on Adventures in Linux and KDE. Key improvements include better automatic rollback after failed updates, improved memory management to prevent total system freezes, and easier iPhone/iPad connectivity for photo transfers.

Thunderbird and the System Tray on Linux

Victorhck highlights progress from Thunderbird’s March 2026 development digest regarding the long-requested system tray integration for Linux. Contributor Christophe Henry has been working on a cross-platform approach spanning JavaScript, C++, and Rust to unify unread mail indicators and tray icon behavior.

Automating Test Management with QASE

Zoltán Balogh’s Blog breaks from his usual open-source focus to review QASE, a cloud-based test management tool, finding its API to be its strongest feature. He explains why the open-source world generally lacks dedicated test management tools, noting that community-driven projects like openSUSE and Debian tend to crowdsource quality engineering through staged releases rather than formal test plans.

Claude Code Leak: Exposes Half a Million Lines of AI

Alessandro’s Blog analyzes the March 31 incident in which a source map file accidentally published to npm exposed the entire Claude Code codebase, which was roughly 1,900 files and over 512,000 lines of TypeScript. Alessandro frames the incident as a cautionary lesson about build pipeline security and supply chain risks, arguing that the future of AI lies in mastering the orchestration layer around models, not just the models themselves.

My New Toy: Back to High-End Audio

Peter Czánik’s Blog shares how installing software synthesizers and connecting his HP Z2 Mini AI workstation to his HiFi system reminded him of how much better high-end audio sounds compared to the laptop speakers and meeting-oriented headphones he had been using for months. The post is part of his ongoing series about adventures with his AI mini workstation.

KDE Express Episode 71: esLibre2026 – Digital Self-Defense Guide with Enxeñería Sen Fronteiras

The KDE Blog covers episode 71 of the KDE Express podcast featuring Laura Salgueiro Sánchez from Enxeñería Sen Fronteiras promoting a talk at esLibre2026. The session will present a digital self-defense guide aimed at helping anyone improve their online security and recover digital sovereignty without requiring prior cybersecurity knowledge.

What is Better, Windows or Linux? My Experience 1 Year Later

The KDE Blog highlights a video by content creator GCtech sharing his experience after a full year of using Linux as his primary operating system. He praises Linux’s resource efficiency for reviving older hardware, its transparency and security model, while acknowledging gaps in professional software like Adobe and some limitations with AAA gaming.

Accessibility Improvements in Plasma 6.6

The KDE Blog details the accessibility enhancements in Plasma 6.6, including a new grayscale filter joining three existing color blindness correction filters. The magnification feature gains a new tracking mode that keeps the pointer centered on screen, and Slow Keys returns on Wayland to help users with motor difficulties avoid accidental keypresses.

Easy Microphone Sensitivity Adjustment – This Week in Plasma

The KDE Blog translates and covers the latest “This Week in Plasma” development report. Plasma 6.7 gains a microphone test-and-adjust feature, notification portal support for Flatpak apps, and a multi-GPU swapchain implementation in KWin.

openSUSE Tumbleweed Weekly Review – Week 13 of 2026

Victorhck and dimstar report on a slow week for Tumbleweed with only two snapshots (0324 and 0326) reaching the mirrors due to the transition from grub2-bls to systemd-boot as the default bootloader for fresh installations. Notable updates delivered include KDE Plasma 6.6.3, ffmpeg 8.1, FreeRDP 3.24.1, Linux kernel 6.19.9, and qemu 10.2.2. Upcoming changes in the pipeline include GNOME 50, Qt 6.11.0, Mozilla Firefox 149.0, GCC 16, and glibc 2.43.

View more blogs or learn to publish your own on planet.opensuse.org.

Tumbleweed Monthly Update - March 2026

There were several software package updates for openSUSE Tumbleweed during March.

Tumbleweed saw three Plasma 6.6 updates bringing progressive bugfixes to KWin, the system tray, Spectacle, and the Kicker launcher. KDE Frameworks advanced to 6.24.0 with nanosecond-precision timestamps in KIO and a new Kirigami StyleHints API. The Linux kernel moved from 6.19.5 to 6.19.9 with broad fixes across audio, display, and filesystem drivers. Both the Linux Kernel and FreeRDP fixed several Common Vulnerabilities and Exposures, and Mesa 26.0.2 resolved visual corruption on RDNA 4 hardware and a Counter-Strike 2 regression on Intel Arc.

As always, be sure to roll back using snapper if any issues arise.

For more details on the change logs for the month, visit the openSUSE Factory mailing list.

New Features and Enhancements

Plasma 6.6.1, 6.6.2 & 6.6.3: Version 6.6.3 closed out the month as the third bugfix update. The Kicker application launcher receives several fixes for the sidebar, icon display, and expanded root list width calculations. The Task Manager now keeps thumbnails properly aligned in horizontal group tooltips. Spectacle resolves a crash on quick region selection and fixes a pixel-off error in the magnifier tool. The system tray sees improved popup placement on Wayland. PowerDevil restores the battery badge for 100 percent charge and syncs the manual inhibition switch with external changes.

In Plasma 6.6.2, KWin resolves crashes in DRM output handling, improves mouse tracking with caret-based zoom, and fixes input region gaps in window decorations. The Kicker applet sees refinements in visual search, scrollbar behavior, and hover logic. Spectacle fixes a crash when exporting via KDE Connect, and System Settings now correctly navigates to subcategories from search results.

In version 6.6.1, KWin sees the most changes, with fixes for corner rounding that apply to both decorations and window surfaces. Zoom now works correctly on rotated outputs, and software brightness dimming on external screens is improved. The tile editor no longer triggers on key repeat, and interactive move-resize no longer unconditionally raises windows. Clipboard and drag-and-drop teardown under XWayland is improved, and Wine/Proton color management gains better compatibility. The Kicker application launcher for the Plasma Desktop receives multiple fixes for the icon display, layout margins, and search field behavior. The Task Manager corrects tooltip sizing. The digital clock now properly localizes digits, and the media controller fixes premature label truncation. Plasma Network Manager improves icon accuracy for Wi-Fi disabled states and now responds to external configuration changes. Discover improves Flatpak app resolution and exposes proper star count ratings. PowerDevil adds a power level check before executing critical actions to prevent premature shutdowns.

GNOME Control Center 49.5: The Display and Power panels now handle a missing UPower service instead of failing. An infinite loop when switching battery charge modes on systems with multiple batteries was fixed. Sound and Bluetooth device switching regressions are resolved through an updated libgnome-volume-control.

libxml2 2.15.2: A significant version jump that removes the built-in HTTP client and LZMA compression support, and the parser option XML_PARSE_UNZIP is now required to read compressed data. HTML serialization and character encoding handling are brought more in line with the HTML5 specification, and additional accessors for xmlParserCtxt were added for developers. Several previously patched CVEs are now resolved upstream, including fixes for attribute normalization and standalone checks. Python bindings are no longer built as they are scheduled for removal in 2.16, and Schematron support has similarly been dropped.

Xfce 4.20.2: This update covers the screensaver, session manager, and display settings. xfce4-screensaver fixes a wrong conditional in the lock plug, improves theme preview rendering, and switches from pidof to pgrep for more reliable process detection. The overlay window handling is reworked to use a single permanent window, improving device reliability. xfce4-session fixes an idle function and prevents multiple logout dialogs from being created. It also adds gnome-keyring as a Secret portal provider and improves keyboard layout detection on Wayland. xfce4-settings improves display management by checking EDID to detect output list changes, adds a missing condition for new Wayland outputs, and falls back to output name when EDID data is duplicated.

KDE Frameworks 6.24.0: This update sees KIO gain nanosecond-precision timestamps across file operations, improved paste dialogs with proper titles, and refined trash handling. KCodecs overhauls encoding with safer memory management (using unique_ptr) and Kirigami introduces a new StyleHints API to unify theme behavior. Baloo fixes database access mode issues and KTextEditor adds search history clearing and safer clipboard handling.

7-Zip 26.00: The file manager now uses the file name as a secondary sorting key for more intuitive file list ordering, and the benchmark tool supports systems with more than 64 CPU threads. A bug preventing correct extraction of TAR archives containing sparse files is fixed.
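
The effect of a secondary sorting key can be sketched in a few lines. This is only an illustration of the idea, not 7-Zip's code: the `Entry` type, the sample files, and the choice of size as the primary key are assumptions made for the example.

```python
from typing import NamedTuple


class Entry(NamedTuple):
    """Hypothetical file list entry used only for this sketch."""
    name: str
    size: int


def sort_by_size(entries):
    # Primary key: size. Secondary key: file name (case-insensitive),
    # so entries with equal sizes come out in a predictable order.
    return sorted(entries, key=lambda e: (e.size, e.name.lower()))


entries = [
    Entry("notes.txt", 120),
    Entry("b.iso", 4096),
    Entry("a.iso", 4096),
    Entry("Changelog", 120),
]
ordered = [e.name for e in sort_by_size(entries)]
print(ordered)
```

Without the second tuple element, files of equal size would simply keep whatever order they arrived in, which is what makes a list feel arbitrary when sorted by a column with many ties.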

KDE Gear 25.12.3: Kdenlive addresses numerous stability and usability issues, including crashes in the curve editor and audio scrubbing with “Pause on Seek” disabled; it also provides better handling of multi-stream clips and improved effect management. Itinerary and Kitinerary expand travel support with new extractors for ferry tickets. NeoChat refines room list navigation, fixes emoticon editor layout issues, prevents timeline scrolling during reactions, and resolves a crash. KMail restores proper rendering of plain-text emails and Tokodon fixes alt-text editing and account switching after login failures.

ImageMagick 7.1.2.16 - 7.1.2.18: Version 7.1.2.18 of the image editor improves the reliability of animated image handling by fixing frame delay parsing and resolves a visual artifact where the -dissolve composite operation introduced random noise. Version 7.1.2.17 focuses on security, resolving multiple vulnerabilities and security advisories along with out-of-band data handling improvements. Version 7.1.2.16 hardens security and adds overflow checks to several image write paths including JXL, PS3, sixel, SGI, and BMP/DIB. It fixes a heap over-read in BilateralBlurImage with even-dimension kernels, a NULL pointer dereference in HEIC NCLX color profile allocation, and a double-free in SVG gradientTransform parsing.

Ruby 4.0.2: This update fixes a YJIT bug. A segfault with argument forwarding combined with splat and positional arguments is resolved, along with a GC crash in String#% and a crash on signal raise. A 20 percent performance regression in Rails related to global allocatable slots and empty pages is addressed. Several Prism parser issues were corrected including misparsing of standalone in pattern matching and the and? predicate being confused with the and keyword.

FreeRDP 3.24.1: This update sees the API comprehensively marked with [[nodiscard]] to surface unchecked function return values. Smartcard support is improved including ECC key handling in PKCS#11 enumeration, proxy support is extended to RFX and NSC graphics modes, and SDL3 multi-monitor scaling is introduced. Numerous memory leaks across connection setup, settings copying, and smartcard paths were resolved.

libavif 1.4.0: This update adds support for Sample Transform schemes from the AVIF 1.2 specification, which enables 16-bit AVIF file handling and grid-based derived image items. The avifenc tool can now read PNG or JPEG files through stdin via --stdin-format and supports converting JPEG files with Apple-style gain maps. PNG decoding now respects cICP chunks for color information as per the PNG Third Edition specification. Encoding defaults have been refined; AOM_TUNE_IQ is now used for still color samples with libaom v3.13.0+, while AOM_TUNE_PSNR is used for alpha to avoid ringing artifacts from SSIM tuning. Support for libaom versions 2.0.0 and earlier is removed.

Key Package Updates

Linux kernel 6.19.5 - 6.19.9: The 6.19.9 update improved audio with a speaker pop fix for Star Labs StarFighter hardware, and the Btrfs filesystem resolves a space info lock issue during periodic reclaim. NFS3 now correctly returns EISDIR when a create operation encounters a directory alias. The 6.19.8 kernel was dominated by a major batch of AppArmor fixes and multiple CVE-tracked fixes that were backported. The 6.19.7 release receives multiple corrections for the CFS/EEVDF scheduler, including fixes for zero_vruntime tracking, lag clamping, and slice protection timing. The AMD XDNA accelerator driver resolves several issues including a crash when destroying suspended hardware contexts. The 6.19.6 kernel had fixes led by extensive perf tooling corrections including reference count leaks, srcline printing with inlines, and Zen 5 vendor event definitions for AMD. Btrfs replaces a BUG() call with proper error handling for unexpected delayed ref types, adds fallback to buffered IO for data profiles with duplication, and improves user interrupt handling in btrfs_trim_fs(). The 6.19.5 release sees Btrfs correct a block_group_tree dirty list corruption and a chunk allocation abort caused by non-consecutive gaps. GFS2 resolves quota handling and an inline data write path. The SMB client fixes a potential use-after-free and double free in smb2_open_file(). A netfilter nf_tables fix adds an abort skip removal flag for set types to address tracked security-relevant issues.

GStreamer 1.28.1: This update includes a new whisper-based speech-to-text transcription element and the speechmatics element now supports detecting audio events like applause, laughter, and music. Reverse playback and gap handling are improved across multiple components. The V4L2 subsystem gains support for AV1 stateful decoding, and CUDA/GL interop copy paths in cudaupload and cudadownload are fixed. WebRTC components gain the ability to specify custom headers for signalling servers and negotiate H.264 profile and level for encoded input. Various memory leaks, build issues, and race conditions were resolved.

curl 8.19.0: This release addresses four security vulnerabilities and provides new features like initial support for MQTTS and fractional values for --limit-rate and --max-filesize. Support for OpenSSL-QUIC was dropped. A potential NULL dereference in Curl_h1_req_parse_read() was fixed along with a potential out-of-bounds read in OpenSSL debug logging. The build now enables NTLM authentication for compatibility with certain Exchange Server endpoints.

systemd 259.3 & 259.5: The notable fix in the 259.5 update stops systemd-update-helper from incorrectly skipping systemctl disable during package removal. A new clean-state command is introduced and triggered automatically at the end of any transaction installing unit files. The systemd-container subpackage now requires libarchive instead of tar for archive handling. Additional systemd-update-helper fixes address do_install_units() incorrectly returning an error when no units need preset, and the clean-state command itself is corrected to remove the full state directory rather than just a subdirectory. The 259.3 update was a major version upgrade. The libcap dependency was removed entirely, with its system call wrappers reimplemented directly in systemd.

GnuPG 2.5.18: This update adds support for deleting composite secret keys in gpg-agent and fixes armor parsing when no CRC is found. A recent regression in pkdecrypt with TPM RSA keys is resolved, and scdaemon adds support for D-Trust Card 6.1/6.4. The dirmngr key server search now prints all UID records for a key, which fixes a regression dating back to 2015.

Mesa 26.0.2: The release fixes visual corruption on RDNA 4 in DX11/DXVK titles like Mafia III, a GPU hang with PS epilogs and secondary command buffers, and missing L2 cache invalidation with streamout on GFX12. A Counter-Strike 2 visual glitch regression on Intel A770 is resolved. The Panfrost Bifrost compiler fixes a failure from incorrect vectorization and spill placement issues. An OpenGL VRAM memory leak when setting uniform variables is corrected. X11 shared memory attachment checks are added across drisw, EGL, GLX, and Vulkan WSI paths to prevent allocation failures.

GTK3 3.24.52: This update fixes a Firefox crash at gdk_wayland_drag_context_manage_dnd() when a toplevel Wayland surface is missing, and resolves wild strobing in multi-window mode. A refresh rate calculation overflow on 32-bit targets is corrected, and recolored icon images are no longer constantly reloaded. Accessibility events for unfocused GtkTreeView widgets are fixed, and XKB initialization failures on Wayland are now handled more gracefully.

libtpms 0.10.2: This update fixes a memory leak by freeing the KDF context and resolves incorrect IV retrieval when using OpenSSL 3.0 or later. A build fix for compatibility with newer glibc is also included. For Tumbleweed users running TPM-based virtualization with QEMU or swtpm, this is a security-relevant update.

xfsprogs 6.18.0: This update spans three releases. The mkfs.xfs tool gains several improvements including the ability to configure desired maximum atomic write sizes, AG size alignment based on atomic write capabilities, autodetection of log stripe unit for external log devices, and new default features enabled out of the box with a 2025 LTS config file. Zoned filesystem support is refined with fixes for zone capacity checks on sequential zones and improved default maximum open zones. The proto subsystem adds the ability to populate a filesystem directly from a directory. xfs_scrub removes its EXPERIMENTAL warnings and fixes a null pointer crash in scrub_render_ino_descr. Cross-architecture log CRC mismatches between i386 and other architectures are corrected, and libxfs gains support for reproducible filesystems using deterministic time and seed values. Deprecated sysctl knobs and mount options are removed. The Python dependency is also dropped from the main package since the xfs_protofile script is not essential.

Security Updates

Python 3.11.15:

- CVE-2025-11468: Fixes a header injection flaw in email header folding where long comments with unfoldable characters could allow injecting headers into email messages.
- CVE-2025-12084: Addresses quadratic complexity that could lead to denial of service when processing deeply nested documents.
- CVE-2025-6075: Resolves a performance degradation in os.path.expandvars() when user-controlled values are passed for environment variable expansion.
- CVE-2026-2297: Fixes an issue where CPython’s import hook for legacy .pyc files did not trigger sys.audit handlers and could potentially allow a security monitoring bypass.

bind 9.20.21:

- CVE-2026-1519: Fixes a flaw that could potentially lead to denial of service.
- CVE-2026-3104: Addresses a memory leak that could cause unbounded memory growth and an out-of-memory condition.
- CVE-2026-3119: Resolves an issue where an authenticated query could cause the server to terminate unexpectedly.
- CVE-2026-3591: Fixes a use-after-return flaw that could allow an attacker to bypass ACL restrictions via crafted DNS requests.

Linux kernel 6.19.8:

- CVE-2026-23230: Fixes a vulnerability in the ksmbd kernel SMB server.
- CVE-2026-23220: Addresses an infinite loop caused by next_smb2_rc in ksmbd.
- CVE-2026-23226: Resolves a missing lock to protect ksmbd channel list.
- CVE-2026-23228: Fixes a leak of active_num_conn in the ksmbd SMB server.
- CVE-2025-71231: Addresses an out-of-bounds index in the crypto IAA driver.
- CVE-2026-23222: Fixes a memory allocation issue in the crypto OMAP driver.
- CVE-2026-23229: Resolves a missing spinlock protection in the crypto virtio driver.
- CVE-2025-71237: Fixes a potential block overflow in nilfs2 that could cause corruption.
- CVE-2025-71230: Addresses an issue where HFS superblock info was not always cleaned up properly.
- CVE-2025-71229: Resolves an alignment fault in the rtw88 WiFi driver.
- CVE-2025-71236: Fixes missing validation before freeing resources in the qla2xxx SCSI driver.
- CVE-2025-71235: Addresses a module unload race condition in the qla2xxx SCSI driver.
- CVE-2025-71232: Resolves a memory leak in an error path in the qla2xxx SCSI driver.
- CVE-2026-23225: Fixes an incorrect CID ownership assumption in the scheduler mmcid subsystem.
- CVE-2026-23221: Addresses a use-after-free in the fsl-mc bus driver override handling.
- CVE-2026-23224: Resolves a use-after-free in erofs for file-backed mounts.
- CVE-2026-23223: Fixes a use-after-free in XFS btree block owner checking.
- CVE-2026-23227: Addresses a missing lock protection in the Exynos VIDI DRM driver.
- CVE-2025-71233: Resolves an issue with asynchronous sub-group creation in PCI endpoint.
- CVE-2025-71234: Fixes a slab out-of-bounds access in the rtl8xxxu WiFi driver.
- CVE-2025-71238: Addresses a double-free in the qla2xxx SCSI driver’s bsg_done handler.
- CVE-2026-23236: Fixes improper ioctl memory copy in the smscufx framebuffer driver.
- CVE-2026-23235: Resolves an out-of-bounds access in f2fs sysfs attribute handling.
- CVE-2026-23234: Addresses a use-after-free in f2fs write end I/O handling.

libtpms 0.10.2:

- CVE-2026-21444: Fixes a flaw in libtpms that weakened encryption and decryption.

LibVNCServer:

- CVE-2026-32853: Fixes a vulnerability where a crafted message could lead to information disclosure or denial of service.
- CVE-2026-32854: Addresses an issue where crafted requests could cause a denial of service.

freeipmi 1.6.17:

- CVE-2026-33554: Resolves improper memory handling and data validation that could lead to stack buffer overflows and acceptance of malformed payloads.

nghttp2 1.68.1:

- CVE-2026-27135: Fixes an assertion failure caused by missing state validation.

ImageMagick 7.1.2.15:

- CVE-2026-24481: Fixes a heap information disclosure when processing malformed PSD files.
- CVE-2026-25794: Addresses a heap buffer overflow via integer overflow when writing images with large dimensions.
- CVE-2026-25796: Resolves a memory leak that could be exploited for denial of service.
- CVE-2026-25637: Fixes a memory leak in the ASHLAR image writer that could lead to denial of service.
- CVE-2026-25576: Addresses a heap buffer over-read in multiple raw image format handlers potentially disclosing sensitive information.
- CVE-2026-26983: Fixes a NULL pointer dereference in the MSL interpreter that could cause a crash.
- CVE-2026-26284: Resolves a use-after-free that could lead to denial of service or code execution.
- CVE-2026-26283: Addresses an infinite loop in the JPEG encoder that could cause denial of service.
- CVE-2026-25965: Fixes a path traversal that could allow reading arbitrary files on the system.
- CVE-2026-25967: Addresses improper encoding or escaping of output that could allow arbitrary command execution.
- CVE-2026-25989: Fixes an integer overflow in the internal SVG decoder that could cause denial of service.
- CVE-2026-25968: Resolves a memory leak in coders that write raw pixel data potentially leading to denial of service.
- CVE-2026-24485: Addresses an out-of-bounds read that could cause a crash.
- CVE-2026-25985: Fixes unbounded resource allocation in the SVG decoder that could lead to denial of service.
- CVE-2026-25987: Resolves an integer overflow in the SVG decoder potentially causing denial of service.
- CVE-2026-25966: Addresses a security policy bypass via fd: pseudo-filenames allowing stdin/stdout access.
- CVE-2026-25799: Fixes an out-of-bounds read that could disclose memory contents.
- CVE-2026-25798: Resolves an out-of-bounds read potentially leading to information disclosure or a crash.
- CVE-2026-25795: Fixes a NULL pointer dereference that could cause a denial of service.
- CVE-2026-26066: Addresses resource exhaustion when writing IPTCTEXT that could lead to denial of service.
- CVE-2026-25638: Resolves a memory leak that could be exploited for denial of service.
- CVE-2026-25797: Fixes a code injection issue that could allow arbitrary code execution.
- CVE-2026-25897: Addresses a heap buffer overflow in the sun decoder potentially causing a crash.
- CVE-2026-25970: Resolves a memory leak that could lead to denial of service via image processing.
- CVE-2026-25982: Fixes a use-after-free that could lead to denial of service or code execution.
- CVE-2026-25983: Addresses an out-of-bounds read in the PCD coder that could disclose memory contents.
- CVE-2026-25898: Resolves an out-of-bounds read that could cause a crash or information disclosure.
- CVE-2026-25971: Fixes a memory leak in the text coder that could lead to denial of service.
- CVE-2026-25988: Addresses a use-after-free in the meta coder potentially allowing code execution.
- CVE-2026-25969: Resolves a memory leak that could lead to denial of service via image processing.
- CVE-2026-25986: Fixes a vulnerability that could lead to denial of service when processing crafted images.

expat 2.7.5:

- CVE-2026-32776: Fixes a NULL pointer dereference when handling empty external parameter entity content.
- CVE-2026-32777: Addresses an infinite loop that could lead to denial of service.
- CVE-2026-32778: Resolves a NULL pointer dereference after an earlier out-of-memory condition.

TigerVNC:

- CVE-2026-34352: Fixes incorrect permissions that could allow other users to observe or manipulate screen contents, or cause a crash.

clamav 1.5.2:

- CVE-2026-20031: Fixes an error handling bug that could crash the program and cause a denial of service.

FreeRDP 3.24.1:

- CVE-2026-29774: Fixes a client-side heap buffer overflow.
- CVE-2026-29775: Addresses an off-by-one boundary check in the bitmap cache subsystem that could cause out-of-bounds read/write.
- CVE-2026-29776: Resolves an integer underflow that could lead to a crash.
- CVE-2026-31806: Fixes a heap buffer overflow caused by unchecked bitmap dimensions from a malicious server.
- CVE-2026-31883: Addresses a size_t underflow leading to a heap buffer overflow via the RDPSND channel.
- CVE-2026-31884: Resolves a division-by-zero in the ADPCM decoders when nBlockAlign is 0, causing a crash.
- CVE-2026-31885: Fixes an out-of-bounds read in the ADPCM decoders due to missing predictor and step_index bounds checks.

giflib:

- CVE-2026-23868: Fixes a double-free vulnerability from a shallow copy that could lead to memory corruption.

curl 8.19.0:

- CVE-2026-1965: Fixes bad reuse of HTTP Negotiate connections that could lead to authentication bypass with wrong credentials.
- CVE-2026-3783: Addresses a token leak when following redirects with netrc credentials.
- CVE-2026-3784: Resolves wrong proxy connection reuse with different credentials, potentially exposing authenticated sessions.
- CVE-2026-3805: Fixes a use-after-free in SMB connection reuse that could lead to a crash or potential code execution.

QEMU 10.2.2:

- CVE-2026-2243: Fixes an out-of-bounds read in QEMU’s VMDK image handling that could lead to information disclosure or denial of service.
- CVE-2026-3196: Addresses an integer overflow that could allow a malicious guest to cause unbounded memory allocation and denial of service on the host.

udisks2:

- CVE-2026-26104: Fixes a missing authorization check that allowed unprivileged users to back up LUKS encryption headers and potentially expose sensitive cryptographic metadata.
- CVE-2026-26103: Addresses a missing authorization check that allowed unprivileged users to restore LUKS encryption headers, which could potentially render encrypted volumes inaccessible.

GVFS 1.58.2:

- CVE-2026-28296: Fixes a CRLF injection flaw in the FTP backend that could allow a remote attacker to inject arbitrary FTP commands via crafted file paths.

python-tornado6:

- CVE-2026-31958: Fixes a denial-of-service vulnerability where requests with thousands of parts could cause excessive CPU consumption.

libjxl 0.11.2:

- CVE-2026-1837: Fixes a memory corruption issue when processing crafted grayscale images with LCMS2 that could potentially lead to code execution or information disclosure.

util-linux:

- CVE-2026-3184: Addresses improper hostname canonicalization that could allow bypass of host-based PAM access control rules.

sdbootutil:

- CVE-2026-25701: Fixes an insecure temporary file vulnerability that could allow local users to access private information or manipulate boot configuration data.

ImageMagick 7.1.2.17:

- CVE-2026-32259: Fixes a stack-based buffer overflow when a memory allocation fails that could potentially allow writes past the end of a buffer.

GraphicsMagick:

- CVE-2026-25799: Fixes a flaw that could lead to a crash and denial of service.
- CVE-2026-28690: Fixes a stack buffer overflow vulnerability that could lead to a crash or potential code execution.
- CVE-2026-30883: Addresses a heap overflow when encoding a PNG image with an extremely large image profile.

libsoup2:

- CVE-2026-1760: Fixes improper handling of HTTP requests combining certain headers that could lead to HTTP request smuggling and potential denial of service.
- CVE-2026-1467: Addresses a lack of input sanitization that could lead to unintended or unauthorized HTTP requests.
- CVE-2026-1539: Resolves proxy authentication credentials being leaked via the Proxy-Authorization header when handling HTTP redirects.
- CVE-2026-0716: Fixes a flaw in WebSocket frame processing that could cause out-of-bounds memory reads, potentially leading to memory exposure or a crash.

freetype2 2.14.2:

- CVE-2026-23865: Fixes an integer overflow in the FreeType library that could allow an out-of-bounds read when parsing OpenType variable fonts.

exiv2 0.28.8:

- CVE-2026-25884: Fixes an out-of-bounds read in the CRW image parser when processing crafted image files.
- CVE-2026-27631: Addresses an integer overflow causing an uncaught exception that could lead to a crash and denial of service.
- CVE-2026-27596: Resolves an out-of-bounds read in preview handling that could cause a crash when processing crafted image files.
- CVE-2025-54080: Fixes an out-of-bounds read triggered when writing metadata into a crafted image file, potentially causing a crash.
- CVE-2025-55304: Addresses quadratic performance in ICC profile parsing that could lead to denial of service.
- CVE-2025-26623: Resolves a heap buffer overflow when writing metadata into a crafted image file, potentially allowing code execution.

Salt:

- CVE-2026-31958: Fixes a denial-of-service vulnerability where requests could cause excessive CPU consumption.

openexr 3.4.6:

- CVE-2026-27622: Fixes an out-of-bounds write that could potentially lead to code execution when processing crafted EXR files.

Users are advised to update to the latest versions to mitigate these vulnerabilities.

Conclusion

March 2026 was a month defined by refinement and security hardening across the openSUSE Tumbleweed stack. The three Plasma 6.6 point releases demonstrated KDE’s steady cadence of desktop polish, while the kernel’s progression from 6.19.5 to 6.19.9 kept hardware support and filesystem reliability moving forward. Security was a clear theme throughout the month, with FreeRDP, curl, libsoup2, and the kernel itself all receiving significant CVE attention alongside a broad sweep of image processing fixes across GraphicsMagick, ImageMagick, and exiv2. Under the hood, the jump to libxml2 2.15.2 marked a meaningful step forward in web standards alignment, and GStreamer 1.28.1 pushed multimedia capabilities forward with speech-to-text transcription and AV1 decoding support.

Slowroll Arrivals

Please note that these updates also apply to Slowroll and arrive on average 5 to 10 days after being released in a Tumbleweed snapshot. This monthly approach has been consistent for many months, ensuring stability and timely enhancements for users. Updated packages for Slowroll are regularly published in emails on the openSUSE Factory mailing list.

Contributing to openSUSE Tumbleweed

Stay updated with the latest snapshots by subscribing to the openSUSE Factory mailing list. Tumbleweed users who want to contribute or engage in detailed technical discussions can subscribe to the same mailing list. The openSUSE team encourages users to continue participating through bug reports, feature suggestions and discussions.

Your contributions and feedback make openSUSE Tumbleweed better with every update. Whether reporting bugs, suggesting features, or participating in community discussions, your involvement is highly valued.

Quick Update on the Package Version Tracking Feature in OBS

Closing Out a Roughly 8-Year Era

The openSUSE Leap 15 series is coming to an end after nearly eight years of providing a consistent community distribution that’s upgradable to SUSE’s enterprise product. Leap 15.6 will reach End of Life (EOL) at the close of this month, marking the end of an era, and will no longer receive maintenance or security updates going forward.

The Leap 15 journey began on May 25, 2018, when 15.0 was released as a fresh community build on top of SUSE Linux Enterprise 15. It brought a huge variety of new software along with an easy migration to SLE, transactional updates, server roles, scalable cloud images, and more.

What followed was an impressively long run of incremental releases from Leap 15.1 to 15.6. Each stable release aligned with its source- and binary-compatible SLE twin and delivered maintenance and security updates to users over several years.

The series far exceeded promises and ultimately spanned nearly eight years of active support. With Leap 15.6 going EOL, users who wish to continue receiving maintenance and security updates should upgrade to Leap 16. The Leap 16 series is expected to run through 16.6 in fall 2031, with 24 months of support for each point release.

Running an unsupported release means your system will no longer receive patches for vulnerabilities, which poses a real security risk over time. The upgrade path to Leap 16 is the recommended way to stay protected and supported.

You can download openSUSE Leap 16 and use the migration tool to upgrade.

Leap 15.6 itself received nearly 24 months of support, which extended the traditional support period of 18 months by about six months. With Leap 16, users can expect a full 24 months of community support per point release, which is a commitment that reflects the significant effort from maintainers to keep users protected.

Thank you to all the contributors, packagers, and users who made the Leap 15 series such a long-lasting and reliable platform. Here’s to the next chapter with Leap 16!

My new toy: April 1 syslog-ng performance tests

Almost 15 years ago, Balabit had a campaign, stating that syslog-ng could process 650k messages a second. Now I am happy to present 7 million EPS (events per second). Timing the announcement to April 1 is not a coincidence :-)

While the 650k EPS measurement was true, it was misleading. This value was measured right after syslog-ng 3.2 introduced multi-threading, in a lab environment, under optimal circumstances, using synthetic log messages. However, there was no fine print explaining this, just the statement that syslog-ng could process 650k EPS. It was fixed after a while, but it took years to recover from the effects of this marketing campaign, and engineers ten years later still had a nervous breakdown when someone mentioned “650k”. Why? Because from that moment, everyone expected syslog-ng to collect logs at that message rate in a production environment with complex configurations. Which was of course not the case.

Fast-forward to today, I’m happy to share that:

syslog-ng can collect logs at 7 million EPS

- Is this measurement value valid? Yes.
- Does it apply to real world? No.
- Does it sound good? Definitely :-)

My latest syslog-ng benchmark results

The tool: sngbench

I love playing with various non-x86 systems. I have various ARM, POWER, MIPS systems at home, and sometimes I access other architectures, like RISC-V, remotely. And, of course, not just different architectures, but different operating systems: various Linux distributions, macOS, FreeBSD, sometimes also other BSD variants. I’m a server guy, and for the past 15+ years: a syslog-ng guy. Sometimes I had access to an exotic system on the other side of the world only for less than an hour, but I almost always tested syslog-ng.

For many years I had a bunch of shell scripts and configs to benchmark syslog-ng performance. Not for real world production loads, but rather for comparing architectures and operating systems. I needed a script which could do measurements with minimal dependencies and do it quickly, in one go. This is how sngbench was born, based on my previous ugly scripts. It has quite a few advantages and shortcomings:

- Minimal dependencies: bash and syslog-ng
- No complex setup: everything runs on the same host
  - network bandwidth is not a limiting factor
  - loggen and syslog-ng processes are competing for resources
- Two bundled configurations: a performance tuned and the default syslog-ng.conf from openSUSE with minimal modifications to add a TCP source
- By default, very short (20 seconds) measurements, so disk I/O is not a limiting factor
- Many different test scenarios: from a single TCP connection to 4 * 128

Of course this describes just the “factory defaults”. You can easily change the test scenarios and configurations too.

How I reached 7 million EPS, and why it is not relevant

I was testing syslog-ng code which was not yet even merged to the development branch. First, I tested these patches with various settings. Along the way I remembered that Splunk guidelines mention so-rcvbuf tuning also for TCP connections. Previously I only used that for optimizing UDP performance. Now I have done it for TCP. Wonders happened :-)
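For readers who have not met receive-buffer tuning before: the so-rcvbuf() option in syslog-ng sets the size of the socket receive buffer, which corresponds to the SO_RCVBUF socket option. The Python sketch below is not a syslog-ng configuration and says nothing about the values used in these tests. It only shows the underlying socket call. The helper name and the 1 MiB request are made up for illustration, and the kernel is free to clamp or adjust whatever size is requested.

```python
import socket
from typing import Tuple


def request_rcvbuf(requested_bytes: int) -> Tuple[int, int]:
    """Ask the kernel for a different TCP receive buffer size.

    Returns the buffer size reported before and after the request.
    The kernel may clamp or round the value, so "after" is not
    guaranteed to equal requested_bytes.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        before = sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF)
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, requested_bytes)
        after = sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF)
    return before, after


if __name__ == "__main__":
    # 1 MiB is an arbitrary illustrative value, not a recommendation.
    before, after = request_rcvbuf(1024 * 1024)
    print(f"receive buffer: {before} bytes before, {after} bytes after the request")
```

A larger receive buffer gives the kernel more room to queue incoming data while the receiving process is busy, which is presumably why the setting matters when many connections send at a high rate.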

But, of course, the main question is: can you achieve this performance in production? TL;DR: No.

My tests are run from localhost. Network bandwidth is not an issue. Tests are run in short bursts. This is peak performance; when it comes to writing logs to files or forwarding to a cluster of Splunk or Elasticsearch endpoints around the clock, that would be slower. Also, in my fastest test case, logs came from four different loggen instances, over 32 TCP connections each, at a constant rate. In the real world, logs come in bursts and connections are opened and closed regularly.
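A little arithmetic puts the fastest test case in perspective. The sketch below assumes the load is spread evenly over all connections, which goes slightly beyond what "at a constant rate" states, and the function name is hypothetical.

```python
def per_connection_eps(total_eps, instances, connections_per_instance):
    """Average events per second carried by each TCP connection,
    assuming the load is spread evenly across all connections."""
    connections = instances * connections_per_instance
    return total_eps / connections


if __name__ == "__main__":
    # Figures from the text: 7 million EPS, four loggen instances,
    # 32 TCP connections each.
    share = per_connection_eps(7_000_000, 4, 32)
    print(f"{4 * 32} connections, about {share:,.0f} EPS per connection")

    # Ratio to the old 650k EPS campaign figure.
    print(f"{7_000_000 / 650_000:.1f} times the 650k figure")
```

Under that assumption each of the 128 connections carries roughly 55 thousand events per second, and the headline number is more than ten times the old 650k figure.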

Test environment and tests

I used my AI mini workstation with Fedora Linux 44 Beta. First, I took a baseline with the stock syslog-ng 4.11.0 included in the distribution. Then I used my syslog-ng git snapshot packages for Fedora from https://copr.fedorainfracloud.org/coprs/czanik/syslog-ng-githead/. Initially it also had jemalloc support compiled in. Later I disabled it and purely focused on the yet to be merged parallelize() optimizations from GitHub. I experimented with enabling and disabling parallelize(), adding various batch_size() values, and finally also so-rcvbuf().

AI in a miniature box :-)

This blog is part of a longer series about my adventures with my new machine and AI. You can reach me to discuss this blog on one of the contacts listed in the upper right corner. You can read the rest of the blogs under the toy tag.

Automating test management with QASE

I gave a proprietary tool a fair chance - and was not disappointed

The views expressed in this post are my own and do not represent the position of my employer. This post is not a commercial endorsement of QASE or any of its products.

I very seldom write about proprietary solutions and software. Not because I have any problem with them, but simply because I usually prefer open source solutions, and during my daily work and hobby projects I use almost exclusively open source software.
