Playing with Common Expression Language

Common Expression Language (CEL) is an expression language created by Google. It lets you define constraints that can be used to validate input data.

This language is being used by some open source projects and products, like:

- Google Cloud Certificate Authority Service

- Envoy

- There’s even a Kubernetes Enhancement Proposal that would use CEL to validate Kubernetes’ CRDs.

I’ve been looking at CEL for some time, wondering how hard it would be to write Kubewarden validation policies using this expression language.

Some weeks ago SUSE Hackweek 21 took place, which gave me some time to play with this idea.

This blog post describes the first step of this journey. Two other blog posts will follow.

Picking a CEL runtime

Currently the only mature implementations of the CEL language are written in Go and C++.

Kubewarden policies are implemented using WebAssembly modules.

The official Go compiler isn’t yet capable of producing WebAssembly modules that can be run outside of the browser. TinyGo, an alternative implementation of the Go compiler, can produce WebAssembly modules targeting the WASI interface. Unfortunately TinyGo doesn’t yet support the whole Go standard library. Hence it cannot be used to compile cel-go.

Because of that, I was left with no other choice than to use the cel-cpp runtime.

C and C++ can be compiled to WebAssembly, so I thought everything would be fine.

Spoiler alert: this didn’t turn out to be “fine”, but that’s for another blog post.

CEL and protobuf

CEL is built on top of protocol buffer types. That means CEL expects the input data (the one to be validated by the constraint) to be described using a protocol buffer type. In the context of Kubewarden this is a problem.

Some Kubewarden policies focus on a specific Kubernetes resource; for example, all the ones implementing Pod Security Policies are inspecting only Pod resources. Others, like the ones looking at labels or annotations attributes, are instead evaluating any kind of Kubernetes resource.

Forcing a Kubewarden policy author to provide a protocol buffer definition of the object to be evaluated would be painful. Luckily, CEL evaluation libraries are also capable of working against free-form JSON objects.

The grand picture

The long term goal is to have a CEL evaluator program compiled into a WebAssembly module.

At runtime, the CEL evaluator WebAssembly module would be instantiated and would receive as input three objects:

- The validation logic: a CEL constraint

- Policy settings (optional): these would provide a way to tune the constraint. They would be delivered as a JSON object

- The actual object to evaluate: this would be a JSON object

Having set the goals, the first step is to write a C++ program that takes as input a CEL constraint and applies that against a JSON object provided by the user.

There’s going to be no WebAssembly today.

Taking a look at the code

In this section I’ll go through the critical parts of the code. I’ll do that to help other people who might want to make a similar use of cel-cpp.

There’s basically zero documentation about how to use the cel-cpp library. I had to learn how to use it by looking at the excellent test suite. Moreover, the topic of validating a JSON object (instead of a protocol buffer type) isn’t covered by the tests. I just found some tips inside of the GitHub issues and then I had to connect the dots by looking at the protocol buffer documentation and other pieces of cel-cpp.

TL;DR The code of this POC can be found inside of this repository.

Parse the CEL constraint

The program receives a string containing the CEL constraint and has to use it to create a CelExpression object.

This is pretty straightforward, and is done inside of these lines of the evaluate.cc file.

As you will notice, cel-cpp makes use of the Abseil library. Many cel-cpp APIs return absl::StatusOr<T> objects, which are handled with the following pattern:

    // invoke API
    auto parse_status = cel_parser::Parse(constraint);
    if (!parse_status.ok())
    {
      // handle error
      std::string errorMsg = absl::StrFormat(
          "Cannot parse CEL constraint: %s",
          parse_status.status().ToString());
      return EvaluationResult(errorMsg);
    }

    // Obtain the actual result
    auto parsed_expr = parse_status.value();

Handle the JSON input

cel-cpp expects the data to be validated to be loaded into a CelValue object.

As I said before, we want the final program to read a generic JSON object as input data. Because of that, we need to perform a series of transformations.

First of all, we need to convert the JSON data into a protobuf::Value object. This can be done using the protobuf::util::JsonStringToMessage function.

This is done by these lines of code.

Next, we have to convert the protobuf::Value object into a CelValue one. The cel-cpp library doesn’t offer any helper. As a matter of fact, one of the oldest open issues of cel-cpp is exactly about that.

This last conversion is done using a series of helper functions I wrote inside of the proto_to_cel.cc file. The code relies on the introspection capabilities of protobuf::Value to build the correct CelValue.

Evaluate the constraint

Once the CEL expression object has been created, and the JSON data has been converted into a CelValue, there’s only one last thing to do: evaluate the constraint against the input.

First of all we have to create a CEL Activation object and insert the CelValue holding the input data into it. This takes just a few lines of code.

Finally, we can use the Evaluate method of the CelExpression instance and look at its result. This is done by these lines of code, which include the usual pattern that handles absl::StatusOr<T> objects.

The actual result of the evaluation is going to be a CelValue that holds a boolean value.

Building

This project uses the Bazel build system. I had never used Bazel before, which made this another interesting learning experience.

A recent C++ compiler is required by cel-cpp. You can use either gcc (version 9+) or clang (version 10+). Personally, I’ve been using clang 13.

Building the code can be done in this way:

    CC=clang bazel build //main:evaluator

The final binary can be found under bazel-bin/main/evaluator.

Usage

The program loads a JSON object called request which is then embedded into a bigger JSON object.

This is the input received by the CEL constraint:

    {
      "request": < JSON_OBJECT_PROVIDED_BY_THE_USER >
    }

The idea is to later add another top level key called settings. This one would be used by the user to tune the behavior of the constraint.

Because of that, the CEL constraint must access the request values by going through the request key.

This is easier to explain by using a concrete example:

    ./bazel-bin/main/evaluator \
      --constraint 'request.path == "v1"' \
      --request '{ "path": "v1", "token": "admin" }'

The CEL constraint is satisfied because the path key of the request is equal to v1.

On the other hand, this evaluation fails because the constraint is not satisfied:

    $ ./bazel-bin/main/evaluator \
      --constraint 'request.path == "v1"' \
      --request '{ "path": "v2", "token": "admin" }'
    The constraint has not been satisfied

The constraint can also be loaded from a file. Create a file named constraint.cel with the following contents:

    !(request.ip in ["10.0.1.4", "10.0.1.5", "10.0.1.6"]) &&
      ((request.path.startsWith("v1") && request.token in ["v1", "v2", "admin"]) ||
       (request.path.startsWith("v2") && request.token in ["v2", "admin"]) ||
       (request.path.startsWith("/admin") && request.token == "admin" &&
        request.ip in ["10.0.1.1", "10.0.1.2", "10.0.1.3"]))

Then create a file named request.json with the following contents:

    {
      "ip": "10.0.1.4",
      "path": "v1",
      "token": "admin"
    }

Then run the following command:

    ./bazel-bin/main/evaluator \
      --constraint_file constraint.cel \
      --request_file request.json

This time the constraint will not be satisfied.

Note: I find the _ symbols inside of the flags a bit weird. But this is what is done by the Abseil flags library that I experimented with. 🤷

Let’s evaluate a different kind of request:

    ./bazel-bin/main/evaluator \
      --constraint_file constraint.cel \
      --request '{"ip": "10.0.1.1", "path": "/admin", "token": "admin"}'

This time the constraint will be satisfied.
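To make it easier to see why the two requests above give different results, here is a hand translation of constraint.cel into plain C++. This is only an illustrative sketch: it is not how the evaluator works (the real program parses and evaluates the CEL expression through cel-cpp), and the `Request` struct and the `in_list`, `starts_with` and `constraint_satisfied` helpers are hypothetical names made up for this example.

```cpp
#include <algorithm>
#include <string>
#include <vector>

// Hypothetical stand-in for the JSON request object; not part of the POC code.
struct Request {
    std::string ip;
    std::string path;
    std::string token;
};

// Mirrors the CEL expression `x in [...]` for a list of strings.
static bool in_list(const std::string& x, const std::vector<std::string>& xs) {
    return std::find(xs.begin(), xs.end(), x) != xs.end();
}

// Mirrors the CEL expression `s.startsWith(prefix)`.
static bool starts_with(const std::string& s, const std::string& prefix) {
    return s.compare(0, prefix.size(), prefix) == 0;
}

// Hand translation of constraint.cel: deny three IPs outright, then allow
// the request only if one of the three path/token rules matches.
bool constraint_satisfied(const Request& r) {
    return !in_list(r.ip, {"10.0.1.4", "10.0.1.5", "10.0.1.6"}) &&
           ((starts_with(r.path, "v1") && in_list(r.token, {"v1", "v2", "admin"})) ||
            (starts_with(r.path, "v2") && in_list(r.token, {"v2", "admin"})) ||
            (starts_with(r.path, "/admin") && r.token == "admin" &&
             in_list(r.ip, {"10.0.1.1", "10.0.1.2", "10.0.1.3"})));
}
```

With this translation, the request stored in request.json is rejected by the very first clause, because 10.0.1.4 is in the denied list, while the /admin request coming from 10.0.1.1 passes the first clause and matches the third rule.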

Summary

This has been a stimulating challenge.

Getting back to C++

I hadn’t written big chunks of C++ code in a long time! Actually, I never had a chance to look at the latest C++ standards. I gotta say, lots of things changed for the better, but I still prefer to pick other programming languages 😅

Building the universe with Bazel

I had prior experience with autoconf & friends, qmake and cmake, but I had never used Bazel before.

As a newcomer, I found the documentation of Bazel quite good. I appreciated how easy it is to consume libraries that are using Bazel. I also like how Bazel can solve the problem of downloading dependencies, something you had to solve on your own with cmake and similar tools.

The concept of building inside of a sandbox, with all the dependencies vendored, is interesting but can be kinda scary. Try building this project and you will see that Bazel seems to be downloading the whole universe. I’m not kidding, I’ve spotted a Java runtime, a Go compiler plus a lot of other C++ libraries.

The Bazel build command shows a nice progress bar. However, the number of tasks to be done keeps growing during the build process. It kinda reminded me of the old Windows progress bar!

I gotta say, I regularly have this feeling of “building the universe” with Rust, but Bazel took that to the next level! 🤯

Code spelunking

Finally, I had to do a lot of spelunking inside of different C++ code bases: Envoy, protobuf’s C++ implementation, cel-cpp and Abseil to name a few. This kind of activity can be a bit exhausting, but it’s also a great way to learn from others.

What’s next?

Well, in a couple of weeks I’ll blog about my next step of this journey: building C++ code to standalone WebAssembly!

Now I need to take some deserved vacation time 😊!

⛰️ 🚶👋

Community Work Group Discusses Next Edition

Members of openSUSE had a visitor for a recent Work Group (WG) session that provided the community an update from one of the leaders focusing on the development of the next generation distribution.

SUSE and the openSUSE community have a steering committee and several Work Groups (WG) collectively innovating what is being referred to as the Adaptable Linux Platform (ALP).

SUSE’s Frederic Crozat, who is one of the ALP architects and part of the ALP steering committee, joined in the exchange of ideas and opinions and provided some insight to the group about moving technical decisions forward.

The vision is to take a step beyond what SUSE does with modules, as in SUSE Linux Enterprise (SLE) 15. This is not really seen on the openSUSE side. On the SLE side, it’s a bit different, but the point is to be more flexible and agile with development. The way to get there is not yet fully decided, but one thing that is certain is that containerization is one of the easiest ways to ensure adaptability.

“If people have their own workloads in containers or VMs, the chance of breaking their system is way lower,” openSUSE Leap release manager Lubos Kocman pointed out in the session. “We’ll need to make sure that users can easily search and install ‘workloads’ from trusted parties.”

In some cases, people break their systems by consuming software from outside of the distribution repository. ALP has sometimes been characterized as relying strictly on containers such as Flatpaks, but it is better to refer to these items as workloads. There were some efforts planned for Hack Week to provide documentation regarding the workload builds.

There was confirmation that there will be no migration path from SLES to ALP, which most think would mean the same for Leap to ALP, but this does not necessarily apply for openSUSE as people are not being stopped from writing upgradeable scripts.

Work on the community edition is planned after the proof of concept, but the community is actively involved in developing the proof of concept. One point brought up was that the initial build will target Intel and then expand to other architectures later.

The community platform is being referred to as openSUSE ALP during its development cycle with SUSE to be concise with planning the next community edition.

YaST Development Report - Chapter 5 of 2022

We have been a bit silent lately in this blog, but there are good reasons for it. As you know, the YaST Team is currently involved in many projects and that implies we had to constantly adapt the way we work. That left us little time for blogging. But now we have reached enough stability and we hope to recover a more predictable and regular cadence of publications.

So let’s start with this post including:

- Some notes about the new scope of the blog

- The announcement of D-Installer 0.4

- A brief introduction to Iguana, a container-capable boot image

- Progress on containerized YaST

- Some Cockpit news

Adjusting the Scope

As you know, SUSE is developing the next generation of the SUSE Linux family under the code-name ALP (Adaptable Linux Platform). If you are following the activity on that front, you also know several so-called work groups have been constituted to work on different areas.

The YaST Team is deeply involved in two of those work groups, the ones named “1:1 System Management” and “Installation / Deployment”. You can read more details about the mission of each group and the technologies we are developing in the wiki page linked in the previous paragraph.

Since this blog is a well-established communication channel with the (open)SUSE users, we decided we will use it to report the progress on all the projects related to those work groups. That goes beyond the scope of YaST itself and even beyond the scope of the YaST Team, since the mentioned work groups also include other SUSE and openSUSE colleagues. But we are sure our readers will equally enjoy the content.

D-Installer Reaches Version 0.4

Let’s start with an old acquaintance of this blog. In several previous posts we have already described D-Installer, our effort to expose the power of YaST through a more reusable and modern set of interfaces. We recently reached an important milestone in its development with the first version including a multi-process architecture. In previous versions, the user interface could not respond to user interaction if any of the D-Installer components was busy (reading the repository metadata, installing packages, etc.). The new D-Installer v0.4 includes the first steps to definitively solve that problem and also other interesting features you can check in this release announcement. There are even a couple of videos to see it in action without risking your systems!

As you can see in the first of those videos, we improved the product selection screen and now D-Installer can download and install Tumbleweed, Leap 15.4 or Leap Micro 5.2.

But a new YaST-related piece of software cannot be really considered as fully released until it is submitted to openSUSE Tumbleweed. We plan to do that in the upcoming days, to ensure future development of D-Installer remains fully integrated with our beloved rolling distribution.

A New Reptile in the Family

Of course, D-Installer needs to run on top of a working Linux system. That could be the openSUSE LiveCD (as we do to test the prototypes) or some kind of minimal installation media. What if that minimal system is just a container completely tailored to execute D-Installer? It could work as long as you have a certain initrd (i.e. boot image) with the capability of grabbing and executing containers. Say hello to Iguana.

Iguana is at an early stage of development and we cannot guarantee it will keep its current form or name, but it’s already able to boot on a virtual machine (and likely on real hardware) and execute a predefined container. We tried to run a containerized version of D-Installer and it certainly works! We plan to evolve the concept and make Iguana able to orchestrate several containers, which will give us a very flexible tool for installing or fixing a system in all kinds of situations.

Progress on Containerized YaST

Just as we are looking into D-Installer as a way to reuse the YaST capabilities for system installation, you know we are also looking into containerization as a way to expand the YaST scope regarding system configuration. And we also have some news on that front.

First of all, we adapted more YaST modules to make them work from a container. As a result, you can now access all this functionality:

- Configuration of timezone, keyboard layout and language

- Management of software and repositories

- Firewall setup

- Configuration of iSCSI devices (iSCSI LIO target)

- Management of system services

- Inspection of the systemd journal

- Management of users and groups

- Printers configuration

- Administration of DNS server

We are working on adapting more YaST modules as we write this. We hope to soon add boot loader or Kdump configuration to the portfolio. Maybe even the YaST Partitioner if everything goes fine.

On the other hand, we restructured the container images to rely on SLE BCI, which results in smaller and better supported images. But that was just a first step: we are now actively working on reducing the size of the current images even further. Stay tuned for more news and some numbers.

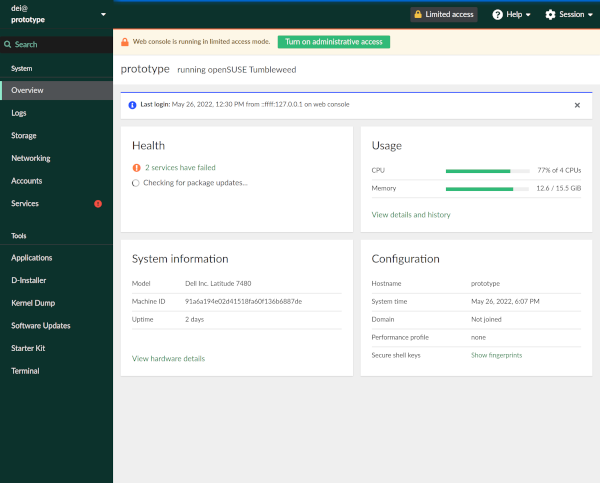

Cockpit with a Green Touch

Although using a containerized version of YaST will always be an option, it is expected that the main tool for performing interactive administration of an individual ALP host will be Cockpit. So we are also investing in improving the Cockpit experience on (open)SUSE systems. And nothing gives a better experience than a properly green user interface!

We improved the theming support for Cockpit (in an unofficial way, not adopted by upstream) and used that new support to make it look better thanks to the new cockpit-suse-theme package.

We took the opportunity to update the version of all Cockpit packages available in openSUSE Tumbleweed. So if you are a Tumbleweed user, this may be a good time to give Cockpit a first try!

I’ll Be Back!

As mentioned at the beginning of this post, we want to get back to the good habit of posting regular status updates, although they will no longer be so focused on (Auto)YaST. In exchange you will get news about Cockpit, D-Installer, Iguana and containers. So stay tuned for more fun!

MicroOS Desktop Use to Help with ALP Feedback

Participants from the openSUSE community working on the upcoming release of the Adaptable Linux Platform (ALP) encourage people to try openSUSE MicroOS Desktop to gain user perspectives on its applicability.

Users are encouraged to try out MicroOS Desktop by installing it and using it on a laptop or workstation for a week or so. By doing this, users develop a frame of reference for how ALP can progress; the community wants to gain feedback about what users think about ALP’s usability, how it fits user workflows and more. The community would like to see critiques and evaluations that work for users. People are encouraged to send feedback to the ALP-community-wg mailing list.

The temporary use of the MicroOS Desktop will help developers assess how to move forward with ALP’s Proof of Concept (PoC).

Currently MicroOS Desktop offers both GNOME and KDE’s Plasma as options.

ALP’s PoC does not currently have a Desktop requirement, but one is expected to land in a snapshot shortly after the PoC.

Several workgroups (WG) are involved and engineers are aiming for an initial PoC release of ALP in the fall. There are also plans to do an ALP Workshop and Install Fest (as a hybrid in-person/virtual event) during the openSUSE.Asia Summit 2022 if the ALP PoC release becomes available before the summit, which is on Sept. 30 and Oct. 1.

Discussions about flatpaks and how they will fit into the wider ALP ecosystem alongside RPMs and other packages continue among community members and stakeholders.

SCM Bridge support for the SCM/CI integration

openSUSE Tumbleweed – Review of the week 2022/28

Dear Tumbleweed users and hackers,

During this week we managed to release a snapshot every day. Granted, some were relatively small, but overall, there were some nice updates in the 7 snapshots published (0708…0714).

The most relevant changes were:

- Mozilla Firefox 102.0.1

- KDE Frameworks 5.96.0

- KDE Gear 22.04.3

- KDE Plasma 5.25.3

- Salt: fixed runtime with python-pyzmq update

- GNOME 42.3

- systemd 251.2

- GCC 12.1.1

- libvirt 8.5.0

- OpenSSL 1.1.1q (CVE-2022-2097, boo#1201099)

- Linux kernel: simpledrm has been re-enabled

- Perl 5.36.0

The queue is not very long, but that can change overnight. Currently, the things staged include:

- Linux kernel 5.18.11

- MicroOS Desktop “GNOME” reaches RC quality

- Pipewire 0.3.55 + patch: fixes an issue where, after switching TTYs and coming back, there are sometimes no active audio devices (boo#1201349)

Making unsorted lookups in Calc fast

The VLOOKUP spreadsheet function by default requires the searched data to be sorted, and in that case it performs a fast binary search. If the data is not sorted (for example if it would be impractical to have the data that way), it is possible to explicitly tell VLOOKUP that the data is not sorted, in which case Calc did a linear one-by-one lookup. And there are other functions such as COUNTIF or SUMIF that essentially do a lookup too, and those cannot even be told that the data is sorted and so they processed the data linearly. With large spreadsheets this can actually take a noticeably long time. Bug reports such as tdf#139444, tdf#144777 or tdf#146546 say operations in such spreadsheets take minutes to complete, or even "freeze".

I wanted to do something about those for quite a while, as with the right idea making those much faster should actually be fairly simple. And the simple idea I had was to let Calc sort the data first and then use fast binary search. These documents usually do lookups in the same fixed range of cells, so the linear search in the same unsorted data was rather a waste when done repeatedly. Surely Calc should be able to sort the data just once, cache it and then use that cached sort order repeatedly. In fact VLOOKUP already had a cache for results of lookup in the same area, used when doing lookup in the same row but different columns.
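The idea can be sketched in a few lines of C++. This is only a minimal illustration under my own assumptions, not the actual LibreOffice code: the `SortedLookupCache` class and its member names are hypothetical, the column holds plain strings, and only exact matches are handled. The column is left untouched; what gets cached is a sorted list of row indices, which repeated lookups then search with binary search.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <string>
#include <utility>
#include <vector>

// Hypothetical helper (not LibreOffice code): caches the sort order of an
// unsorted column so that repeated lookups can use binary search.
class SortedLookupCache {
public:
    // Returned by find_first() when the key is not present.
    static constexpr std::size_t npos = static_cast<std::size_t>(-1);

    explicit SortedLookupCache(std::vector<std::string> column)
        : column_(std::move(column)), order_(column_.size()) {
        // order_ starts as 0, 1, 2, ... and is sorted by the cell contents.
        // The stable sort keeps equal cells in their original row order.
        std::iota(order_.begin(), order_.end(), std::size_t{0});
        std::stable_sort(order_.begin(), order_.end(),
                         [this](std::size_t a, std::size_t b) {
                             return column_[a] < column_[b];
                         });
    }

    // Row of the first cell equal to key (like an unsorted exact-match lookup).
    std::size_t find_first(const std::string& key) const {
        auto it = lower(key);
        if (it == order_.end() || column_[*it] != key) return npos;
        return *it;
    }

    // Number of cells equal to key (like an exact-match COUNTIF).
    std::size_t count(const std::string& key) const {
        auto hi = std::upper_bound(order_.begin(), order_.end(), key,
                                   [this](const std::string& k, std::size_t row) {
                                       return k < column_[row];
                                   });
        return static_cast<std::size_t>(hi - lower(key));
    }

private:
    // First position in the cached order whose cell is not less than key.
    std::vector<std::size_t>::const_iterator lower(const std::string& key) const {
        return std::lower_bound(order_.begin(), order_.end(), key,
                                [this](std::size_t row, const std::string& k) {
                                    return column_[row] < k;
                                });
    }

    std::vector<std::string> column_;  // original, unsorted cell contents
    std::vector<std::size_t> order_;   // row indices sorted by cell content
};
```

Building the cache costs one O(n log n) sort, after which each lookup is O(log n) instead of O(n). That trade-off only pays off when the same range is searched many times, which is the situation described above.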

I finally found the time to do something about this when SUSE filed a bug with Collabora about their internal documents freezing on load and then crashing after 10 minutes. The LO thread pool class has a 10-minute timeout as a safety measure, after which it aborts, and the large number of lookups in the documents actually managed to exceed that timeout. So I can't actually say how slow it was before :), but I can quote Gerald Pfeifer from SUSE reporting the final numbers:

| Before | After |

|---|---|

| 76m:56s CPU time (crash) | 9s CPU time (6s clock time) |

| 126m:23s CPU time (crash) | 25s CPU time (15s clock time) |

| 160m+ CPU time (crash) | 38s CPU time (23s clock time) |

| 8m:56s CPU time (8m:56s clock time) | 14s CPU time (5s clock time) |

This work is available in LibreOffice 7.4, and the TDF bug reports show similar improvements. In fact tdf#144777, titled "countifs() in Calc is slower than Excel's countifs()", now has a final comment saying that MS Office 2021 can do a specific document in 26s and LO 7.4 can do it in 2s. Good enough, I guess :).

The syslog-ng insider 2022-07: RHEL 9; disk-buffer; Microsoft Linux;

The July syslog-ng newsletter is now on-line:

- RHEL 9 syslog-ng news

- How does the syslog-ng disk-buffer work?

- Installing syslog-ng on Microsoft Linux

It is available at https://www.syslog-ng.com/community/b/blog/posts/the-syslog-ng-insider-2022-06-rhel-9-disk-buffer-microsoft-linux


openqa: asset download request but no domains passlisted

Symptom

When posting a job, you see an error message of the following kind:

$ openqa-cli api -X POST isos ...

403 Forbidden

Asset download requested but no domains passlisted! Set download_domains.

Solution

My Favorite IT Security Event: Pass the SALT

“Pass the SALT” (PTS) is a small IT security conference in Lille, France. It has fewer participants than the RSA conference has speakers. I gave talks at both events. RSA is a much more prestigious event, but I still prefer PTS. Why?

Small Is Beautiful

As you could guess from my introduction, PTS is a small event. It is run by volunteers. It is also a free event thanks to sponsors. The small size has many advantages. There are not many parallel tracks competing for your attention. There is a main track and a workshop track. No need for buzzwords, for loud marketing of talks, as most people will be there anyway. Instead of attention seeking, speakers can focus on technical content.

The focus of PTS is open source security software, which is a nice coincidence, as I work on two open source software projects. Sudo is definitely security focused: it lets you control access to your hosts and log access. While syslog-ng is not strictly security focused, it is also often used by infosec. Commercial software and services are of course mentioned by speakers while introducing themselves, but the focus is open source.

Small also means that there is a much stronger feeling of community than at larger events. The speaker’s dinner is fantastic. Not just because of the food served, but also because you can talk to many like-minded people who are experts in their fields. There are always some old friends, but new people as well. The various breaks and the social event also gave us lots of possibilities to discuss not just security, but also Life, the Universe and Everything :-) I always feel a bit lost when there are hundreds or thousands of people around me. However, at PTS I always feel comfortable. Of course, as a strong introvert, I still regularly need some time alone. The conference is in a beautiful environment, so it is easy to take a quick walk and recharge before the next block of talks starts.

Small also means much more and much better feedback after talks. With many parallel tracks, most people run to the next talk once yours is over. With just one track, even though there were two more talks without a break after my talk, people came to me to discuss sudo and syslog-ng in the breaks. The latest major version of sudo, 1.9.0, incorporated much of the feedback I received at Pass the SALT in 2019. This kind of in-depth discussion with users is almost completely missing at larger events.

sudo logs for blue teamers

My talk at PTS combined the two software projects I am working on. The primary focus was on the very latest sudo features that arrived in minor versions after the 1.9.0 release. Many of these are logging related, so I also included syslog-ng and demonstrated how you can work with sudo logs in syslog-ng. Based on the feedback I received at the conference, it is much easier to work with sudo logs, or JSON logs in general using syslog-ng than with most other logging software.

You can watch my talk at https://passthesalt.ubicast.tv/videos/sudo-logs-for-blue-teamers/.

However, if you are like me and hate videos, here is a blog covering most of the things I talked about at the conference: https://www.sudo.ws/posts/2022/05/sudo-for-blue-teams-how-to-control-and-log-better/


Some of my favorite talks

Every talk was really interesting, but of course not all of them were relevant to me. Below I collected some of my favorite talks from the conference:

CryptPad is an end-to-end encrypted collaboration solution. It provides many of the features of Google and Microsoft cloud tools, but it is fully open source and data is stored encrypted. With something like this I’d probably trust the cloud more to store my data; for now I prefer to store sensitive data locally… https://cfp.pass-the-salt.org/pts2022/talk/LPMHUA/

I used containers when they were still called “FreeBSD jail” :-) So, I love the technology. Fedora is one of the pioneers of containerization on Linux. They have multiple operating systems based on a minimal read-only Fedora Linux that can be extended using containers. There are specific distributions targeting everything from IoT through desktops to servers. https://cfp.pass-the-salt.org/pts2022/talk/MTLGWL/

sslh is something I’m planning on trying. It allows servicing SSH and HTTPS from the same port. Many places block access to port 22, or even limit access to HTTPS. Using sslh can help in this situation. https://cfp.pass-the-salt.org/pts2022/talk/XTBQ73/

I have been using Suricata for many years. It is not really my job, I only use it out of curiosity, but it’s still a lot of fun. Especially because Suricata produces JSON formatted log messages, and syslog-ng is pretty good at working with JSON formatted logs. You can read my blog about working with Suricata logs in syslog-ng at https://www.syslog-ng.com/community/b/blog/posts/analyze-your-suricata-logs-in-real-time-using-syslog-ng. At the conference I attended both a talk and a workshop on Suricata: https://cfp.pass-the-salt.org/pts2022/talk/AGLDYH/ and https://cfp.pass-the-salt.org/pts2022/talk/BNNNQX/

As usual, one of my favorite talks came from Xavier Mertens. One of his earlier talks inspired the in-list() function of syslog-ng. This year, he talked about Cyberchef, a tool used to decode exotic data formats. Luckily, it’s not that often that I would need anything like this, but sometimes it could come in handy when trying to figure out what is hiding in my e-mails. https://cfp.pass-the-salt.org/pts2022/talk/8NDEN8/

Summary

I hope to be back next year again :-)
