My gdk-pixbuf braindump

I want to write a braindump on the stuff that I remember from gdk-pixbuf's history. There is some talk about replacing it with something newer; hopefully this history will show some things that worked, some that didn't, and why.

The beginnings

Gdk-pixbuf started as a replacement for Imlib, the image loading and rendering library that GNOME used in its earliest versions. Imlib came from the Enlightenment project; it provided an easy API around the idiosyncratic libungif, libjpeg, libpng, etc., and it maintained decoded images in memory with a uniform representation. Imlib also worked as an image cache for the Enlightenment window manager, which made memory management very inconvenient for GNOME.

Imlib worked well as a "just load me an image" library. It showed that a small, uniform API to load various image formats into a common representation was desirable. And in those days, hiding all the complexities of displaying images in X was very important indeed.

The initial API

Gdk-pixbuf replaced Imlib, and added two important features: reference counting for image data, and support for an alpha channel.

Gdk-pixbuf appeared with support for RGB(A) images. And although in theory it was possible to grow the API to support other representations, GdkColorspace never acquired anything other than GDK_COLORSPACE_RGB, and the bits_per_sample argument to some functions only ever supported the value 8. The presence or absence of an alpha channel was indicated with a gboolean argument in conjunction with that single GDK_COLORSPACE_RGB value; we didn't have something like cairo_format_t, which actually specifies the pixel format in single enum values.

While all the code in gdk-pixbuf carefully checks that those conditions are met (RGBA at 8 bits per channel), some applications inadvertently assume that that is the only possible case, and would get into trouble really fast if gdk-pixbuf ever started returning pixbufs with different color spaces or depths.

One can still see the battle between bilevel-alpha vs. continuous-alpha in this enum:

    typedef enum
    {
      GDK_PIXBUF_ALPHA_BILEVEL,
      GDK_PIXBUF_ALPHA_FULL
    } GdkPixbufAlphaMode;

Fortunately, only the "render this pixbuf with alpha to an Xlib drawable" functions take values of this type: before the Xrender days, it was a Big Deal to draw an image with alpha to an X window, and applications often opted to use a bitmask instead, even if they had jagged edges as a result.

Pixel formats

The only pixel format that ever got implemented was unpremultiplied RGBA on all platforms. Back then I didn't understand premultiplied alpha! Also, the GIMP followed that scheme, and copying it seemed like the easiest thing.

After gdk-pixbuf, libart also copied that pixel format, I think.

But later we got Cairo, Pixman, and all the Xrender stack. These prefer premultiplied ARGB. Moreover, Cairo prefers it if each pixel is actually a 32-bit value, with the ARGB values inside it in platform-endian order. So if you look at a memory dump, a Cairo pixel looks like BGRA on a little-endian box, while it looks like ARGB on a big-endian box.

Every time we paint a GdkPixbuf to a cairo_t, there is a conversion from unpremultiplied RGBA to premultiplied, platform-endian ARGB. I talked a bit about this in "Reducing the number of image copies in GNOME".
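To make that conversion concrete, here is a minimal sketch in plain C of what turning unpremultiplied R,G,B,A bytes into premultiplied 32-bit ARGB values involves. The function names and the round-to-nearest scheme are my own assumptions for illustration; this is not the code that gdk-pixbuf, GDK, or Cairo actually run.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Multiply a color channel by alpha, i.e. (c * a) / 255 rounded to nearest.
 * The rounding trick is an assumption; real code may round differently. */
static uint8_t premultiply_channel(uint8_t c, uint8_t a)
{
    unsigned t = (unsigned) c * a + 0x80;
    return (uint8_t) ((t + (t >> 8)) >> 8);
}

/* Convert n_pixels of unpremultiplied R,G,B,A bytes (memory order) into
 * premultiplied ARGB stored as 32-bit values. Because each pixel is
 * written as a uint32_t, the byte order in memory follows the platform's
 * endianness, as described above. */
static void rgba_row_to_argb32(const uint8_t *src, uint32_t *dst, size_t n_pixels)
{
    assert(src != NULL && dst != NULL);

    for (size_t i = 0; i < n_pixels; i++) {
        uint8_t r = src[4 * i + 0];
        uint8_t g = src[4 * i + 1];
        uint8_t b = src[4 * i + 2];
        uint8_t a = src[4 * i + 3];

        dst[i] = ((uint32_t) a << 24)
               | ((uint32_t) premultiply_channel(r, a) << 16)
               | ((uint32_t) premultiply_channel(g, a) << 8)
               |  (uint32_t) premultiply_channel(b, a);
    }
}
```

A half-transparent pure red pixel (255, 0, 0, 128) comes out as 0x80800000: the red channel has been scaled down by the alpha value, which is exactly the work that gets repeated every time a pixbuf is painted.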

The loading API

The public loading API in gdk-pixbuf, and its relationship to loader plug-ins, evolved in interesting ways.

At first the public API and loaders only implemented load_from_file: you gave the library a FILE * and it gave you back a GdkPixbuf. Back then we didn't have a robust MIME sniffing framework in the form of a library, so gdk-pixbuf got its own. This lives in the mostly-obsolete GdkPixbufFormat machinery; it even has its own little language for sniffing file headers! Nowadays we do most MIME sniffing with GIO.
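For readers who have never seen header sniffing, the idea can be sketched in a few lines of plain C. The MagicPattern structure and its two mask characters below are a hypothetical simplification that I made up for illustration; they are not gdk-pixbuf's actual pattern syntax. Only the file signatures themselves (PNG, GIF, RIFF/WEBP) are the real, well-known ones.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical pattern: `prefix` holds the expected leading bytes and
 * `mask` has the same length, with ' ' meaning "this byte must match"
 * and 'x' meaning "ignore this byte". */
typedef struct {
    const char *prefix;
    const char *mask;
    const char *mime;
} MagicPattern;

static int pattern_matches(const MagicPattern *p,
                           const unsigned char *buf, size_t len)
{
    size_t n = strlen(p->mask);

    if (len < n)
        return 0; /* not enough bytes to decide */

    for (size_t i = 0; i < n; i++) {
        if (p->mask[i] == ' ' && buf[i] != (unsigned char) p->prefix[i])
            return 0;
    }
    return 1;
}

/* Returns a MIME type string, or NULL if no pattern matches. */
static const char *sniff_mime(const unsigned char *buf, size_t len)
{
    static const MagicPattern patterns[] = {
        { "\x89PNG\r\n\x1a\n", "        ",     "image/png"  },
        { "GIF8",              "    ",         "image/gif"  },
        { "RIFFxxxxWEBP",      "    xxxx    ", "image/webp" },
    };

    for (size_t i = 0; i < sizeof patterns / sizeof patterns[0]; i++) {
        if (pattern_matches(&patterns[i], buf, len))
            return patterns[i].mime;
    }
    return NULL;
}
```

The 'x' positions in the RIFF pattern skip the four size bytes that sit between the two fixed parts of the signature, which is the kind of thing a little pattern language is needed for.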

After the initial load_from_file API... I think we got progressive loading first, and animation support afterwards.

Progressive loading

This is where the calling program feeds chunks of bytes to the library, and at the end a fully-formed GdkPixbuf comes out, instead of having a single "read a whole file" operation.

We conflated this with a way to get updates on how the image area gets modified as the data gets parsed. I think we wanted to support the case of a web browser, which downloads images slowly over the network, and gradually displays them as they are downloaded. In 1998, images downloading slowly over the network was a real concern!

It took a lot of very careful work to convert the image loaders, which parsed a whole file at a time, into loaders that could maintain some state between each time that they got handed an extra bit of buffer.
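To give a flavor of what "maintaining state between chunks" means, here is a toy push-style loader in plain C. Everything in it is hypothetical: the made-up format is an 8-byte header (big-endian width and height) followed by width * height * 4 bytes of pixel data, and the ToyLoader names are mine, not gdk-pixbuf's. The point is only that the loader must remember how far it got, because a chunk boundary can fall anywhere, even in the middle of the header.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef void (*SizePreparedFunc)(uint32_t width, uint32_t height, void *user_data);

/* State that survives between calls to toy_loader_write(). */
typedef struct {
    uint8_t header[8];
    size_t header_filled;       /* how many header bytes we have so far */
    uint32_t width, height;
    uint64_t pixel_bytes_seen;  /* a real loader would decode these */
    SizePreparedFunc size_prepared;
    void *user_data;
} ToyLoader;

/* Example callback target: records what the loader reported. */
typedef struct {
    int calls;
    uint32_t width, height;
} ToySize;

static void record_size(uint32_t width, uint32_t height, void *user_data)
{
    ToySize *s = user_data;
    s->calls++;
    s->width = width;
    s->height = height;
}

static uint32_t read_be32(const uint8_t *p)
{
    return ((uint32_t) p[0] << 24) | ((uint32_t) p[1] << 16)
         | ((uint32_t) p[2] << 8) | (uint32_t) p[3];
}

static void toy_loader_init(ToyLoader *l, SizePreparedFunc cb, void *user_data)
{
    l->header_filled = 0;
    l->width = l->height = 0;
    l->pixel_bytes_seen = 0;
    l->size_prepared = cb;
    l->user_data = user_data;
}

/* Accept a chunk of any size, including one that splits the header. */
static void toy_loader_write(ToyLoader *l, const uint8_t *buf, size_t len)
{
    size_t i = 0;

    while (i < len && l->header_filled < 8) {
        l->header[l->header_filled++] = buf[i++];
        if (l->header_filled == 8) {
            l->width = read_be32(l->header);
            l->height = read_be32(l->header + 4);
            if (l->size_prepared)
                l->size_prepared(l->width, l->height, l->user_data);
        }
    }

    /* Whatever is left in this chunk is pixel data; here we only count it. */
    l->pixel_bytes_seen += len - i;
}

/* Returns nonzero if the stream was complete. */
static int toy_loader_close(const ToyLoader *l)
{
    return l->header_filled == 8
        && l->pixel_bytes_seen == (uint64_t) l->width * l->height * 4;
}
```

Real decoders are far harder to convert than this, since libjpeg or libpng state is much richer than a byte counter, but the shape is the same: init, write as many times as needed, close.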

It also sounded easy to implement the progressive updating API by simply emitting a signal that said, "this rectangular area got updated from the last read". It could handle the case of reading whole scanlines, or a few pixels, or even area-based updates for progressive JPEGs and PNGs.

The internal API for the image format loaders still keeps a distinction between the "load a whole file" API and the "load an image in chunks" API. Not all loaders got redone to simply just use the second one: io-jpeg.c still implements loading whole files by calling the corresponding libjpeg functions. I think it could remove that code and use the progressive loading functions instead.

Animations

Animations: we followed the GIF model for animations, in which each frame overlays the previous one, and there's a delay set between each frame. This is not a video file; it's a hacky flipbook.

However, animations presented the problem that the whole gdk-pixbuf API was meant for static images, and now we needed to support multi-frame images as well.

We defined the "correct" way to use the gdk-pixbuf library as to actually try to load an animation, and then see if it is a single-frame image, in which case you can just get a GdkPixbuf for the only frame and use it.

Or, if you got an animation, that would be a GdkPixbufAnimation object, from which you could ask for an iterator to get each frame as a separate GdkPixbuf.

However, the progressive updating API never got extended to really support animations. So, we have awkward functions like gdk_pixbuf_animation_iter_on_currently_loading_frame() instead.

Necessary accretion

Gdk-pixbuf got support for saving just a few formats: JPEG, PNG, TIFF, ICO, and some of the formats that are implemented with the Windows-native loaders.

Over time gdk-pixbuf got support for preserving some metadata-ish chunks from formats that provide it: DPI, color profiles, image comments, hotspots for cursors/icons...

While an image is being loaded with the progressive loaders, there is a clunky way to specify that one doesn't want the actual size of the image, but another size instead. The loader can handle that situation itself, hopefully if an image format actually embeds different sizes in it. Or if not, the main loading code will rescale the full loaded image into the size specified by the application.

Historical cruft

GdkPixdata - a way to embed binary image data in executables, with a funky encoding. Nowadays it's just easier to directly store a PNG or JPEG or whatever in a GResource.

contrib/gdk-pixbuf-xlib - to deal with old-style X drawables. Hopefully mostly unused now, but there's a good number of mostly old, third-party software that still uses gdk-pixbuf as an image loader and renderer to X drawables.

gdk-pixbuf-transform.h - Gdk-pixbuf had some very high-quality scaling functions, which the original versions of EOG used for the core of the image viewer. Nowadays Cairo is the preferred way of doing this, since it not only does scaling, but general affine transformations as well. Did you know that gdk_pixbuf_composite_color takes 17 arguments, and it can composite an image with alpha on top of a checkerboard? Yes, that used to be the core of EOG.

Debatable historical cruft

gdk_pixbuf_get_pixels(). This lets the program look into the actual pixels of a loaded pixbuf, and modify them. Gdk-pixbuf just did not have a concept of immutability.

Back in GNOME 1.x / 2.x, when it was fashionable to put icons beside menu items, or in toolbar buttons, applications would load their icon images, and modify them in various ways before setting them onto the corresponding widgets. Some things they did: load a colorful icon, desaturate it for "insensitive" command buttons or menu items, or simulate desaturation by compositing a 1x1-pixel checkerboard on the icon image. Or lighten the icon and set it as the "prelight" one onto widgets.

The concept of "decode an image and just give me the pixels" is of course useful. Image viewers, image processing programs, and all those, of course need this functionality.

However, these days GTK would prefer to have a way to decode an image, and ship it as fast as possible to the GPU, without intermediaries. There is all sorts of awkward machinery in the GTK widgets that can consume either an icon from an icon theme, or a user-supplied image, or one of the various schemes for providing icons that GTK has acquired over the years.

It is interesting to note that gdk_pixbuf_get_pixels() was available pretty much since the beginning, but it was not until much later that we got gdk_pixbuf_get_pixels_with_length(), the "give me the guchar * buffer and also its length" function, so that calling code has a chance of actually checking for buffer overruns. (... and it is one of the broken "give me a length" functions that returns a guint rather than a gsize. There is a better gdk_pixbuf_get_byte_length() which actually returns a gsize, though.)

Problems with mutable pixbufs

The main problem is that as things are right now, we have no flexibility in changing the internal representation of image data to make it better for current idioms: GPU-specific pixel formats may not be unpremultiplied RGBA data.

We have no API to say, "this pixbuf has been modified", akin to cairo_surface_mark_dirty(): once an application calls gdk_pixbuf_get_pixels(), gdk-pixbuf or GTK have to assume that the data will be changed and they have to re-run the pipeline to send the image to the GPU (format conversions? caching? creating a texture?).

Also, ever since the beginnings of the gdk-pixbuf API, we had a way to create pixbufs from arbitrary user-supplied RGBA buffers: the gdk_pixbuf_new_from_data functions. One problem with this scheme is that memory management of the buffer is up to the calling application, so the resulting pixbuf isn't free to handle those resources as it pleases.

A relatively recent addition is gdk_pixbuf_new_from_bytes(), which takes a GBytes buffer instead of a random guchar *. When a pixbuf is created that way, it is assumed to be immutable, since a GBytes is basically a shared reference into a byte buffer, and it's just easier to think of it as immutable. (Nothing in C actually enforces immutability, but the API indicates that convention.)

Internally, GdkPixbuf actually prefers to be created from a GBytes. It will downgrade itself to a guchar * buffer if something calls the old gdk_pixbuf_get_pixels(); in the best case, that will just take ownership of the internal buffer from the GBytes (if the GBytes has a single reference count); in the worst case, it will copy the buffer from the GBytes and retain ownership of that copy. In either case, when the pixbuf downgrades itself to pixels, it is assumed that the calling application will modify the pixel data.
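The "downgrade" logic is easier to see in code. What follows is a toy model in plain C, with a hand-rolled SharedBytes type standing in for GBytes and a ToyPixbuf standing in for GdkPixbuf; all the names are hypothetical and this is not the actual gdk-pixbuf implementation. It only shows the two cases described above: steal the buffer when we hold the last reference, copy it otherwise.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Stand-in for GBytes: a reference-counted byte buffer. */
typedef struct {
    int ref_count;
    size_t len;
    unsigned char *data;
} SharedBytes;

static SharedBytes *shared_bytes_new(const unsigned char *src, size_t len)
{
    SharedBytes *b = malloc(sizeof *b);
    assert(b != NULL);
    b->ref_count = 1;
    b->len = len;
    b->data = malloc(len ? len : 1);
    assert(b->data != NULL);
    memcpy(b->data, src, len);
    return b;
}

static void shared_bytes_unref(SharedBytes *b)
{
    if (--b->ref_count == 0) {
        free(b->data);
        free(b);
    }
}

/* Stand-in for GdkPixbuf: immutable while `bytes` is set, mutable
 * once it has been downgraded to `pixels`. */
typedef struct {
    SharedBytes *bytes;
    unsigned char *pixels;
    size_t len;
} ToyPixbuf;

/* Takes its own reference on the shared buffer. */
static ToyPixbuf toy_pixbuf_new_from_bytes(SharedBytes *b)
{
    ToyPixbuf p = { b, NULL, b->len };
    b->ref_count++;
    return p;
}

/* The first call downgrades the pixbuf; later calls return the same buffer. */
static unsigned char *toy_pixbuf_get_pixels(ToyPixbuf *p)
{
    if (p->pixels == NULL) {
        SharedBytes *b = p->bytes;

        if (b->ref_count == 1) {
            /* Best case: we are the only owner, so steal the buffer. */
            p->pixels = b->data;
            free(b);
        } else {
            /* Worst case: someone else still holds it, so copy. */
            p->pixels = malloc(p->len ? p->len : 1);
            assert(p->pixels != NULL);
            memcpy(p->pixels, b->data, p->len);
            shared_bytes_unref(b);
        }
        p->bytes = NULL;
    }
    return p->pixels;
}

static void toy_pixbuf_clear(ToyPixbuf *p)
{
    if (p->bytes != NULL)
        shared_bytes_unref(p->bytes);
    free(p->pixels);
    p->bytes = NULL;
    p->pixels = NULL;
}
```

Note that once toy_pixbuf_get_pixels() has run there is no way back: the toy pixbuf, like the real one, has to assume from then on that the caller may scribble on the buffer at any time.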

What would immutable pixbufs look like?

I mentioned this a bit in "Reducing Copies". The loaders in gdk-pixbuf would create immutable pixbufs, with an internal representation that is friendly to GPUs. In the proposed scheme, that internal representation would be a Cairo image surface; it can be something else if GTK/GDK eventually prefer a different way of shipping image data into the toolkit.

Those pixbufs would be immutable. In true C fashion we can call it undefined behavior to change the pixel data (say, an app could request gimme_the_cairo_surface and tweak it, but that would not be supported).

I think we could also have a "just give me the pixels" API, and a "create a pixbuf from these pixels" one, but those would be one-time conversions at the edge of the API. Internally, the pixel data that actually lives inside a GdkPixbuf would remain immutable, in some preferred representation, which is not necessarily what the application sees.

What worked well

A small API to load multiple image formats, and paint the images easily to the screen, while handling most of the X awkwardness semi-automatically, was very useful!

A way to get and modify pixel data: applications clearly like doing this. We can formalize it as an application-side thing only, and keep the internal representation immutable and in a format that can evolve according to the needs of the internal API.

Pluggable loaders, up to a point. Gdk-pixbuf doesn't support all the image formats in the world out of the box, but it is relatively easy for third-parties to provide loaders that, once installed, are automatically usable for all applications.

What didn't work well

Having effectively two pixel formats supported, and nothing else: gdk-pixbuf does packed RGB and unpremultiplied RGBA, and that's it. This isn't completely terrible: applications which really want to know about indexed or grayscale images, or high bit-depth ones, are probably specialized enough that they can afford to have their own custom loaders with all the functionality they need.

Pluggable loaders, up to a point. While it is relatively easy to create third-party loaders, installation is awkward from a system's perspective: one has to run the script to regenerate the loader cache, there are more shared libraries running around, and the loaders are not sandboxed by default.

I'm not sure if it's worthwhile to let any application read "any" image format if gdk-pixbuf supports it. If your word processor lets you paste an image into the document... do you want it to use gdk-pixbuf's limited view of things and include a high bit-depth image with its probably inadequate conversions? Or would you rather do some processing by hand to ensure that the image looks as good as it can, in the format that your word processor actually supports? I don't know.

The API for animations is very awkward. We don't even support APNG... but honestly I don't recall actually seeing one of those in the wild.

The progressive loading API is awkward. The "feed some bytes into the loader" part is mostly okay; the "notify me about changes to the pixel data" is questionable nowadays. Web browsers don't use it; they implement their own loaders. Even EOG doesn't use it.

I think most code that actually connects to GdkPixbufLoader's signals only uses the size-prepared signal, the one that gets emitted soon after reading the image headers, when the loader gets to know the dimensions of the image. Apps sometimes use this to say, "this image is W*H pixels in size", but don't actually decode the rest of the image.

The gdk-pixbuf model of static images, or GIF animations, doesn't work well for multi-page TIFFs. I'm not sure if this is actually a problem. Again, applications with actual needs for multi-page TIFFs are probably specialized enough that they will want a full-featured TIFF loader of their own.

Awkward architectures

Thumbnailers

The thumbnailing system has slowly been moving towards a model where we actually have thumbnailers specific to each file format, instead of just assuming that we can dump any image into a gdk-pixbuf loader.

If we take this all the way, we would be able to remove some weird code in, for example, the JPEG pixbuf loader. Right now it supports loading images at a size that the calling code requests, not only at the "natural" size of the JPEG. The thumbnailer can say, "I want to load this JPEG at 128x128 pixels" or whatever, and in theory the JPEG loader will do the minimal amount of work required to do that. It's not 100% clear to me if this is actually working as intended, or if we downscale the whole image anyway.

We had a distinction between in-process and out-of-process thumbnailers, and it had to do with the way pixbuf loaders are used; I'm not sure if they are all out-of-process and sandboxed now.

Non-raster data

There is a gdk-pixbuf loader for SVG images which uses librsvg internally, but only in a very basic way: it simply loads the SVG at its preferred size. Librsvg jumps through some hoops to compute a "preferred size" for SVGs, as not all of them actually indicate one. The SVG model would rather have the renderer say that the SVG is to be inserted into a rectangle of certain width/height, and scaled/positioned inside the rectangle according to some other parameters (i.e. like one would put it inside an HTML document, with a preserveAspectRatio attribute and all that). GNOME applications historically operated with a different model, one of "load me an image, I'll scale it to whatever size, and paint it".

This gdk-pixbuf loader for SVG files gets used for the SVG thumbnailer, or more accurately, the "throw random images into a gdk-pixbuf loader" thumbnailer. It may be better/cleaner to have a specific thumbnailer for SVGs instead.

Even EOG, our by-default image viewer, doesn't use the gdk-pixbuf loader for SVGs: it actually special-cases them and uses librsvg directly, to be able to load an SVG once and re-render it at different sizes if one changes the zoom factor, for example.

GTK reads its SVG icons... without using librsvg... by assuming that librsvg installed its gdk-pixbuf loader, so it loads them as any normal raster image. This is kind of dirty, but I can't quite pinpoint why. I'm sure it would be convenient for icon themes to ship a single SVG with tons of icons, and some metadata on their ids, so that GTK could pick them out of the SVG file with rsvg_render_cairo_sub() or something. Right now icon theme authors are responsible for splitting out those huge SVGs into many little ones, one for each icon, and I don't think that's their favorite thing in the world to do :)

Exotic raster data

High bit-depth images... would you expect EOG to be able to load them? Certainly; maybe not with all the fancy conversions from a real RAW photo editor. But maybe this can be done as EOG-specific plugins, rather than as low in the platform as the gdk-pixbuf loaders?

(Same thing for thumbnailing high bit-depth images: the loading code should just provide its own thumbnailer program for those.)

Non-image metadata

The gdk_pixbuf_set_option / gdk_pixbuf_get_option family of functions is so that pixbuf loaders can set key/value pairs of strings onto a pixbuf. Loaders use this for comment blocks, or ICC profiles for color calibration, or DPI information for images that have it, or EXIF data from photos. It is up to applications to actually use this information.

It's a bit uncomfortable that gdk-pixbuf makes no promises about the kind of raster data it gives to the caller: right now it is raw RGB(A) data that is not gamma-corrected nor in any particular color space. It is up to the caller to see if the pixbuf has an ICC profile attached to it as an option. Effectively, this means that applications don't know if they are getting SRGB, or linear RGB, or what... unless they specifically care to look.

The gdk-pixbuf API could probably make promises: if you call this function you will get SRGB data; if you call this other function, you'll get the raw RGBA data and we'll tell you its colorspace/gamma/etc.

The various set_option / get_option pairs are also usable by the gdk-pixbuf saving code (up to now we have just talked about loaders). I don't know enough about how applications use the saving code in gdk-pixbuf... the thumbnailers use it to save PNGs or JPEGs, but other apps? No idea.

What I would like to see

Immutable pixbufs in a useful format. I've started work on this in a merge request; the internal code is now ready to take in different internal representations of pixel data. My goal is to make Cairo image surfaces the preferred, immutable, internal representation. This would give us a gdk_pixbuf_get_cairo_surface(), which pretty much everything that needs one reimplements by hand.

Find places that assume mutable pixbufs. To gradually deprecate mutable pixbufs, I think we would need to audit applications and libraries to find places that cause GdkPixbuf structures to degrade into mutable ones: basically, find callers of gdk_pixbuf_get_pixels() and related functions, see what they do, and reimplement them differently. Maybe they don't need to tint icons by hand anymore? Maybe they don't need icons anymore, given our changing UI paradigms? Maybe they are using gdk-pixbuf as an image loader only?

Reconsider the loading-updates API. Do we need the GdkPixbufLoader::area-updated signal at all? Does anything break if we just... not emit it, or just emit it once at the end of the loading process? (Caveat: keeping it unchanged more or less means that "immutable pixbufs" as loaded by gdk-pixbuf actually mutate while being loaded, and this mutation is exposed to applications.)

Sandboxed loaders. While these days gdk-pixbuf loaders prefer the progressive feed-it-bytes API, sandboxed loaders would maybe prefer a read-a-whole-file approach. I don't know enough about memfd or how sandboxes pass data around to know how either would work.

Move loaders to Rust. Yes, really. Loaders are security-sensitive, and while we do need to sandbox them, it would certainly be better to do them in a memory-safe language. There are already pure Rust-based image loaders: JPEG, PNG, TIFF, GIF, ICO. I have no idea how featureful they are. We can certainly try them with gdk-pixbuf's own suite of test images. We can modify them to add hooks for things like a size-prepared notification, if they don't already have a way to read "just the image headers".

Rust makes it very easy to plug in micro-benchmarks, fuzz testing, and other modern amenities. These would be perfect for improving the loaders.

I started sketching a Rust backend for gdk-pixbuf loaders some months ago, but there's nothing useful yet. One mismatch between gdk-pixbuf's model for loaders, and the existing Rust codecs, is that Rust codecs generally take something that implements the Read trait: a blocking API to read bytes from abstract sources; it's a pull API. The gdk-pixbuf model is a push API: the calling code creates a loader object, and then pushes bytes into it. The gdk-pixbuf convenience functions that take a GInputStream basically do this:

    loader = gdk_pixbuf_loader_new (...);

    while (more_bytes) {
        n_read = g_input_stream_read (stream, buffer, ...);
        gdk_pixbuf_loader_write (loader, buffer, n_read, ...);
    }

    gdk_pixbuf_loader_close (loader);

However, this cannot be flipped around easily. We could probably use a second thread (easy, safe to do in Rust) to make the reader/decoder thread block while the main thread pushes bytes into it.

Also, I don't know how the Rust bindings for GIO present things like GInputStream and friends, with our nice async cancellables and all that.

Deprecate animations? Move that code to EOG, just so one can look at memes in it? Do any "real apps" actually use GIF animations for their UI?

Formalize promises around returned color profiles, gamma, etc. As mentioned above: have an "easy API" that returns SRGB, and a "raw API" that returns the ARGB data from the image, plus info on its ICC profile, gamma, or any other info needed to turn this into a "good enough to be universal" representation. (I think all the Apple APIs that pass colors around do so with an ICC profile attached, which seems... pretty much necessary for correctness.)

Remove the internal MIME-sniffing machinery. And just use GIO's.

Deprecate the crufty/old APIs in gdk-pixbuf.

Scaling/transformation, compositing, GdkPixdata, gdk-pixbuf-csource, all those. Pixel crunching can be done by Cairo; the others are better done with GResource these days.

Figure out if we want blessed codecs; fix thumbnailers. Link those loaders statically, unconditionally. Exotic formats can go in their own custom thumbnailers. Figure out if we want sandboxed loaders for everything, or just for user-side images (not ones read from the trusted system installation).

Have GTK4 communicate clearly about its drawing model. I think we are having a disconnect between the GUI chrome, which is CSS/GPU friendly, and graphical content generated by applications, which by default right now is done via Cairo. And having Cairo as a to-screen and to-printer API is certainly very convenient! You Wouldn't Print a GUI, but certainly you would print a displayed document.

It would also be useful for GTK4 to actually define what its preferred image format is if it wants to ship it to the GPU with as little work as possible. Maybe it's a Cairo image surface? Maybe something else?

Conclusion

We seem to change imaging models every ten years or so. Xlib, then Xrender with Cairo, then GPUs and CSS-based drawing for widgets. We've gone from trusted data on your local machine, to potentially malicious data that rains from the Internet. Gdk-pixbuf has spanned all of these periods so far, and it is due for a big change.

My open source career

- You have to start somewhere: My first patch

- You need a community to support you: 15 years of KDE

- Don’t do it for the money: Don’t sell free software cheap

openSUSE.Asia Summit

openSUSE.Asia Summit is an annual conference that has been held since 2014, each time in a different Asian city. Although it is a really successful event, which plays a really important role in spreading openSUSE all around the world, it is not an event everybody in openSUSE knows about. Because of that I would like to tell you about my experience attending the last openSUSE.Asia Summit, which took place on August 10-12 in Taipei, Taiwan.

Picture by COSCUP under CC BY-SA from https://flic.kr/p/2ay7hBD

openSUSE.Asia Summit 2018

This year, openSUSE.Asia was co-hosted with COSCUP, a major open source conference held annually in Taiwan since 2006, and GNOME.Asia. It was an incredibly well organized conference with a really involved community and a lot of volunteers.

Community day

On August 11, we had the openSUSE community day at the SUSE office. We had lunch together and those who like beer (not me :stuck_out_tongue_winking_eye:) could try the openSUSE Taiwanese beer. We also had some nice conversations. I especially liked the proposals made during the "discussion with the Board" session to get both more students and mentors involved in the mentoring in Asia and to solve the translation problems. In the evening, we joined the COSCUP and GNOME community for the welcome party.

Talks

The conference itself took place on Saturday and Sunday. The first day was especially crowded (with 1400 people registered! :scream:). On both days, from early in the morning, there were several talks running simultaneously, some of them in Chinese, about a wide range of FOSS topics. Apart from the opening and closing, I gave a talk about mentoring and how I started in open source. I am really happy that there were a lot of young people in the room, many of them not involved (yet) in openSUSE. I hope I managed to inspire some of them! :wink: In fact the conference was full of inspiring talks, notably:

- The keynote by Audrey Tang, Taiwan’s Digital Minister, full of quotes to reflect on: "When we see internet of things, let’s make it an internet of beings."

- The emotive talk "We are openSUSE.Asia" by Sunny from Beijing, in which she explained how her desire to spread open source in Asia led her to organise the first openSUSE.Asia Summit.

- “The bright future of SUSE and openSUSE” by Ralf Flaxa, SUSE’s president of engineering, which surprised me because it was really different from his other talks that I have attended.

- Simon's motivating talk about his experience in open source, packaging and the board activities.

Pictures by COSCUP under CC BY-SA from https://flic.kr/p/29sJhHq and https://flic.kr/p/LPGjaF respectively

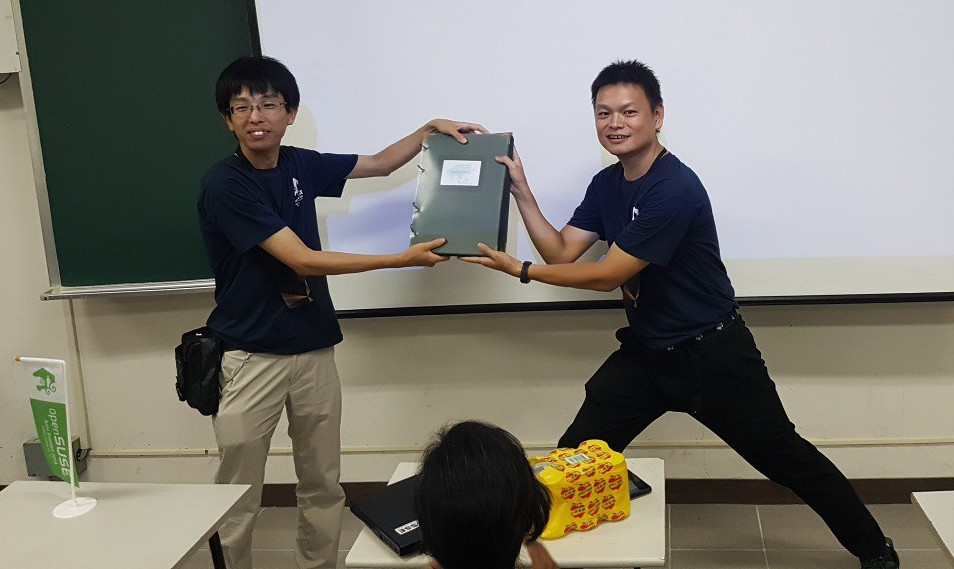

Apart from the talks, we had the BoF sessions on Saturday evening, in which we could get together surrounded by A LOT of pizza. In the session, there was a presentation of the two proposals for next year's openSUSE.Asia Summit: India, with a really well prepared proposal, and Indonesia, whose team organized the biggest openSUSE.Asia Summit in 2016. There was also the exchange of the openSUSE Asia photo album, preserving the tradition that every organizing team should add some more pages to this album. It is getting heavy! :muscle:

One day tour

The conference ended with another tradition, the one day tour for speakers and organizers. We went to the National Palace Museum, to the Taipei 101 tower and to eat dumplings and other typical food. It was a great opportunity to get together in a different and fun environment.

See you next year

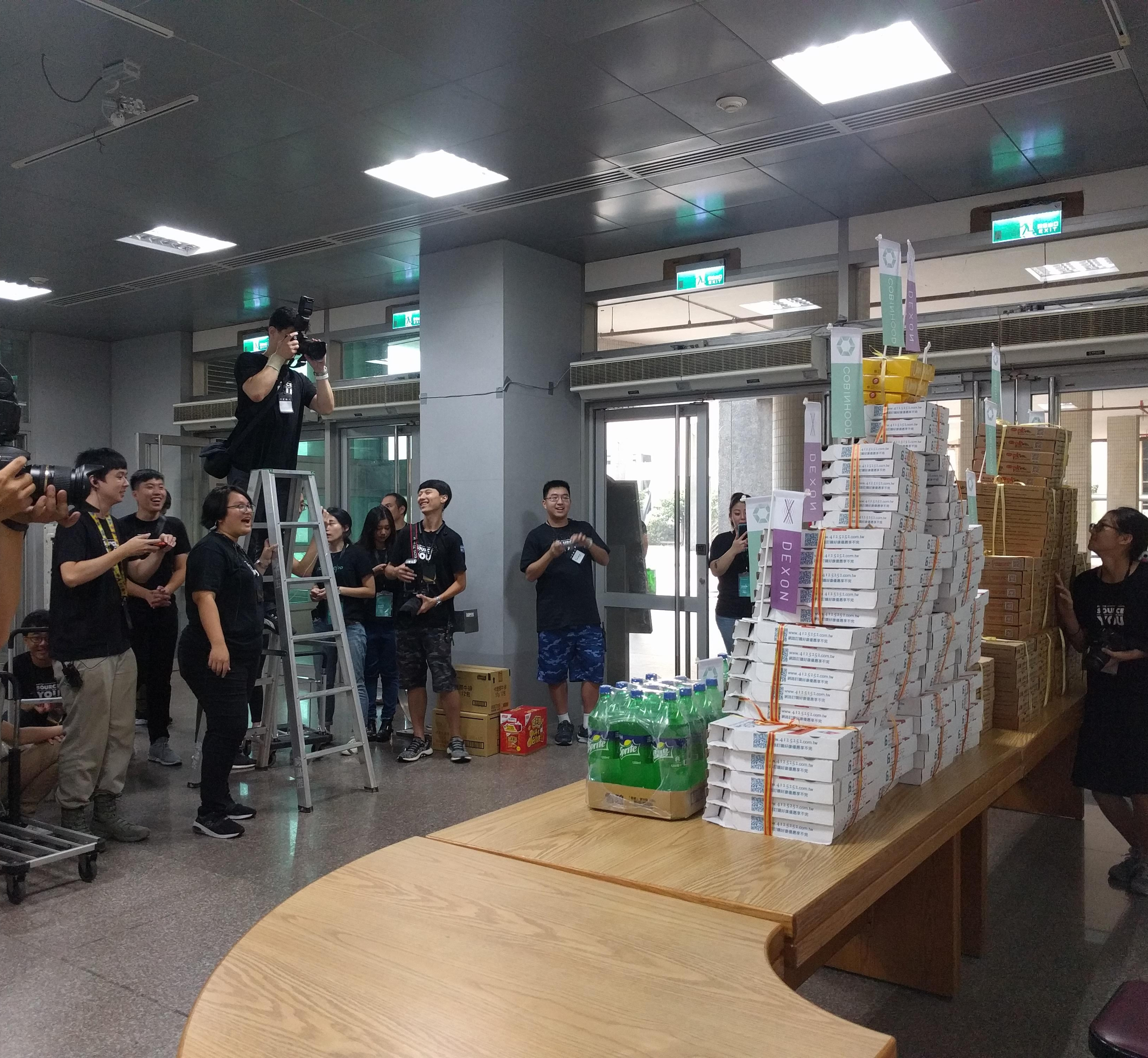

As you can see, we had a lot of fun at openSUSE.Asia Summit 2018. From a more personal point of view, I really enjoyed meeting the Chinese mentors I normally only write emails to. I also had the chance to meet some members of the GNOME board, exchange experiences, problems and solutions with them, and discuss ways in which both communities can keep collaborating in the future. The conference was full of fun moments like seeing a pyramid of pizza (and a hundred people taking pictures of it) and being amused by someone wearing a Geeko costume in 35 degrees and 70% humidity. :hotsprings:

Geeko’s picture by COSCUP under CC BY-SA from https://flic.kr/p/2atN6KE

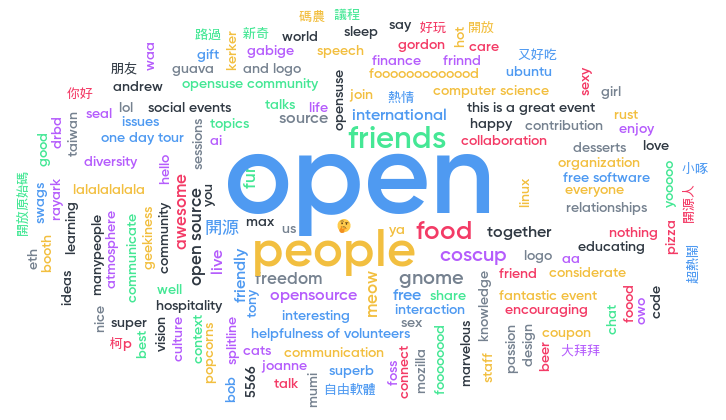

To finish, this is what attendees said they liked the most about the conference in the closing session:

See you next year!

About me

My name is Ana and I am a mentor and organization admin for openSUSE at GSoC, an openSUSE Board member, an Open Build Service Frontend Engineer at SUSE and an open source contributor in projects inside and outside openSUSE.

Linux ACL Masks Fun

So I am working on a Nextcloud package at the moment. Something that does not have such brain dead default permissions as the docs give you. To give access to all users who need the files, I used ACLs. This worked quite fine. Until during one update … suddenly all my files got executable permissions.

I ran rpm --setperms nextcloud to reset the permissions of all files and then I reran my nextcloud-fix-permissions. Nothing. All was good again. During the next update … broken again. I fixed the permissions manually by removing the x bit and then rerunning my script … bugged again. Ok time to dig.

Review of the HP Pavilion Power 580-146nd

Because of the incredible bargains during the Steam summer / winter sales, I have acquired 42 games over the last 6 years. My old openSUSE system was never able to play these games in a decent manner. Intel HD Graphics 4000 will only get you so far… I have looked into building a new AMD PC, which would allow me to run Linux / Steam games at high settings and 1080p. Recently I noticed a promotion for the HP Pavilion Power 580 desktop, equipped with a Ryzen 5 1400 processor and with a Radeon RX 580 graphics card. Heavily reduced in price. So I took the plunge and got myself a brand new desktop PC.

Design and hardware

The case of the HP Pavilion Power desktop looks pretty good. It's a very small case: 36.4 cm in height, 16.5 cm in width and 37.8 cm in depth. The front has some sharp triangular edges that make the desktop pleasant to look at.

One issue with the case is airflow. There are 2 side vents in this case. The fan in the back of the case blows in cool air. This is used by the processor cooler, and the hot air immediately moves out of the case, which means the CPU is adequately cooled. The graphics card also gets cool air from below, but dispenses this air to an area where it cannot easily get out of the case. It needs to find its way out towards the top side vent located far to the left. It would have been better if there was an air vent directly in between the graphics card and the power supply.

Specifications and benchmarks

The specifications:

- AMD Ryzen 5 1400 CPU

- AMD Radeon RX 580 GPU

- 16 GB DDR4 RAM

- 128 GB M.2 SSD

- 1 TB 7200rpm HDD

- 1 x HDMI port

- 3 x DisplayPort

- 1 x Type C USB 3.0 port

- 3 x Type A USB 3.0 port

- 2 x Type A USB 2.0 port

- 1 x DVD re-writer

- 1 x SD card reader

- 2 x Audio-in jack

- 1 x Audio-out jack

- 1 x Microphone jack

- 1 x LAN port

- 1 x 300W 80 plus bronze PSU

On the front side, there is a DVD re-writer, an SD card reader, a USB 3.0 type-C port, a USB 3.0 type-A port and a headphone jack. So this case is very well equipped from the front to handle all your media. The backside of the case is less prepared for the job. It has 2 USB 3.0 ports and 2 USB 2.0 ports. It has the regular ports for audio in, audio out and microphone. The graphics card provides 1 HDMI port and 3 DisplayPort outputs. And it has an Ethernet port. I think that there are not enough USB ports on the backside. I have a wired keyboard and mouse that take up the two USB 2.0 ports, which leaves me with 2 USB 3.0 ports for everything else. To combat this issue, I have connected a TP-Link UH700, which provides me with 7 additional USB 3.0 ports.

The power supply is a 300W unit and it's 80 plus bronze rated. It is sufficient, but I would be more comfortable with a 450W or a 550W unit. That would give the machine a bit of headroom.

For benchmarks, I always look at the benchmark scores on the websites cpubenchmark.net and videocardbenchmark.net. In the table below I compare the HP Pavilion Power 580-146nd with my previous PC, the Zotac ZBOX Sphere OI520. The CPU of my new desktop is 2.5 times faster than the CPU in my previous PC. The GPU is a whopping 18 times faster. So this new PC will provide me with a big increase in overall performance.

| | Zotac ZBOX Sphere OI520 | HP Pavilion Power 580-146nd |
|---|---|---|
| Benchmark score CPU | 3287 | 8410 |
| Benchmark score GPU | 455 | 8402 |

Installing openSUSE

I have wiped Windows from the system and installed openSUSE Leap 15. The installation went without a problem. However, there were some annoyances to resolve directly after installation.

The biggest one is sound. I hit an issue where the speakers ‘pop’ every time that a new video (YouTube) or new audio file (Amarok) starts playing. This loud ‘pop’ was very annoying and not good for my speakers. I am not the first person to encounter this issue, as it was also posted on the openSUSE forums. The proposed solution by John Winchester resolved this issue for me. I now only hear this ‘pop’ during the startup of my PC.

Another issue is that my Bluetooth adapter is not recognized. I have installed the bluez-auto-enable-devices, bluez-firmware and bluez-tools packages. If I run the command “sudo rfkill list all”, it does find the Bluetooth adapter and indicates that it is not Soft blocked and not Hard blocked. But according to the KDE Plasma 5 desktop applet, no Bluetooth adapters have been found.

Furthermore the amdgpu-pro driver is not yet available for openSUSE Leap 15. The open source AMD driver is performing okay. But I did hit an issue where I cannot get the display to turn on again, a few hours after it was (automatically) switched off. This could very well be related to the graphics driver. I have now configured my PC to suspend after 3 hours. When I restart the PC from this suspended state, I don’t encounter this problem.

Gaming

This is the area where my new PC excels! I can run all games on very high settings and get frame rates higher than 60 Frames per Second. I would have expected some higher frame rates from the open source games that I tested. Some of these games are not very graphically intensive. But even with the RX 580, the frame rates are just good, but not great. The exception is Xonotic, a super fun FPS that not only looks great, but shows very high frame rates. And I must say that SuperTuxKart looks amazing with all settings at the highest level (6). I have listed the frame rates that I see on average below:

- OpenArena – 90 FPS

- Urbanterror – 125 FPS

- Xonotic – 90 to 200 FPS

- SuperTuxKart – 60 FPS

- SpeedDreams – 25 to 150 FPS

I can now play games on Steam that I couldn’t play before. I play these games on very high settings and 1080p. The frame rates are good, certainly considering the advanced graphics of these games. I have listed the frame rates that I see on average below:

- Rise of the Tomb Raider – 50 FPS

- BioShock Infinite – 90 FPS

- Half Life 2 – 90 to 120 FPS

- Road Redemption – 60 FPS

- Euro Truck Simulator 2 – 60 FPS

Multitasking

The HP Pavilion Power 580-146nd is a great multitasking machine. For fun, I tried opening LibreOffice Writer, LibreOffice Draw, Darktable, Amarok, Dolphin, Dragon Player, GIMP and Steam at the same time while playing music in Amarok. Programs opened instantly and the CPU cores / threads used very little of the available CPU power. In the future, I will use this desktop for testing various Linux distributions on VirtualBox, for developing photos with Darktable and for making movies with Kdenlive. So I will use a bit more of the available power. For ‘normal’ desktop usage this machine has plenty of power to spare.

Conclusion

The HP Pavilion Power 580-146nd packs a lot of power for a price that is hard to match when building your own PC. However, it might surprise you that I would not recommend this exact machine to others. The reason is that Nvidia cards are still better supported on openSUSE Leap 15. I feel that for most people, the HP Pavilion Power 580-037nd would be the better choice. This machine features an Intel i5-7400 CPU, an Nvidia GeForce GTX 1060 GPU and has 8 GB of RAM. The pricing is very comparable. And the outside of the machine is the same.

For me personally, this machine was absolutely the right choice. I am very interested in the AMD Ryzen CPUs. I also like AMD’s strategy to develop an open source driver for its GPUs (amdgpu) for the Linux kernel. I was looking for an AMD machine and this HP Pavilion Power 580-146nd fits the bill and then some.

Published on: 3 September 2018

Dive into Erlang

I recently had a chance to learn Erlang. In the beginning, it was quite hard to find a good book or tutorial that gave a clear idea of the language and its best practices. I hope this post can help someone who wants to get started.

As I began, it struck me that modern languages like Go, Ruby and JavaScript have parts that resemble Erlang: passing messages over channels for concurrency in Go, functions returning the result of the last evaluated expression in Ruby, and first-class functions in Go and JavaScript.

Erlang’s history revolves around the telecom industry. It is known for concurrency using light-weight processes, fault tolerance, hot loading of code in production environments, etc. The Open Telecom Platform (OTP) is another key aspect of Erlang; it provides the framework for distributed computing and all the other aspects mentioned above.

Some key points to keep in mind:

- Values are immutable

- The assignment operator (=) and functions work based on pattern matching

- There are no iterative statements like for, while or do..while; recursive functions serve the purpose

- Lists and tuples (and records, a hack using tuples) are very important data structures

- If and Case.. Of.. are the conditional blocks

- Guards are additional pattern matching clauses used with functions and Case.. Of..

- Every expression returns a value, and the value of the last expression in a function is automatically its result

- Functions are first class citizens

- Punctuation (‘; , .’ etc.) matters (one can relate this to the indentation requirements in Python)

Let’s get started with some code samples:

% Execute this from the Erlang shell. erl is the command

> N = 10.

10

%% The above statement matches N against 10: it binds N to 10 if N is unbound. If N is already bound to some other value, an exception is thrown.

%% Tuple

> Point = {5, 6}.

{5,6}

%% List

> L = [2, 5, 6, 7].

[2,5,6,7]

%% Extracting Head and Tail from List is key for List processing/transformations

> [H|T] = [2, 5, 6, 7].

[2,5,6,7]

> H.

2

> T.

[5,6,7]

%% List comprehensions

> Even = [E || E <- L, E rem 2 =:= 0].

[2,6]

Let’s take a look at a simple function:

helloworld () -> hello_world.

This simple function returns hello_world, which is an atom (a named constant). Let’s have a look at a recursive function:

%% Factorial of N

factorial (N) when N =:= 0 -> 1;

factorial (N) -> N * factorial(N-1).

%% Tail recursion

tail_factorial (N) -> tail_factorial(N, 1).

tail_factorial (0, Acc) -> Acc;

tail_factorial (N, Acc) -> tail_factorial (N-1, Acc * N).

The factorial functions demonstrate how Erlang does pattern matching on function parameters, the usage of a guard (the ‘when’ clause) and punctuation. We could also have written the first clause as ‘factorial(0) -> 1;’.

The second version, tail_factorial, demonstrates the optimized version that uses tail recursion to simulate the iterative method. In this method Erlang removes the previous stack frames using Last Call Optimization (LCO). It is important to understand both techniques, as recursion is used quite extensively.

Erlang has the following data types: atom, number, boolean (based on atom), string and binary. Strings are lists of character codes and atoms are never garbage collected, so it’s better to use the binary data type for larger strings.

Other built-in data structures are queues, ordsets, sets and gb_trees. The error, throw and exit exception classes, together with try .. of .. catch expressions, provide the exception handling capabilities.

The most interesting part of the language is spawning light-weight processes and passing messages between them:

-module('dolphin-server').

%% API

-export([dolphin_handler/0]).

dolphin_handler() ->

    receive

        do_a_flip ->

            io:format("How about no?~n");

        fish ->

            io:format("So long and thanks for the fish!~n");

        _ ->

            io:format("we're smarter than you humans~n")

    end,

    dolphin_handler().

%% From the shell, compile the dolphin-server module

> c('dolphin-server').

%% Spawns a new process

> Dolphin = spawn('dolphin-server', dolphin_handler, []).

<0.124.0> %% process id of the newly spawned process

%% Now start passing messages!!

> Dolphin ! fish.

So long and thanks for the fish!

> Dolphin ! "blah blah".

we're smarter than you humans

Open Telecom Platform (OTP)

- gen_server – for implementing server side piece in client/server

- supervisor – for implementing a supervisor in a supervisor tree. It takes care of the details regarding restarting of processes, fault-tolerance etc.

- gen_event – for implementing event handling functionality

- gen_statem – for implementing state machines

https://learnyousomeerlang.com/contents is one of the best online books for understanding how to use Erlang and the best practices for building a production-ready application. It’s good to take pauses while working through the book, as it’s quite exhaustive, but it is wonderfully written.

Debugging an Rc reference leak in Rust

The bug that caused two brown-paper-bag releases in librsvg (it was leaking all the SVG nodes) has been interesting.

Memory leaks in Rust? Isn't it supposed to prevent that?

Well, yeah, but the leaks were caused by the C side of things, and by

unsafe code in Rust, which does not prevent leaks.

The first part of the bug was easy: C code started calling a

function implemented in Rust, which returns a newly-acquired reference

to an SVG node. The old code simply got a pointer to the node,

without acquiring a reference. The new code was forgetting to

rsvg_node_unref(). No biggie.

The second part of the bug was trickier to find. The C code

was apparently calling all the functions to unref nodes as

appropriate, and even calling the rsvg_tree_free() function in the

end; this is the "free the whole SVG tree" function.

There are these types:

// We take a pointer to this and expose it as an opaque pointer to C

pub enum RsvgTree {}

// This is the real structure we care about

pub struct Tree {

// This is the Rc that was getting leaked

pub root: Rc<Node>,

...

}

Tree is the real struct that holds the root of the SVG tree and some

other data. Each node is an Rc<Node>; the root node was getting

leaked (... and all the children, recursively) because its reference

count never went down from 1.

RsvgTree is just an empty type. The code does an unsafe cast of

*const Tree as *const RsvgTree in order to expose a raw pointer to

the C code.

The rsvg_tree_free() function, callable from C, looked like this:

#[no_mangle]

pub extern "C" fn rsvg_tree_free(tree: *mut RsvgTree) {

if !tree.is_null() {

let _ = unsafe { Box::from_raw(tree) };

// ^ this returns a Box<RsvgTree> which is an empty type!

}

}

When we call Box::from_raw() on a *mut RsvgTree, it gives us back

a Box<RsvgTree>... which is a box of a zero-sized type. So, the program

frees zero memory when the box gets dropped.

The code was missing this cast:

let tree = unsafe { &mut *(tree as *mut Tree) };

// ^ this cast to the actual type inside the Box

let _ = unsafe { Box::from_raw(tree) };

So, tree as *mut Tree gives us a value which will cause

Box::from_raw() to return a Box<Tree>, which is what we intended.

Dropping the box will drop the Tree, reduce the last reference count

on the root node, and free all the nodes recursively.
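To see the difference outside of librsvg, here is a minimal, self-contained sketch. All the names in it (OpaqueTree, tree_new, tree_free_buggy, tree_free) are made up for illustration and are not librsvg's, and the opaque type is a zero-sized struct rather than an empty enum. A Weak reference stands in for a debugger here: it reports the root's strong count without keeping the node alive.

```rust
use std::rc::{Rc, Weak};

// Hypothetical opaque, zero-sized type that C code would see.
#[repr(C)]
pub struct OpaqueTree {
    _private: [u8; 0],
}

pub struct Node;

// The real structure behind the opaque pointer.
pub struct Tree {
    pub root: Rc<Node>,
}

// Move a Tree to the heap and hand out an opaque pointer to it.
pub fn tree_new(root: Rc<Node>) -> *mut OpaqueTree {
    Box::into_raw(Box::new(Tree { root })) as *mut OpaqueTree
}

// Buggy: rebuilds a Box of the zero-sized opaque type, so dropping it
// neither runs Tree's destructor nor frees any memory.
pub fn tree_free_buggy(tree: *mut OpaqueTree) {
    if !tree.is_null() {
        drop(unsafe { Box::from_raw(tree) });
    }
}

// Fixed: cast back to the real type before rebuilding the Box.
pub fn tree_free(tree: *mut OpaqueTree) {
    if !tree.is_null() {
        drop(unsafe { Box::from_raw(tree as *mut Tree) });
    }
}

fn main() {
    let root = Rc::new(Node);
    let watcher: Weak<Node> = Rc::downgrade(&root);
    let tree = tree_new(root);

    tree_free_buggy(tree);
    println!("after buggy free: strong = {}", watcher.strong_count());

    tree_free(tree);
    println!("after fixed free: strong = {}", watcher.strong_count());
}
```

The strong count only reaches zero after the version with the cast runs, which is the same symptom as the leaked root node.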

Monitoring an Rc<T>'s reference count in gdb

So, how does one set a gdb watchpoint on the reference count?

First I set a breakpoint on a function which I knew would get passed

the Rc<Node> I care about:

(gdb) b <rsvg_internals::structure::NodeSvg as rsvg_internals::node::NodeTrait>::set_atts

Breakpoint 3 at 0x7ffff71f3aaa: file rsvg_internals/src/structure.rs, line 131.

(gdb) c

Continuing.

Thread 1 "rsvg-convert" hit Breakpoint 3, <rsvg_internals::structure::NodeSvg as rsvg_internals::node::NodeTrait>::set_atts (self=0x646c60, node=0x64c890, pbag=0x64c820) at rsvg_internals/src/structure.rs:131

(gdb) p node

$5 = (alloc::rc::Rc<rsvg_internals::node::Node> *) 0x64c890

Okay, node is a reference to an Rc<Node>. What's inside?

(gdb) p *node

$6 = {ptr = {pointer = {__0 = 0x625800}}, phantom = {<No data fields>}}

Why, a pointer to the actual contents of the Rc. Look inside

again:

(gdb) p *node.ptr.pointer.__0

$9 = {strong = {value = {value = 3}}, weak = {value = {value = 1}}, ... and lots of extra crap ...

Aha! There are the strong and weak reference counts. So, set a

watchpoint on the strong reference count:

(gdb) set $ptr = &node.ptr.pointer.__0.strong.value.value

(gdb) watch *$ptr

Hardware watchpoint 4: *$ptr

Continue running the program until the reference count changes:

(gdb) continue

Thread 1 "rsvg-convert" hit Hardware watchpoint 4: *$ptr

Old value = 3

New value = 2

At this point I can print a stack trace and see if it makes sense, check that the refs/unrefs are matched, etc.

TL;DR: dig into the Rc<T> until you find the reference count, and

watch it. It's wrapped in several layers of Rust-y types; NonNull

pointers, an RcBox for the actual container of the refcount plus the

object it's wrapping, and Cells for the refcount values. Just dig

until you reach the refcount values and they are there.
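When attaching a debugger is inconvenient, the same counters can be read from Rust code with Rc::strong_count() and Rc::weak_count(). This is a small sketch with a placeholder Node type (not librsvg's) and a made-up helper, counts(). Note that weak_count() leaves out the implicit weak reference shared by all the strong ones, which is presumably why gdb shows weak = 1 for a node that has no Weak pointers.

```rust
use std::rc::Rc;

struct Node;

// Return the (strong, weak) counts for any Rc.
fn counts<T>(rc: &Rc<T>) -> (usize, usize) {
    (Rc::strong_count(rc), Rc::weak_count(rc))
}

fn main() {
    let root = Rc::new(Node);
    let child_ref = Rc::clone(&root);
    let other_ref = Rc::clone(&root);
    println!("{:?}", counts(&root)); // three strong references at this point

    drop(other_ref);
    drop(child_ref);
    println!("{:?}", counts(&root)); // only `root` itself is left
}
```

Printing the counts at the suspicious call sites is cruder than a watchpoint, but it needs no knowledge of Rc's internal layout.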

So, how did I find the missing cast?

Using that gdb recipe, I watched the reference count of the toplevel SVG node change until the program exited. When the program terminated, the reference count was 1 — it should have dropped to 0 if there was no memory leak.

The last place where the toplevel node loses a reference is in

rsvg_tree_free(). I ran the program again and checked if that

function was being called; it was being called correctly. So I knew

that the problem must lie in that function. After a little

head-scratching, I found the missing cast. Other functions of the

form rsvg_tree_whatever() had that cast, but rsvg_tree_free() was

missing it.

I think Rust now has better facilities to tag structs that are exposed

as raw pointers to extern code, to avoid this kind of perilous

casting. We'll see.

In the meantime, apologies for the buggy releases!

What ails GHashTable?

I promised a closer look at GHashTable and ways to improve it; here's that look and another batch of benchmarks to boot.

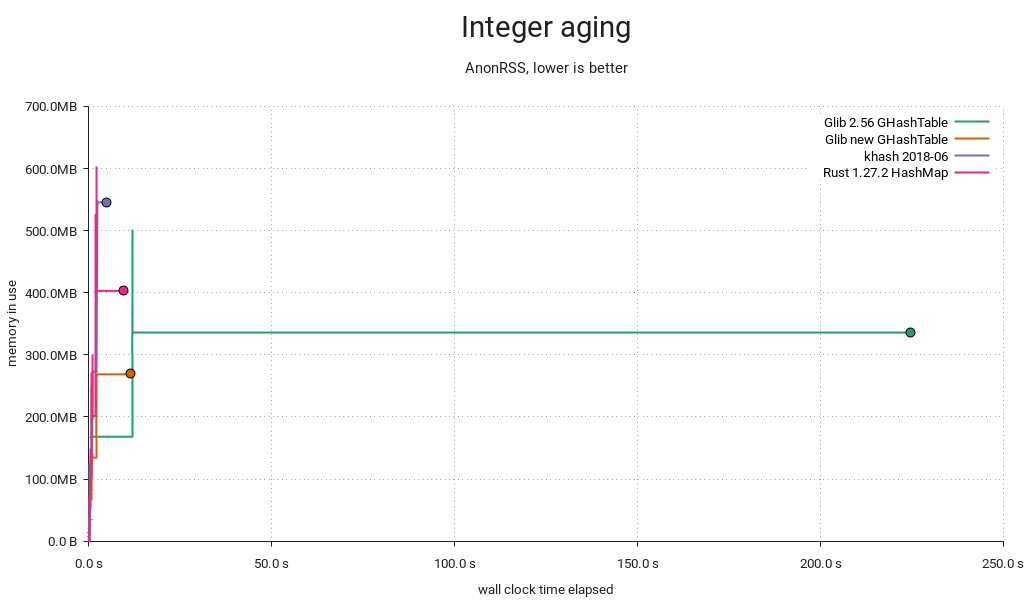

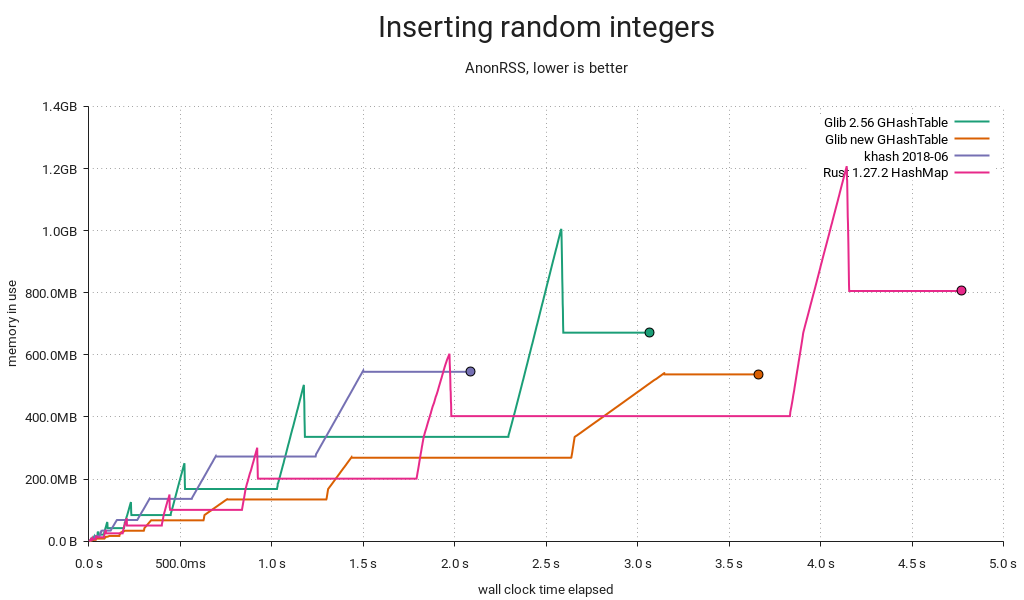

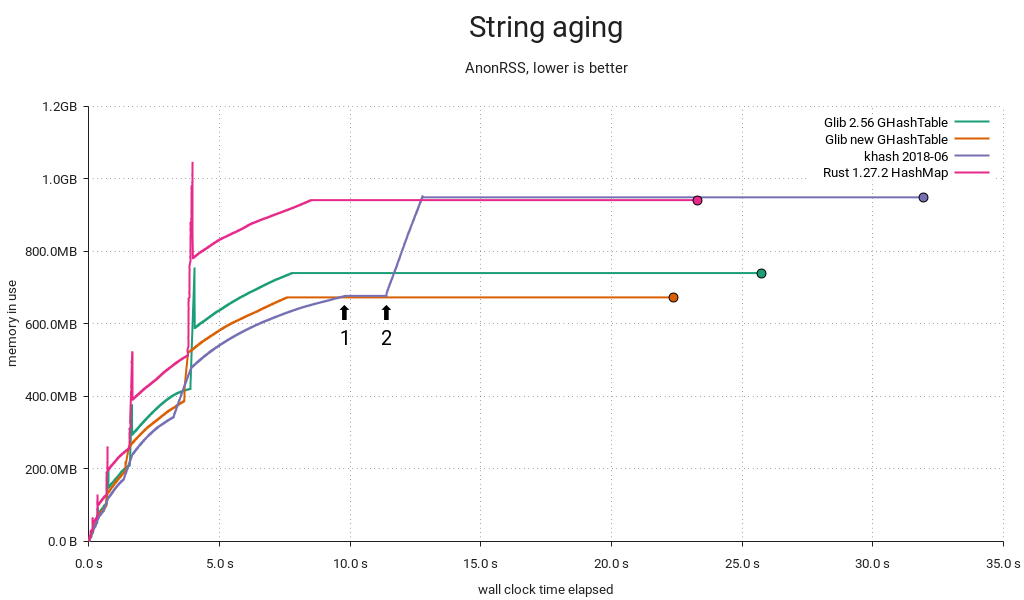

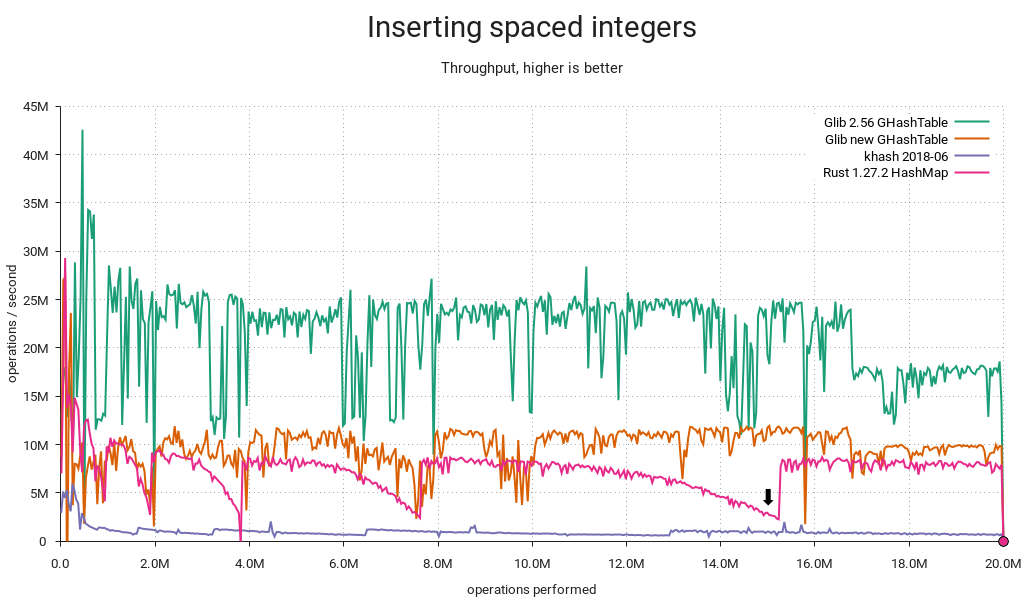

This time around I've dropped most of the other tables from the plots, keeping only khash and adding results from my GLib branch and Rust's HashMap, the latter thanks to a pull request from Josh Stone. These tables have closely comparable performance and therefore provide a good reference. Besides, every table tested previously is either generally slower or more memory-hungry (or both), and including them would compress the interesting parts of the plot.

I'll try to be brief this time¹. For more background, check out my previous post.

Bad distribution

First and foremost, the distribution was terrible with densely populated integer keyspaces. That's taken care of with a small prime multiplier post-hash.
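As a rough illustration of a post-hash multiplier: the sketch below assumes the multiplier is 11 and that the bucket index is taken modulo a prime. Both are assumptions for the example, not a copy of the GLib code, and hash_to_index() is a made-up name. With an identity hash, consecutive integer keys would otherwise land in consecutive buckets; the multiplier spreads them out while still giving each key its own bucket.

```rust
use std::collections::HashSet;

// Assumed multiplier; any small prime that does not divide the modulus works here.
const MULTIPLIER: u32 = 11;

// Map a raw hash to a bucket index, scrambling it with the prime first.
fn hash_to_index(hash: u32, modulus: u32) -> u32 {
    hash.wrapping_mul(MULTIPLIER) % modulus
}

fn main() {
    let modulus = 31; // a prime table modulus, chosen for the example

    // Bucket indices for the dense keys 0, 1, 2, ...
    let indices: Vec<u32> = (0..8).map(|key| hash_to_index(key, modulus)).collect();
    println!("{:?}", indices);

    // Every key in 0..modulus still gets its own bucket.
    let distinct: HashSet<u32> = (0..modulus).map(|k| hash_to_index(k, modulus)).collect();
    println!("{} distinct buckets", distinct.len());
}
```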

Peak memory waste

Previously, we'd resize by allocating new arrays and reinserting the entries, then freeing the old arrays. We now realloc() and rearrange the entries in place, lowering peak memory use by about a third. This can prevent going into swap or even crashing out on a memory-constrained system.

Overall memory waste

If you've got a sharp eye, you'll notice that overall memory consumption is lower now too. Whereas the old implementation always made space for 64-bit keys and values, the new one will allocate 32 bits when possible and switch to bigger entries on demand. In the above test, the keys are integers in the range [0 .. 2³²-1], reducing memory consumption by 20% overall. If values had been in [0 .. 2³²-1] too, the reduction would've amounted to 40%. A caveat though — negative integers (e.g. from GINT_TO_POINTER()) still require 64 bits due to two's complement/sign extension.
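The decision to switch to bigger entries boils down to a range check. Here is a minimal sketch, with pointer-sized values modelled as u64 and made-up function names; it is not the GLib implementation. It also shows why a sign-extended negative integer does not qualify for the 32-bit representation.

```rust
// True if a 64-bit pointer-sized value can be stored in a 32-bit slot.
fn fits_in_32_bits(value: u64) -> bool {
    value <= u64::from(u32::MAX)
}

// Model of stuffing a signed int into a 64-bit pointer: the sign is extended,
// so negative values end up with all the upper bits set.
fn int_to_pointer_bits(i: i32) -> u64 {
    i64::from(i) as u64
}

fn main() {
    println!("42 fits: {}", fits_in_32_bits(int_to_pointer_bits(42)));
    println!("-1 fits: {}", fits_in_32_bits(int_to_pointer_bits(-1)));
    // An address in the low 4 GiB also fits.
    println!("low address fits: {}", fits_in_32_bits(0x08fb_8000));
}
```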

Load factor mistargeting

When GHashTable is subjected to churn/aging, it will accumulate tombstones, and eventually the sum of entries and tombstones will eclipse the maximum load, resulting in a cleanup. Since a cleanup is just a reinsertion in place, it's handled similarly to a resize, and we take the opportunity to pick a better size when this happens. Unfortunately, the grow threshold was set at .5 if the table got filled up the rest of the way by tombstones, resulting in post-grow load factors as low as .25. That's equal to the shrink threshold, so with a little (bad) luck it'd shrink back immediately afterwards.

I changed the threshold to .75, so the load factor intervals (not counting tombstones) now look like this:

- <.25 → shrink immediately → .5

- [.25 .. .75] → no change

- [.75 .. .9375] → grow on cleanup → [.375 .. .46875]

- >.9375 → grow immediately → .46875

This seems like a more reasonable stable range with less opportunity for fluctuation and waste, and there's still lots of headroom for tombstones, so cleanups aren't too frequent.
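The intervals above can be written down as a small decision function. This is a sketch of the policy as described in the list, not GLib code: the names are invented, the load factor excludes tombstones, and a load of exactly .75 is assumed to fall in the grow-on-cleanup band.

```rust
#[derive(Debug, PartialEq)]
enum Action {
    ShrinkNow,
    NoChange,
    GrowOnCleanup,
    GrowNow,
}

// Classify a load factor (live entries / capacity, tombstones not counted).
fn classify(load: f64) -> Action {
    if load < 0.25 {
        Action::ShrinkNow
    } else if load < 0.75 {
        Action::NoChange
    } else if load <= 0.9375 {
        Action::GrowOnCleanup
    } else {
        Action::GrowNow
    }
}

// Doubling the table halves the load factor; halving the table doubles it.
fn load_after_grow(load: f64) -> f64 {
    load / 2.0
}

fn load_after_shrink(load: f64) -> f64 {
    load * 2.0
}

fn main() {
    for load in [0.2, 0.5, 0.8, 0.95] {
        println!("{:.4} -> {:?}", load, classify(load));
    }
    println!("grow from 0.9375 -> {}", load_after_grow(0.9375));
    println!("shrink from 0.2 -> {}", load_after_shrink(0.2));
}
```

Growing from anywhere in the [.75 .. .9375] band lands inside the no-change band, which is what keeps the table from bouncing between sizes.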

But it's slower now?

In some cases, yes — can't be helped. It's well worth it, though. And sometimes it's faster:

This particular run uses less memory than before, which is puzzling at first, since keys and values are both pointers. A look at the test's smaps reveals the cause:

01199000-08fb8000 rw-p 00000000 00:00 0 [heap]

The heap happened to be mapped in the lower 4GiB range, and GHashTable can now store 32-bit entries efficiently. That means pointers too.

I caught khash doing something interesting in this benchmark. Some time after the aging cycle has started (1), it initiates a tombstone cleanup. In this case it decides to grow the table simultaneously, starting at (2). This could be an example of the kind of load factor mistargeting I mentioned above — certainly it would have a very low load factor for the remainder of the test.

Robin Hood to the rescue?

Short answer: No. Long answer:

Rust uses Robin Hood probing, and the linear shifts required for insertion and deletion start to get expensive as the table fills up. It also came in last in a lookup-heavy load I ran. On the other hand it avoids tombstones, so there's no need for periodic cleanups, and deletions will make it progressively faster instead of slower. GHashTable's quadratic probing seems to hold a slight edge, albeit workload-dependent and, well, slight. In any case, I couldn't find a compelling reason to switch.

What about attack resistance?

The improved GHashTable is much more resistant to accidental misbehavior. However, it wouldn't be too hard to mount a deliberate attack resulting in critically poor performance². That's what makes Rust's HashMap so interesting; it gets its attack resistance from SipHash, and if these benchmarks are anything to go by, it still performs really well overall. It's only slightly slower and adds a reasonable 4 bytes of overhead per item relative to GHashTable, presumably because it's storing 64-bit SipHash hashes vs. GHashTable's 32-bit spit and glue.

I think we'd do well to adopt SipHash, but unfortunately GHashTable can't support keyed hash functions without either backwards-incompatible API changes or a hacky scheme where we detect applications using the stock g_str_hash() etc. hashers and silently replace them with calls to corresponding keyed functions. For new code we could have something like g_hash_table_new_keyed() accepting e.g. GKeyedHasher.
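For comparison, this is roughly what a keyed hasher looks like from the user's side in Rust's standard library, where each RandomState carries its own randomly chosen keys (the default algorithm is currently documented as SipHash 1-3, subject to change). The helper keyed_hash() is a made-up name; g_hash_table_new_keyed() and GKeyedHasher remain ideas, and nothing here models them.

```rust
use std::collections::hash_map::RandomState;
use std::hash::{BuildHasher, Hash, Hasher};

// Hash a string with the keys held by `state`.
fn keyed_hash(state: &RandomState, s: &str) -> u64 {
    let mut hasher = state.build_hasher();
    s.hash(&mut hasher);
    hasher.finish()
}

fn main() {
    let a = RandomState::new();
    let b = RandomState::new();

    // Same keys, same input: the hashes are identical.
    println!("{}", keyed_hash(&a, "hello") == keyed_hash(&a, "hello"));

    // Different keys: the hashes almost certainly differ, so colliding
    // inputs cannot be precomputed without knowing the keys.
    println!("{:x} vs {:x}", keyed_hash(&a, "hello"), keyed_hash(&b, "hello"));
}
```

The key lives in the state object rather than in the hash function, which is why a plain function pointer like g_str_hash() has nowhere to put it.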

A better option might be to add a brand new implementation and call it say, GHashMap — but we'd be duplicating functionality, and existing applications would need source code changes to see any benefit.

¹ Hey, at least I tried.

² If you can demo this on my GLib branch, I'll send you a beer dogecoin nice postcard as thanks. Bonus points if you do it with g_str_hash().

What Stable Kernel Should I Use

I get a lot of questions from people asking me which stable kernel they should be using for their product/device/laptop/server/etc. Especially given the now-extended length of time that some kernels are being supported by me and others, this isn’t always a very obvious thing to determine. So this post is an attempt to write down my opinions on the matter. Of course, you are free to use whatever kernel version you want, but here’s what I recommend.

Announcing repo-checker for all

Adapted from announcement to opensuse-factory mailing list:

Ever since the new repository checker (repo-checker, as you may know it) was deployed for Factory last year, there have been a variety of requests (like this one on opensuse-packaging) to utilize the tool locally. With the large amount of recent work done to handle arbitrary repository setups, instead of being tied to the staging workflow, this is now possible. This means devel projects, home projects, and openSUSE:Maintenance can also make use of the tool.

The tool is provided as an rpm package and is included in Leap and Tumbleweed, but to make use of this recent work version 20180821.fa39e68 or later is needed. Currently, that is only available from openSUSE:Tools, but will be in Tumbleweed shortly. See the openSUSE-release-tools README for installation instructions. The desired package in this case is openSUSE-release-tools-repo-checker.

A project has been prepared with an intentionally uninstallable package for demonstration. The following command can be used to review a project and print installation issues detected.

$ osrt-repo-checker --debug --dry project_only home:jberry:repo-checker

[D] no main-repo defined for home:jberry:repo-checker

[D] found chain to openSUSE:Factory/snapshot via openSUSE_Tumbleweed

[I] checking home:jberry:repo-checker/openSUSE_Tumbleweed@9a77541[2]

[I] mirroring home:jberry:repo-checker/openSUSE_Tumbleweed/x86_64

[I] mirroring openSUSE:Factory/snapshot/x86_64

[I] install check: start (ignore:False, whitelist:0, parse:False, no_filter:False)

[I] install check: failed

9a77541

## openSUSE_Tumbleweed/x86_64

### [install check & file conflicts](/package/view_file/home:jberry:repo-checker/00Meta/repo_checker.openSUSE_Tumbleweed)

<pre>

can't install uninstallable-monster-17-5.1.x86_64:

nothing provides uninstallable-monster-child needed by uninstallable-monster-17-5.1.x86_64

</pre>

Note that the tool automatically selected the openSUSE_Tumbleweed repository since it builds against openSUSE:Factory/snapshot. The tool will default to selecting the first repository chain that builds against the aforementioned or openSUSE:Factory/standard, but can be configured to use any repository.

All OSRT tools can be configured either locally or remotely via an OBS attribute with the local config taking priority. The local config is placed in the osc config file (either ~/.oscrc or ~/.config/osc/oscrc depending on your setup). Add a new section for the project in question (ex. [home:jberry:repo-checker]) and place the configuration in that section. The remote config is placed in the OSRT:Config attribute on the OBS project in question. For example, the demonstration project has configured the architecture whitelist. The format is the same for both locations.

To indicate the desired repository for review use the main-repo option. For example, one could set it as follows in the demonstration project.

main-repo = openSUSE_Leap_42.3

The benefit of the remote config is that it will apply to anyone using the tools instead of just your local run.

As mentioned above the list of architectures reviewed can also be controlled. For example, limiting to x86_64 and i586 can be done as follows.

repo_checker-arch-whitelist = x86_64 i586

There are several options available (see the code), but the only other one likely of interest is the no filter option (repo_checker-no-filter). The no filter option forces all problems to be included in the report instead of only those from the top layer in the repository stack. If one wanted to resolve all the problems in openSUSE:Factory a project with such fixes could be created and reviewed with the no filter option set to True in order to see what problems remain.

Do note that a local cache of rpm headers will be created in ~/.cache/opensuse-repo-checker which will take just over 2G for openSUSE:Factory/snapshot for x86_64 alone. You can delete the cache whenever, but be aware the disk space will be used.

Enjoy!
