openSUSE in 2022 | Continues to be Awesome

openSUSE Tumbleweed – Review of the week 2022/35

Dear Tumbleweed users and hackers,

We all knew the day would come: after 26 consecutive daily snapshots, 0830 was the one to break the streak. It turned out that libxml 2.10.x is not entirely ABI compatible with the 2.9.x we previously had in the tree (depending on the configure parameters used; IMHO, such symbols should carry a specific version for those options, allowing packages to require feature sets). Snapshot 0831 is a very large one: since we checked in glibc this week on top of this ABI break, we decided to let OBS determine everything that needs a rebuild (with glibc, that usually means everything). In total, we released 5 snapshots this week (0826, 0827, 0828, 0829, and 0831).

The most relevant changes in these snapshots were:

- Meson 0.63.1

- Shadow 4.12.3

- Grub2: revert to a version from 2 months ago without the various TPM patches: they turned out to cause a lot of trouble (and are being further reworked in the devel project)

- Mozilla Firefox 104.0

- Mozilla Thunderbird 102.2

- glibc 2.36

- Make: Add support for job server using named pipes

The staging projects are testing these upgrades and changes:

- Linux kernel 5.19.6

- util-linux 2.38.1: this also brings a massive package layout change, which will probably take some time to settle. It’s part of the distro bootstrap and we have to be careful to not blow it out of proportion

- Mozilla Thunderbird 102.2.1

- GCC 12.2

- fmt 9.0: Breaks ceph and zxing-cpp

- libxml 2.10.2: breaks xmlsec1

- gpgme 1.18: breaks libreoffice

- libxslt 1.1.36: breaks daps

Tumbleweed Continues Release Streak

Tumbleweed’s continuous daily release streak has reached an astounding 26 snapshots.

The streak of openSUSE’s rolling release continued this week and packages like glibc, ibus, Mozilla Firefox and sudo all received updates.

Will the streak continue beyond snapshot 20220829? Users should know soon.

Snapshot 20220829 provided package updates for AppArmor and libapparmor 3.0.7. The new versions fixed the setuptools version detection in buildpath.py. The Japanese man pages received some improvements with the man-pages-ja 20220815 update. The tree 2.0.3 update provided multiple fixes for .gitignore functionality and fixed a couple of segfaults.

The 20220828 snapshot had ten packages updated. Among the updated packages was ibus 1.5.27, which enabled an ibus restart in the GNOME desktop and disabled XKB engines in Plasma Wayland. The update of webkit2gtk3 2.36.7 fixed several crashes and rendering issues and addressed a Common Vulnerabilities and Exposures (CVE) entry related to Apple’s use of the package. The Python web framework and asynchronous networking library python-tornado6 6.2 enabled SSL certificate verification and hostname checks by default, and its Continuous Integration has moved from Travis and AppVeyor to GitHub Actions. Another package to update in the snapshot was font handler libXfont2 2.0.6. The new version fixed some spelling and wording issues. It also fixed comments to reflect the removal of legacy Operating System/2 support.

A new major version of the Mozilla Firefox browser arrived in snapshot 20220827. Firefox 104.0 addressed multiple CVEs, including an address bar spoofing issue that could disguise a URL; another fix addressed memory safety bugs that showed evidence of memory corruption and could possibly have been exploited to run arbitrary code. The update of the GNU C Library added major new features; glibc 2.36 added the process_madvise and process_mrelease functions. Support for the DT_RELR relocation format was added, as were fsopen and the other functions of the new Linux mount API, among many other features. VMware’s open-vm-tools 12.1.0 package, which enables several features to better manage seamless user interactions with guests, fixed a vulnerability that allowed for local privilege escalation; it also fixed the build of the ContainerInfo plugin for 32-bit Linux. A few RubyGems like rubygem-faraday-net_http 3.0.0, rubygem-parser 3.1.2.1 and rubygem-rubocop 1.35.1 were also updated in the snapshot.

A total of three packages were updated in snapshot 20220826. The simple PIN or passphrase entry dialog pinentry updated to 1.2.1; the package improved accessibility and fixed the handling of an error during initialization. The update also made sure an entered PIN is always cleared from memory. Its graphical user interface pinentry-gui was also updated to version 1.2.1. The shadow package, which converts UNIX password files to the shadow password format, updated to version 4.12.3. It fixed a 9-year-old CVE: CVE-2013-4235, a time-of-check time-of-use (TOCTOU) race condition when copying and removing directory trees. The package also updated and fixed some Spanish and French translations.

A minor update to sudo 1.9.11p3 arrived in snapshot 20220825. The update fixed a crash in the Python module with Python 3.9.10 on some systems. AppArmor integration was also made available for Linux, so a sudoers rule can now specify an APPARMOR_PROFILE option to run a command confined by the named AppArmor profile. The sudo package also fixed a regression introduced in 1.9.11p1 that caused a warning when logging to sudo_logsrvd if the command returned no output; that regression was never released in a Tumbleweed snapshot. The open-source disk encryption package cryptsetup was updated to 2.5.0. This new version removed the cryptsetup-reencrypt tool from the project and moved reencryption to the already existing cryptsetup reencrypt command. Other packages to update in the snapshot were gnome-bluetooth 42.3, device memory enabler ndctl 74, yast2-tune 4.5.1 and more.
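The sketch below prints a sudoers rule to show where the new AppArmor option goes. It assumes the sudoers(5) grammar of sudo 1.9.11, in which APPARMOR_PROFILE sits between the Runas specification and the command. The function name `apparmor_rule`, the user `alice` and the profile `demo-profile` are hypothetical placeholders.

```shell
#!/bin/sh
# Minimal sketch: print a sudoers rule that runs one command confined by a
# named AppArmor profile (sudo 1.9.11 or later, built with AppArmor support).
# The option is placed between the Runas part "(root)" and the command.
apparmor_rule() {
    user=$1
    profile=$2
    cmd=$3
    printf '%s ALL = (root) APPARMOR_PROFILE=%s %s\n' "$user" "$profile" "$cmd"
}

# Hypothetical placeholders: user "alice", profile "demo-profile".
apparmor_rule alice demo-profile /usr/bin/ls
```

Before relying on a rule like this, you would put it in a drop-in file under /etc/sudoers.d/ and check it with `visudo -cf FILE`. The named profile presumably has to exist in AppArmor for the confinement to take effect.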

Search API Endpoints, Fully Documented

Happy birthday, Linux! Here are 6 Linux origin stories

The 31st birthday of the Linux kernel was yesterday. On this occasion, some opensource.com contributors (including me) shared how we got started with Linux. Lots of nice memories :-)

The article is available at https://opensource.com/article/22/8/linux-birthday-origin-stories


openSUSE Tumbleweed – Review of the week 2022/34

Dear Tumbleweed users and hackers,

This week, Tumbleweed made the impossible possible: we have published 8 daily snapshots in just 7 days. Of course, the timing was a bit on our side: the snapshot that started building yesterday went so quickly through build and QA that it already managed to be published. In any case, the daily streak continued all along (now at 22 – a new all-time record).

The snapshots were numbered 0818 through 0825 and contained these changes:

- debuginfod: The package now recommends debuginfod-profile, which contains the configuration and URL to the server. Your gdb experience should be much smoother. If bandwidth is an issue when debugging, you can uninstall/block debuginfod-profile.

- timezone 2022c: We’re coming close to the dates when countries switch from summer to winter time, and as usual, some countries change their rules

- KDE Gear 22.08.0

- Linux kernel 5.19.2

- Systemd 251.4

- Xen 4.16.2

- Mesa 22.1.5 & 22.1.7

- Boost 1.80
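debuginfod clients such as gdb read the servers to query from the DEBUGINFOD_URLS environment variable, which holds a space-separated list of URLs. The sketch below assumes that debuginfod-profile works by setting that variable at login; we have not checked the package contents. It reports whether lookups are active in the current shell and lists the opt-outs mentioned above. The function name `debuginfod_status` is hypothetical. Inside gdb itself, `set debuginfod enabled off` (GDB 12 and later) is another way to skip downloads for one session.

```shell
#!/bin/sh
# Minimal sketch: report whether debuginfod lookups are configured for the
# current shell. Clients such as gdb read a space-separated server list from
# DEBUGINFOD_URLS; that debuginfod-profile sets it at login is an assumption.
debuginfod_status() {
    if [ -n "${DEBUGINFOD_URLS:-}" ]; then
        echo "enabled: $DEBUGINFOD_URLS"
    else
        echo "disabled"
    fi
}

debuginfod_status

# Opt out for this shell session only (e.g. on a metered connection):
#   unset DEBUGINFOD_URLS
# Remove the profile and lock it so the recommendation does not pull it
# in again:
#   sudo zypper remove debuginfod-profile
#   sudo zypper addlock debuginfod-profile
```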

In stagings, we are busy preparing these updates and changes:

- Meson 0.63.1 (0826+)

- Shadow 4.12.3 (0826+)

- Mozilla Firefox 104.0 & Thunderbird 102.2

- Glibc 2.36: all build issues have been resolved. Final sprint towards openQA results

- fmt 9.0: Breaks ceph and zxing-cpp

- Make: Add support for job server using named pipes. A few packages have been observed not to be parallel build compatible with this
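With a named-pipe (FIFO) jobserver, sub-makes and other tools learn about the shared job slots from a path in MAKEFLAGS rather than from a pair of inherited file descriptors. One plausible source of the incompatibilities mentioned above is tools that parse MAKEFLAGS themselves and only understand the older descriptor form. The sketch below classifies which form a parent make advertises. The spellings are taken from upstream GNU make: `--jobserver-auth=fifo:PATH` in 4.4, `--jobserver-auth=R,W` for pipes, and the older `--jobserver-fds=R,W`. It is an assumption that the patched make in staging uses identical syntax, and the function name `jobserver_style` is hypothetical.

```shell
#!/bin/sh
# Minimal sketch: report which jobserver style a parent make advertises.
# Spellings follow upstream GNU make ("fifo:" as in make 4.4); whether the
# Tumbleweed patch uses exactly the same syntax is an assumption.
jobserver_style() {
    for word in $1; do   # intentional word splitting of the MAKEFLAGS value
        case "$word" in
            --jobserver-auth=fifo:*)
                echo "fifo ${word#--jobserver-auth=fifo:}"; return 0 ;;
            --jobserver-auth=*)
                echo "pipe ${word#--jobserver-auth=}"; return 0 ;;
            --jobserver-fds=*)   # spelling used by older make releases
                echo "pipe ${word#--jobserver-fds=}"; return 0 ;;
        esac
    done
    echo "none"
}

# Example, from inside a Makefile recipe:
#   jobserver_style "$MAKEFLAGS"
```

A tool that splits the value on the comma, as the descriptor form suggests, would misread the fifo form. That is the kind of breakage a check like this makes visible.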

Mesa, Git, Gear, More Update in Tumbleweed

This was another full week of openSUSE Tumbleweed snapshots.

The rolling release continues fast-forwarding daily with new versions of software.

The most recent snapshot is 20220824, and it updated the Linux Bluetooth protocol stack bluez to 5.65; the package fixed a few Advanced Audio Distribution Profile issues and added experimental support for ISO sockets. The 4.16.2 version of the xen hypervisor dropped several patches that are now contained in the new tarball, including a CLFLUSH workaround for AMD x86. The new xen update also fixes a few Common Vulnerabilities and Exposures. There were a few updates related to Vulkan, like an update of shaderc 2022.2, which added support for 16-bit types in High-Level Shader Language. There were also updates to vulkan-loader and vulkan-tools 1.3.224.0. The OpenGL and OpenGL for Embedded Systems shader front end glslang 11.11.0 added OpSource support and avoids a double-free in functions cloned for Vulkan relaxed mode.

Mesa and its drivers package updated to version 22.1.7 in snapshot 20220823. The 3D graphics library’s new version had fixes and cleanups all over the tree; most of the fixes were for the Zink driver, which emits Vulkan Application Programming Interface calls. Several YaST packages were updated in the snapshot. There were some adjustments made in yast2-storage-ng 4.5.8 to adapt to new types of mounts in libstorage-ng, and yast2-network 4.5.5 added a class to generate the configuration needed for Fibre Channel over Ethernet. Other packages to update in the snapshot were transactional-update 4.0.1, autoyast2 4.5.3 and many other libraries.

Snapshot 20220822 updated a half dozen packages. An update of aws-cli 1.25.55 fixed a regression that made setting configuration values for a new profile problematic. The package also received several API changes from a jump of a few minor versions. The xfce4-panel 4.16.5 update fixed a critical warning when starting on a disconnected device and updated .gitignore. Xfce’s desktop manager xfdesktop 4.16.1 removed an unused function call and now allocates memory after error processing. Other packages to update were lttng-ust 2.13.3, python-matplotlib 3.5.3 and python-pbr 5.10.0.

Mesa had its first update of the week to version 22.1.5 in snapshot 20220821. Mesa dropped a warning for unhandled hwconfig keys. The SDL2 2.24.0 update added a number of functions relating to input devices such as keyboards and joysticks. It also added support for the NVIDIA Shield Controller. The other two packages to update were libevdev 1.13.0 and libtirpc 1.3.3, which fixed a Denial of Service vulnerability.

Snapshot 20220820 provided an update of ImageMagick 7.1.0.46; its changelog says it moved building the dependencies to a separate file and eliminated a compiler warning. The update of bind 9.18.6 fixed some crashes that occurred when a configured forwarder sent a broken response, and a fix was made to dnssec-policy. Linux Kernel 5.19.2 updated in the snapshot, and most of the changes were Advanced Linux Sound Architecture additions and fixes for KVM. The systemd 251.4 update dropped conflicting headers, and a patch was added to resolve conflicts with glibc 2.36. A few Python Package Index updates were also made available with the snapshot.

Of the three snapshots that began the week, 20220819, 20220818 and 20220817, a few of the many updated packages stood out. Among them was KDE Gear 22.08.0, which lets Dolphin sort files by file extension as well. KWrite gained another interesting feature: it now supports tabs and split views, so you can open several documents at the same time. GTK4 4.7.2 added audio support to the ffmpeg backend and fixed handling of touchpad hold events. Text editor vim 9.0.0224 addressed redraw flags that were not named specifically and fixed the splitting of a line that may duplicate virtual text. Multiple developer-visible bugs were fixed in the git 2.37.2 update, and the version control system improved git p4 non-ASCII support. Other packages to update were a 12.1.1 git version of gcc12, ncurses 6.3.20220813, rsync 3.2.5 and timezone 2022c, which reflects the fact that Iran will no longer observe Daylight Saving Time after 2022.

ALP Aims to Balance Past, Present with Future

The openSUSE Project has been discussing technical aspects for the Adaptable Linux Platform (ALP) on the development mailing list.

An email titled x86_64 architecture level requirements, x86-64-v2 for openSUSE Factory kicked off a discussion acknowledging the challenges posed by the instruction sets of the different levels of the x86-64 architecture. The four defined levels of the x86-64 architecture are x86-64-v1, x86-64-v2, x86-64-v3 and x86-64-v4. The newer micro-architecture levels beyond x86-64-v2 offer greater performance advantages and are present in much of the newer hardware on the market.

All these architectures exist within the code stream of openSUSE Factory, which are targeted for specific builds and distributions. For example, openSUSE Tumbleweed is a customized build blueprint of all the code functioning together that leads to a well tested release of a snapshot for the rolling release distribution. Another would be the super stable openSUSE Leap release, which is based on years of building toward a mature target that was designed to bring uniformity between Leap and SUSE Linux Enterprise.

Transitioning to the next long-term cycle of development, SUSE’s Adaptable Linux Platform and openSUSE’s ALP face an architectural divergence: the enterprise builds for future hardware, while the community builds for past, present and future uses.

SUSE’s aim with its Adaptable Linux Platform is to build a new immutable-base operating system for enhanced application-layer features and container orchestration on newer hardware. The prototype that is expected soon will have x86-64-v3 as a baseline.

A proposal within the email thread suggests moving Factory from x86-64-v1 to x86-64-v2, which appears to be the consensus among the more than 80 comments. Whatever is decided, ALP's transition to an x86-64-vX level will be based on the decision the community makes for Factory. However, that is not the whole story regarding x86-64-vX. openSUSE’s ALP builds must serve users of both newer and older machines. While SUSE’s ALP target is specific to v3, the community will not be letting users down and aims to support older hardware on the community side. The same immutable-base operating system is expected to be available on both Arm and RISC-V for new hardware as those architectures expand. The community version will use the same architectural availability that’s in the Factory code stream. Rebuilds of SUSE’s ALP may be necessary for openSUSE’s architectural needs. However, once the prototype is released, the release team plans to run tests and gather comparative data to understand the performance differences between v2 and v3. There is a desire to support a migration path.
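Each x86-64 level is a cumulative set of CPU features. Whether a given machine would still be supported after a move to v2 or v3 therefore comes down to which flags its CPU reports. The sketch below checks a flags string against the extra features each level adds over the previous one, using the flag names Linux prints in /proc/cpuinfo. The flag lists are our own transcription of the psABI level definitions, so treat them as an approximation: "xsave" stands in for OSXSAVE and "abm" for LZCNT. v1 is assumed for any 64-bit x86 CPU. The function names are hypothetical.

```shell
#!/bin/sh
# Minimal sketch: report the highest x86-64 micro-architecture level whose
# extra CPU flags are all present. Flag names use /proc/cpuinfo spelling
# ("pni" is SSE3, "abm" stands in for LZCNT, "xsave" approximates OSXSAVE).
# The lists are our transcription of the psABI levels, not an official tool.
V2_FLAGS="cx16 lahf_lm popcnt pni sse4_1 sse4_2 ssse3"
V3_FLAGS="avx avx2 bmi1 bmi2 f16c fma abm movbe xsave"
V4_FLAGS="avx512f avx512bw avx512cd avx512dq avx512vl"

# has_all AVAILABLE REQUIRED: succeeds only if every word of REQUIRED
# appears as a whole word in AVAILABLE.
has_all() {
    for flag in $2; do
        case " $1 " in
            *" $flag "*) ;;
            *) return 1 ;;
        esac
    done
    return 0
}

# x86_64_level FLAGS: prints x86-64-v1 .. x86-64-v4. v1 is assumed as the
# baseline for any 64-bit x86 CPU; each level requires the previous one.
x86_64_level() {
    level=1
    if has_all "$1" "$V2_FLAGS"; then
        level=2
        if has_all "$1" "$V3_FLAGS"; then
            level=3
            if has_all "$1" "$V4_FLAGS"; then
                level=4
            fi
        fi
    fi
    echo "x86-64-v$level"
}
```

To classify the running machine, use `x86_64_level "$(grep -m1 '^flags' /proc/cpuinfo | cut -d: -f2)"`. On glibc 2.33 and later, `/lib64/ld-linux-x86-64.so.2 --help` also lists the glibc-hwcaps levels and marks the ones the CPU supports, which makes a handy cross-check.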

7 sudo myths debunked

Whether attending conferences or reading blogs, I often hear several misconceptions about sudo. Most of these misconceptions focus on security, flexibility, and central management. In this article, I will debunk some of these myths.

Many misconceptions likely arise because users know only the basic functionality of sudo. By default, the sudoers file has only two rules: the root user and members of the administrative wheel group can do practically anything using sudo. There are barely any limits, and optional features are not enabled at all. Even this setup is better than sharing the root password, as you can usually follow who did what on your systems using the logs. However, learning some of the lesser-known old and new features gives you much more control over and visibility into your systems.

In my latest opensource.com article, I debunk seven of the sudo myths: https://opensource.com/article/22/8/debunk-sudo-myths

YaST Development Report - Chapter 7 of 2022

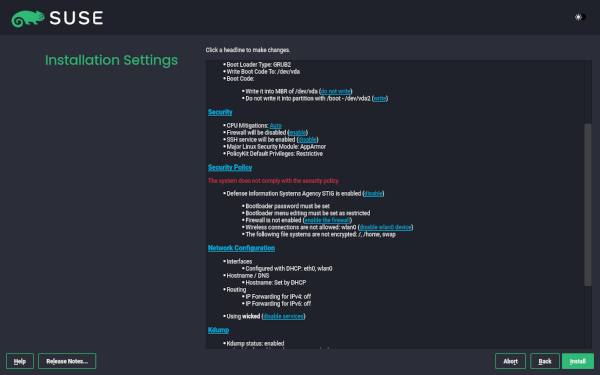

Lately in this blog we have mostly been covering developments in the area of ALP (Adaptable Linux Platform), although we never stopped improving how YaST works on our currently maintained systems. In this report we will start by taking a look at an installer feature we plan to release soon for openSUSE Tumbleweed and also as a maintenance update for the current versions of Leap and SUSE Linux Enterprise. Of course, that will not stop us from offering you a sneak peek at the mid-term future. The whole menu includes:

- Presenting the new mechanism to validate security policies during installation

- An announcement of the official YaST container images

- Some reports about the current state of both Cockpit and YaST on ALP

- An update on the development of Iguana and D-Installer

Security Policies in the Installer

We all know there is a series of good practices that must be observed when installing and administering any computer system in order to minimize security hazards. In some cases, those good practices are formalized into a so-called security policy that defines the guidelines that must be observed in order for a given system to be accepted in a secure environment. In that regard, DISA (the Defense Information Systems Agency) and SUSE have authored a STIG (Security Technical Implementation Guide) that describes how to harden a SUSE Linux Enterprise system.

The STIG is a long list of rules, each containing a description, how to detect problems and how to remediate them. There are even some tools to automate the detection and remediation of many of the problems in already installed systems. But some aspects are very hard to correct if they are not properly set during the installation of the operating system, such as the need to encrypt all the relevant filesystems or honoring certain restrictions on how the devices are formatted and the mount points are defined.

So we are actively working on adding the concept of security policies to both the interactive installation and AutoYaST. It is still a work in progress and we will offer a more detailed review of the feature when it’s ready to hit the repositories.

Official YaST Container Images

In other news, as you know, we have been working on making it possible to execute YaST as a container. So far, it was necessary to execute a script in order to use the containerized version of YaST, which was even available as a package on openSUSE Tumbleweed. But now, with the recent advances in ALP and its concept of so-called workloads, we have found a better way to distribute the YaST containers.

We now have three “official” containers for YaST, available in the repository SUSE:ALP:Workloads. Although the repository, as the name suggests, is supposed to provide containerized workloads to be executed on top of ALP, we have decided it will be the official source for containerized YaST no matter whether you execute it on top of ALP, the latest SLE, the latest Leap or openSUSE Tumbleweed. It should work the same in all cases.

So from now on, you can execute a containerized YaST anywhere by doing:

podman container runlabel run registry.opensuse.org/suse/alp/workloads/tumbleweed_containerfiles/suse/alp/workloads/yast-mgmt-ncurses:latest

Replace “ncurses” with “qt” or “web” to enjoy the alternative versions.

The route is a bit redundant and maybe it will change in the future, but that’s out of the control of the YaST Team. In any case, we will always use the official repository for ALP workloads.
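The three images differ only in their flavour suffix, so a tiny helper can build the full reference and reject typos before podman is involved. This is a minimal sketch: the function name `yast_image` is hypothetical, and the registry path is the one given above.

```shell
#!/bin/sh
# Minimal sketch: build the image reference for one of the three YaST
# container flavours (ncurses, qt, web) and refuse anything else.
REPO=registry.opensuse.org/suse/alp/workloads/tumbleweed_containerfiles/suse/alp/workloads

yast_image() {
    case "$1" in
        ncurses|qt|web) echo "$REPO/yast-mgmt-$1:latest" ;;
        *) echo "unknown YaST flavour: $1" >&2; return 1 ;;
    esac
}

# Example (requires podman):
#   podman container runlabel run "$(yast_image qt)"
```

If the route changes in the future, only the REPO line needs updating.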

For more details, see the full announcement in the archive of the yast-devel mailing list.

Additionally, we are also proud to remark that those container images include the YaST Kdump and YaST Bootloader modules. We recently adapted them to work containerized, which we consider an important milestone because it implies libstorage-ng is now able to analyze the running host system from within a container.

YaST on ALP

As you know, the main motivation to containerize YaST was making it available to ALP users. But ALP is a new concept in Linux distributions with an innovative approach to some areas of system management. So we didn’t expect YaST, containerized or not, to just work smoothly out of the box on top of ALP. Thus, we are continuously evaluating the situation in the early previews of ALP to detect the areas of YaST (or even ALP itself) that need fixing and to decide where to put the focus at any given moment.

The good news is that most things seem to work in the non-transactional variant of ALP. We only detected small problems that we are now tracking and will fix step by step as time permits.

But on transactional ALP systems, which will likely be the default version in most scenarios, we have a very visible problem with software installation. YaST does not use static RPM dependencies to enforce the installation of the tools it relies on beforehand. Instead, YaST installs any needed software on demand during its execution. That’s a problem in a transactional system, as defined by ALP or openSUSE MicroOS, because the running root filesystem is read-only and packages are installed into a new snapshot that only becomes active after a reboot. On the bright side, we checked that, once the software installation problem is worked around, most of the other functionality also works just fine in a transactional ALP system. The only exceptions are the previously mentioned YaST Kdump and YaST Bootloader. Although those two modules work fine containerized in any non-transactional system, we still need to find a way to make them work in situations in which /boot is not directly writeable.

In short, things look relatively promising for YaST on ALP, but we still need to do a lot of adjustments here and there. We will keep you informed of the progress.

What About Cockpit?

As already mentioned, ALP is an innovative system and that not only affects YaST. Cockpit, the default platform for 1:1 system administration on ALP, also struggles with some of the particularities of the first prototypes of ALP, especially in its transactional flavor.

Thus, we are also investing quite some time testing all the Cockpit functionality on ALP and documenting which parts need tweaking… or even rethinking Cockpit's or ALP's approach to some topics. As with YaST, we are already working on some aspects, and we will keep you informed as we solve each existing problem.

Moving Forward with Iguana and D-Installer

Speaking of ALP, as you know, we are using it as an opportunity to rethink how (open)SUSE systems may be installed in the future on real hardware with complex requirements in terms of storage technologies, network setup, etc. In that regard, we keep developing D-Installer and Iguana.

In the case of the former, the most recent news is about the internal architecture. You may remember from previous reports that we are trying to make D-Installer as modular as possible, with separate processes to handle software management, creation of users, internationalization, etc. To make that mechanism more powerful, we have now defined a D-Bus API so the different processes can interact with the user through a centralized user interface. See more details in the corresponding pull request.

In the case of Iguana, we are focusing on expanding its scope (e.g. making it useful in the context of Saltboot) and on documentation. The Iguana Orchestrator (iguana-workflow) now comes with some examples of possible uses, like running D-Installer as a set of two separate containers, one for the back-end and another for the web interface. If you want to run your own experiments, installing the iguana package from OBS will install a kernel and iguana-initrd, which can then be used for PXE boot or for direct kernel boot in virtual machines.

More to come

As you can see, we are actively working on many areas, so we hope to have all kinds of news for you in the next report. Meanwhile, we hope you got enough to keep you interested. Keep having a lot of fun!

Member Futureboy