API Design Matters

I was reading a very interesting article called "API Design Matters" with the subtitle "Bad application programming interfaces plague software engineering. How can we get things right?". Very cool stuff.

In OpenOffice.org we have an API "plague" too: The ODF import/export is based on the "UNO-API" and so is the OOXML import for Writer. And developers hate these APIs.

So the question is: why do developers hate the "UNO-API"? The obvious, but wrong, answer is: "I hate the UNO-API because of UNO". Don't get me wrong here: this is neither for nor against UNO. But the statement that "UNO is the cause of the ODF import/export and OOXML import problems" is wrong. It's not UNO per se; it's the design of the API.

[In case you're wondering what "UNO" is: UNO=COM ;-) So UNO is OpenOffice.org's take on COM.]

And just to be sure I do not offend the wrong people: the UNO-API was not designed to be used in the import/export filters. It was designed to be the API for "OpenOffice.org BASIC" developers, i.e. to provide an API similar to what VBA developers have in Microsoft Office. It was never designed to be used for import/export filters.

The problem was the decision to base the import/export code on such a high-level API! And we are still suffering from this decision today!

Anyway. How can we fix this?

a) We claim the current API is the best mankind can do and print T-Shirts with 1000 years of OOo experience.

b) We claim UNO and abstraction is the problem and use the internal legacy APIs, so that we never get a chance to refactor the internal legacy stuff since we're creating even more dependencies.

c) We come up with a better API.

Option a) was demonstrated at the OpenOffice.org conference in Beijing. [Does anybody have a picture of the T-Shirt?]

Option b) is the straightforward approach. E.g. in Writer the “.DOC”, “.RTF” and “.HTML” filters are based on the internal “Core” APIs. So let's use these APIs instead of the UNO-APIs.

What's wrong with this approach? The problem is that these internal APIs do not abstract from the underlying implementation at all. Repeat: the internal APIs do not abstract from the underlying implementation at all.

Does this answer the question why using the internal APIs is the wrong approach? Obviously *not* having an abstraction between your core implementation details and your import/exports filters is ... [offensive language detected ;-)].
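To make the abstraction argument concrete, here is a toy illustration in Python. It is not OOo code and not a proposal for the new API; every class and method name (`TextDocumentSink`, `CoreModelSink`, `import_plain_text`) is invented. The filter talks only to an abstract interface, so the core can change how it stores paragraphs without touching the filter.

```python
from abc import ABC, abstractmethod


class TextDocumentSink(ABC):
    """Hypothetical filter-facing API: says *what* to build, not how it is stored."""

    @abstractmethod
    def start_paragraph(self, style: str) -> None: ...

    @abstractmethod
    def add_text(self, text: str) -> None: ...

    @abstractmethod
    def end_paragraph(self) -> None: ...


class CoreModelSink(TextDocumentSink):
    """Stand-in for the core: the only place that knows the internal representation."""

    def __init__(self):
        self.paragraphs = []  # internal detail the filter never sees
        self._current = None

    def start_paragraph(self, style):
        self._current = {"style": style, "text": []}

    def add_text(self, text):
        self._current["text"].append(text)

    def end_paragraph(self):
        self.paragraphs.append((self._current["style"], "".join(self._current["text"])))
        self._current = None


def import_plain_text(source: str, sink: TextDocumentSink) -> None:
    """A toy import filter written only against the abstract API."""
    for line in source.splitlines():
        sink.start_paragraph("Standard")
        sink.add_text(line)
        sink.end_paragraph()


core = CoreModelSink()
import_plain_text("Hello\nWorld", core)
print(core.paragraphs)  # [('Standard', 'Hello'), ('Standard', 'World')]
```

If the core later switches to a different paragraph structure, only `CoreModelSink` changes; with filters written directly against the internal APIs, every filter would have to change as well.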

Option c) only has one problem: what should the API look like?

I have some ideas here, but before posting them: maybe there are some strong beliefs out there?

How to build MonoDevelop with Visual Studio

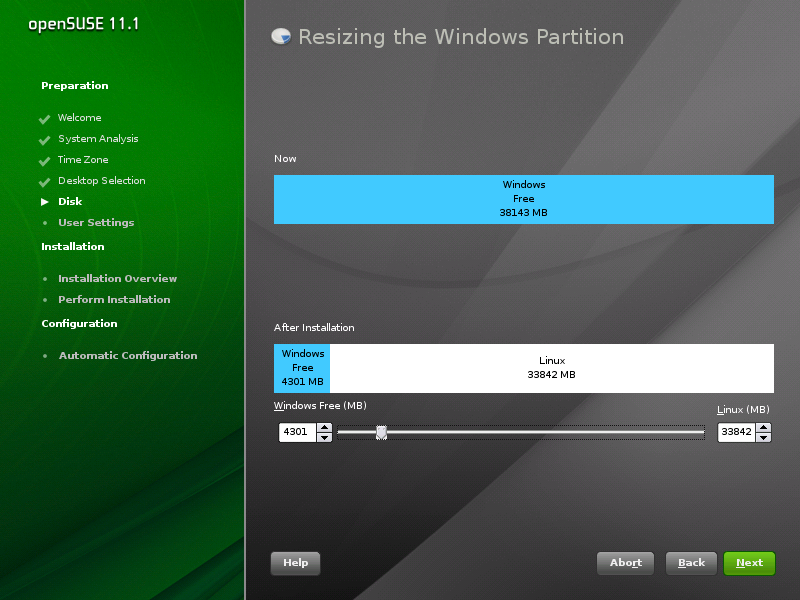

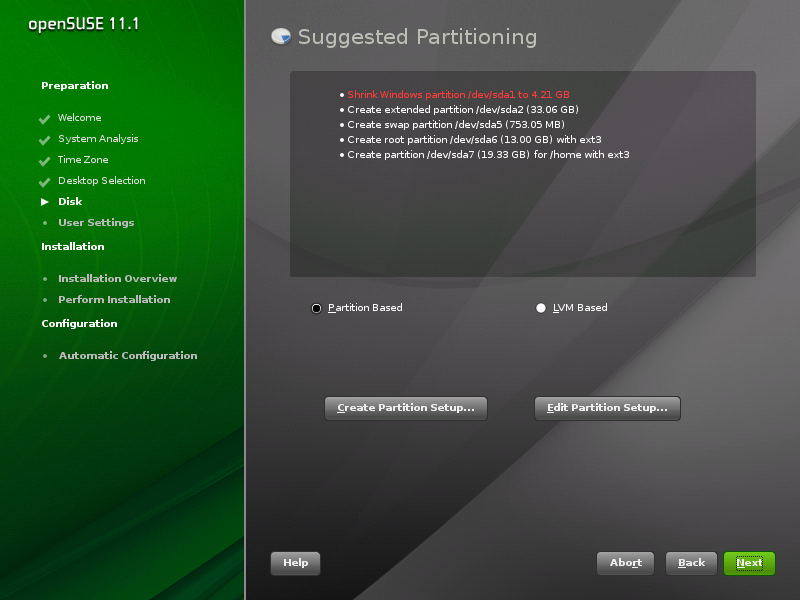

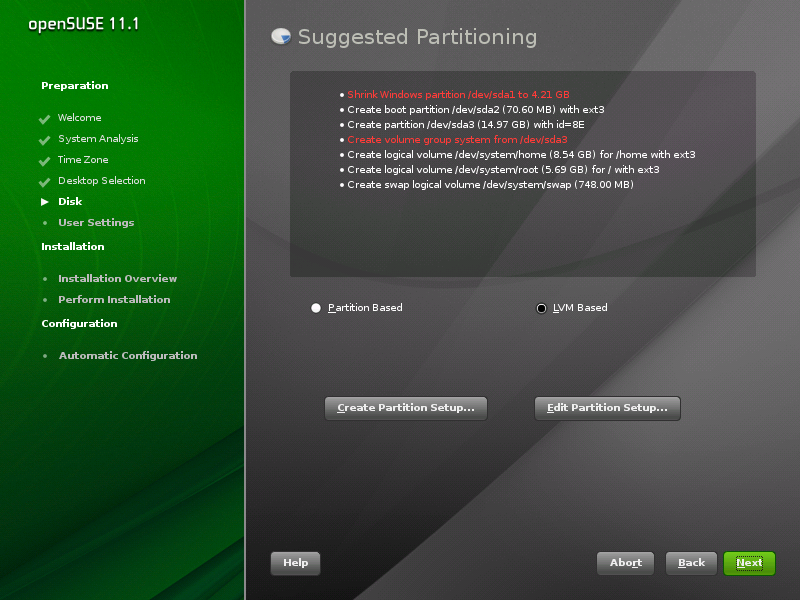

Installation: Resizing Windows before proposing Linux partitions

While “selling” openSUSE to a friend of mine, I tried to explain to him all the steps of the installation and all the configuration options I had changed. He was no geek, and it was his first time seeing Linux.

While most of the installation did not need much explanation, I definitely spent most of the time on partitioning. Not that the initial proposal was bad, unless one has special requirements, but there is one basic input that even newbies may want to set: how to split the disk between Windows and Linux. The installation proposal works just fine, but if someone needs to keep more or less space for Windows than proposed and does not have the skills, he is doomed, and so would my friend have been.

There is a graphical dialog for resizing the Windows partition, but sadly there is no way to resize Windows and then propose Linux partitions in the remaining disk space. That would help new users a lot: they could simply say “I want 30% of my disk for Windows and the rest for Linux”.
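The percentage-based split described above boils down to simple arithmetic. A minimal sketch, assuming a hypothetical helper `propose_split` (not YaST code) that takes the disk size, the desired Windows percentage, and the space Windows data already occupies, since the partition cannot be shrunk below that:

```python
from typing import Tuple


def propose_split(disk_gb: float, windows_percent: int,
                  windows_used_gb: float) -> Tuple[float, float]:
    """Hypothetical helper: return (windows_gb, linux_gb) for a percentage split.

    The Windows partition is never made smaller than the data it already
    holds, and never larger than the whole disk.
    """
    if not 0 <= windows_percent <= 100:
        raise ValueError("windows_percent must be between 0 and 100")
    wanted = disk_gb * windows_percent / 100
    windows_gb = min(max(wanted, windows_used_gb), disk_gb)
    linux_gb = disk_gb - windows_gb  # everything left over goes to Linux
    return windows_gb, linux_gb


# "I want 30% of my disk for Windows and the rest for Linux" on a 250 GB disk
# whose Windows installation currently uses 40 GB:
print(propose_split(250, 30, 40))  # (75.0, 175.0)
```

The remaining Linux share would then be handed to the normal partition proposal, which is the step the post describes.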

Inserting an additional dialog before the disk proposal was not as hard as I had feared, and, even more surprisingly, it also works in combination with LVM (see the images).

You can try it in 11.2 Milestone 2, where it is not enabled by default. To enable it, boot with start_shell=1 on the kernel command line and uncomment the following part of /control.xml:

```xml
<module>
    <label>Disk</label>
    <name>resize_dialog</name>
    <enable_back>yes</enable_back>
    <enable_next>yes</enable_next>
</module>
```

What do you think of it? Is it worth it? Any ideas for improvement?

On KDE4.3

We are almost one month away from the KDE 4.3 release. Yesterday 4.3 RC1 was tagged, and thanks to the excellent work of our colleagues in OBS we already have it.

Personally, I would have liked it to go a little further in terms of usability, but we will speak about that later. This release will mark the break with 3.5. I see no real reason not to use KDE 4.3. All the functionality from 3.5 that people were crying out for is finally up and running (often better) in KDE 4.3.

What more would you like then, people may ask.

I would like to be able to give a KDE 4 desktop to a secretary and to a teenager who is into Web 2.0.

- a feature-consistent Kontact, with a calendar that can actually be used in a real-life environment, avoiding silly design inconsistencies such as being able to spell check your popup notes but not your notebook entries.

- a Kopete that actually supports the audio/video features of the protocols.

- a rock-solid KBlogger would help.

- a way to synchronize mobile devices.

and finally:

- more work on making existing features work perfectly, rather than adding new ones.

Alin

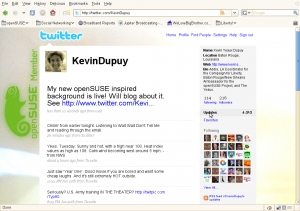

SUSE Out Your Tweets!

Thanks to Raul Libório from the openSUSE Marketing Team, you can trick out your Twitter background with your openSUSE Project affiliation (member, ambassador, user). Check out the openSUSE Social Network marketing page to get yours (look under “Sidebars for Twitter”).

You can add the logo by itself, or use a program like The GIMP or Inkscape to add it to your current background (much like mine, below).

How to NOT replace your HTPC

Finally, there is a neat BluRay player, the LG BD 370, that also plays the Matroska container, which is the de facto standard for fansubbed anime nowadays. And it is reasonably cheap (<200€).

So I bought one and stress-tested it this weekend. Long story short, I'm not yet sure whether I will return it to the store, because it doesn't exactly play everything, and yet I still somewhat like it.

Positive things first:

- Fast bootup - the system is ready in something like 10 seconds.

- Good BluRay performance - I don't have too many BluRays yet, but the ones I tested worked decently. It is the fastest BluRay player I have seen so far, but I still hate the BluRay engineers for choosing Java as the menu language.

- Does play and upconvert DVDs.

- Does do 1080p@24 (untested). No issues at all with the HDCP handshake, even when changing output devices and switching resolutions, with a cheap 15m HDMI cable.

- Very good user interface - I miss buttons for 10s instant forward/backward skipping, otherwise it's clean, neat, and easy to use.

- Never crashed on the approx. 50 discs I tested.

- Trivial and fast firmware update over ethernet.

- YouTube client - about the most useless feature IMHO.

- No decoding artifacts, unless it has warned you beforehand that they might occur.

- Very good and accurate format description.

- Able to switch between >2 audio streams and subtitles on the fly.

- Does play matroska, avi, mp4, mov, mpg containers without problems. I have a single avi that doesn't play, but it's probably broken.

- Does play most(!) subtitles, including embedded ass and external srt files.

- Does play ac3, dts, mp3, aac audio streams.

- Does play most(!) h264, some(!) divx, and all xvid video streams.

The last points already indicate the major issues:

- Has a hard limit of 720x576 for the DX50 fourcc. This is unbelievably ridiculous, as XVID works without limits, and both are actually the same format.

- h264 is only supported up to level 4.1, while many fansubs use level 5.0 or higher in order to use more reference frames. The player warns if the level is higher than 4.1, but if no more than 9 reference frames are used at 720p (the limit at level 4.1), it will play fine. Unfortunately, this isn't the case for half the fansubs I have.

- Newlines in subtitles are rendered as a literal '\n'. Luckily, lines that are too long are wrapped almost early enough (only a few characters go missing).

- Some subtitles aren't rendering, not sure why.

- Doesn't play m2p, ogm containers.

- Doesn't decode ogg vorbis.

- As with all commercial players I know so far: it doesn't allow fixing A/V synchronization. I have a DVD with audio lagging 500ms behind video (Ghost in the Shell, early pressing), and another where one audio stream is too early and another too late (Fucking Åmål). Fansubs sometimes have audio offsets of up to +/-300ms, dunno why.

- There is a region-free patch for DVD, but only a few reports on it can be found on the net. I don't know whether it works for all firmware versions, but somehow I doubt it.

- Switches between 50fps and 60fps all the time (the system menus run at 50fps only, probably an issue of the European model only). With the long HDMI switching times, this gets on your nerves.

- Doesn't use embedded fonts in ass subtitles.

- Doesn't decode flac.

- dts re-encoding would be a nice feature for aac audio, but it doesn't work at all (tested over S/PDIF only). Either I get two-channel pcm(!), or dts mono(!) without(!) sound.

- Doesn't detect VCD and SVCD.

- Does do 1080p@24 only for DVD and BluRay. I don't care too much: my projector doesn't accept 24fps, but it is very good at inverse telecine, so I don't notice.
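The reference-frame limit mentioned in the h264 point above follows from each level's maximum decoded picture buffer size. A small sketch of the arithmetic, using the MaxDpbMbs values from Table A-1 of the H.264 specification for a few levels; the function name is made up for illustration, and it ignores interlaced coding and cropping details:

```python
import math

# MaxDpbMbs (max decoded picture buffer size in macroblocks), H.264 Table A-1,
# for the levels relevant here.
MAX_DPB_MBS = {
    "4.0": 32768,
    "4.1": 32768,
    "4.2": 34816,
    "5.0": 110400,
    "5.1": 184320,
}


def max_ref_frames(width: int, height: int, level: str) -> int:
    """Upper bound on reference frames for a progressive stream at a given level."""
    frame_mbs = math.ceil(width / 16) * math.ceil(height / 16)  # 16x16 macroblocks
    return min(MAX_DPB_MBS[level] // frame_mbs, 16)  # the DPB never exceeds 16 frames


print(max_ref_frames(1280, 720, "4.1"))   # 9
print(max_ref_frames(1920, 1080, "4.1"))  # 4
print(max_ref_frames(1280, 720, "5.0"))   # 16
```

So at 720p, level 4.1 allows at most 9 reference frames, while level 5.0 already reaches the absolute maximum of 16, which is why encoders chasing more reference frames end up above what the player supports.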

I also noticed too late that the BD 370 not only lacks UPnP support, but also cannot play files via SMB, NFS, or any other network means, just YouTube. Sigh. But then, the BD 390 will be available in Europe shortly as well, and that should include UPnP. Because of this I haven't even started testing audio file playback.

So I have a lot of issues with quite a number of the discs I tested, and I can use it neither for local network playback nor for Apple movie trailers. Still, I'm unsure whether to return it or not, because the discs it plays, it plays almost perfectly, with a nice user experience. At least for now, I will have to keep my HTPC in addition to it.

Sigh.

New MonoDevelop installer for Windows

- Performance of the text editor greatly improved, thanks to a new text rendering logic cooked up by Mike Krüger.

- The debugger is now more reliable: it properly handles enum values, and it now has an 'immediate' console.

- The NUnit add-in now works.

- Version Control now has a new Create Patch command, thanks to Levi Bard.

- A new C# formatter, with support for per-project/solution formatting options.

- MD now logs debug and error output to AppData/MonoDevelop/log.txt, so if you get a crash or something similar, you may find some information there.

- Many other bug fixes.

open source xml editor in sight

Six years ago I was involved with an early predecessor of the openfate feature tracker. I had extended docbook sgml with a few feature tracking tags and it rendered nicely. We stored it in cvs and jointly hacked on the document. It never really got off the ground though, because there was no open source xml editor for Linux beyond emacs.

xml is great: it’s a simple, human- and machine-readable serialization. And xml sucks because of all these angle brackets. You need a tool to edit it.

Now yesterday I’m getting this mail:

Subject: ANN: Serna Free XML Editor Goes Open Source Soon! Help Us Build the Community!

From: Syntext Customer Service <XXXXX@syntext.com>

To: Susanne.Oberhauser@XXXXX

Date: 2009-06-17 17:11:26

Dear Susanne Oberhauser,

We are happy to tell you that our Serna Free XML Editor is going to be open-source software soon! Serna is a powerful and easy-to-use WYSIWYG XML editor based on open standards, which works on Windows, Linux, Mac OS X, and Sun Solaris/SPARC.

We love Serna and wish to share our passion with anyone who wants to make it better. Our mission is to make XML accessible to everyone, and we believe that open-source Serna could enable much more users and companies to adopt XML technology.

It goes on about spreading the news and supporting the transition from merely free of charge to open source.

I got this mail because I tried Serna five years ago, on my quest for a decent Linux xml editor. Back then it simply rendered xml to xsl-fo with xslt, and you then edited the document in that rendered view, as if it were a Word document. Serna came with docbook and a few toy examples like a simple time tracking sheet. Since then they’ve added python scripting, dita support, and an “xsl bricks” library to quickly create your own xslt transforms for your own document schemas. The tool gathers the data from different sources with xinclude or dita conref and stores the data back to them, while on the screen you just happily edit one single unified document view.
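How Serna implements this isn't described here, but the XInclude mechanism itself (one master document pulling in content from separate sources) can be tried with Python's standard library. A minimal sketch, assuming two hypothetical in-memory sources (`chapter1.xml`, `chapter2.xml`) instead of real files; `xml.etree.ElementInclude.include` replaces each `xi:include` element with whatever the loader returns:

```python
import xml.etree.ElementTree as ET
from xml.etree import ElementInclude

# Hypothetical in-memory "files" standing in for separate data sources.
SOURCES = {
    "chapter1.xml": "<chapter><title>Intro</title></chapter>",
    "chapter2.xml": "<chapter><title>Details</title></chapter>",
}

MASTER = """<book xmlns:xi="http://www.w3.org/2001/XInclude">
  <xi:include href="chapter1.xml"/>
  <xi:include href="chapter2.xml"/>
</book>"""


def loader(href, parse, encoding=None):
    """Resolve an include from the in-memory sources instead of the file system."""
    text = SOURCES[href]
    if parse == "xml":
        return ET.fromstring(text)
    return text  # parse="text" includes get the raw string


root = ET.fromstring(MASTER)
ElementInclude.include(root, loader=loader)  # expands xi:include in place
titles = [t.text for t in root.iter("title")]
print(titles)  # ['Intro', 'Details']
```

Writing edits back to the individual sources, as the post says Serna does, is a separate step that this sketch does not attempt.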

I only hesitated to build an infrastructure around it because it was proprietary. I hate vendor lock-in. And now they want to open source Serna!!

If this comes true, Serna rocks the boat. It’s as simple as that. With the python scripting, Serna is more than an xml editor: it actually is a very rich xml GUI application platform, with one definition for print and editing, and wysiwyg editing in the print ‘pre’view. I dare say this is nothing less than one of the coolest things that ever happened to the Linux desktop… Once Serna is open source, it will be so much simpler to create xml based applications. I guess I’m dead excited.

Serna, I wish you happy trails on your open source endeavour!!

S.

An Update

My other baby (my seven-year-old laptop, Lazarus) is losing its memory. One of the memory slots decided to give up the ghost, so it now only has half a gig of RAM. One gig was already on the low side of comfortable, so now it's extremely frustrating to use. At least I'm running Linux on it and not Windows, since I generally find Windows likes to have around double the amount of RAM that Linux likes. It'd be a good excuse to put Lazarus in the grave one final time and get a nice, fast, new computer. But I can't afford a new computer and a new baby. Well, I could, but I just got a new 10-22mm lens for my camera and I want a new Line 6 box for my guitar, so I might just have to struggle on. Oh well, I'm in Canada for the next five weeks, so I don't have to worry about it at the moment.

alin
