Stable network interface names

This approach is considered to provide more information than nailing eth? interface names to MAC addresses in 70-persistent-net.rules. However, it is not without drawbacks. For example, when I run the system under the VMware ESXi hypervisor, my only network interface is named eno16777728. Devices defined in the DTB on ARM-based single-board computers are not supported by the naming scheme and keep ordinary eth? names. And when USB devices such as mobile phones or modems are plugged in, the scheme generates rather unstable names, because the name includes the device's location on the USB bus, which may change the next time the device is plugged in. The interface then has to be configured again, because the previously saved settings refer to an interface with a different name.

Fortunately, udev fills in the ID_NET_NAME_MAC variable, which holds an interface name based solely on the MAC address. By default this variable is not used, but the default behavior can be changed for USB devices. One way is through udev configuration; the other is to use systemd.link, the file systemd uses to configure network interfaces.

Let's create the file /etc/systemd/network/90-usb.link with the following content:

[Match]
Path=*-usb-*

[Link]
NamePolicy=mac

The .link file consists of two sections: [Match], which describes the devices it applies to, and [Link], which describes what to do with them. In the example above, we ask systemd to use the MAC-address-based naming policy for all devices connected via USB. After running systemctl daemon-reload, you can plug in the device and see its interface named enx112233445566. Rather long, but unique (within known limits), and it will not change the next time the device is plugged in.
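To make the two halves of that config concrete, here is a small Go sketch. It assumes that Go's path.Match is a fair stand-in for the shell-style glob systemd applies to the ID_PATH property (it works here because ID_PATH values contain no '/'), and that the MAC-based name is "enx" plus the twelve lowercase hex digits of the address. The ID_PATH strings and the helper names matchesLinkPath and macBasedName are illustrative, not part of systemd.

```go
package main

import (
	"fmt"
	"net"
	"path"
)

// matchesLinkPath reports whether an ID_PATH value matches a Path= glob.
// Assumption: path.Match approximates systemd's shell-style glob here.
func matchesLinkPath(glob, idPath string) bool {
	ok, err := path.Match(glob, idPath)
	return err == nil && ok
}

// macBasedName builds a name in the style of ID_NET_NAME_MAC:
// the "enx" prefix followed by the MAC address as 12 hex digits.
func macBasedName(mac string) (string, error) {
	hw, err := net.ParseMAC(mac)
	if err != nil {
		return "", err
	}
	// Convert to []byte so %x hex-encodes the raw bytes instead of
	// formatting HardwareAddr.String() (which contains colons).
	return fmt.Sprintf("enx%x", []byte(hw)), nil
}

func main() {
	// Hypothetical ID_PATH values; real ones depend on the hardware.
	for _, p := range []string{
		"pci-0000:00:14.0-usb-0:2:1.0",
		"pci-0000:02:00.0",
	} {
		fmt.Println(p, matchesLinkPath("*-usb-*", p))
	}
	name, err := macBasedName("11:22:33:44:55:66")
	if err != nil {
		panic(err)
	}
	fmt.Println(name)
}
```

The resulting name is 15 characters long, which still fits the kernel's interface name limit, so the long form is not truncated.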

Besides changing the name, systemd.link lets you configure Wake-on-LAN (WOL) and the MTU, limit the interface speed, and assign the device a different MAC address.

Developing developers: From end-user to developer

Building a Community

Building a community can be challenging, but we've learned a few things from our experience in Greece. Here’s a quick guide based on what worked for us.

People Over Products

While a cool product is great (no matter which one), it’s the people who truly matter. It’s crucial to help potential volunteers understand why they should join a community. Is it because they want to support FLOSS to make the world a better place? Because it’s fun? Because they value the benefits of open source? Or because they simply enjoy helping others? Understanding the motivations behind joining or forming a community is key to sustaining it.

Growing Your Community: Attend Events

One of the most effective ways to grow your community is to attend events. The ideal scenario would be a developer visiting your booth and immediately deciding to join the team. Unfortunately, that’s highly unlikely—maybe a 1% chance (or even less). Most developers already have their favorite distribution or project and are not easily swayed.

Instead, target events where you can meet end users. These are usually computer science students who primarily use Windows or everyday users whose computers are mainly for social media or office tasks. Why focus on them? Because they can take on tasks that developers often avoid—tasks like:

- Junior Jobs List: Create a list of simple, well-defined tasks that newcomers can easily pick up.

- Bug Reporting: Encourage users to report bugs through platforms like Bugzilla, while developers respond politely and guide users on how to provide relevant information.

- Documentation: Developers often dislike writing documentation. End users can fill this gap by documenting how to use and troubleshoot the software.

- Translation: Encourage volunteers to translate software and documentation into their own languages, making content accessible and clear, especially when developers use technical language.

- Promotion and Engagement: Focus on community engagement rather than traditional marketing. Spread the word and build awareness because even the best project is useless if no one knows about it.

Building a Supportive Environment

People join communities not just because of the product but because of the people within the community. Once they’re part of it, they stay because they feel welcome and valued.

Managing volunteers can be tricky since there’s no hierarchy to enforce tasks. If someone takes credit for the community's efforts or doesn’t acknowledge the contributions of others, it can drive members away and ultimately dissolve the community.

Keeping the Community Alive

The reality is that you can’t keep a community together indefinitely. Even in the best-case scenario, people grow and evolve. Their available time becomes limited as personal life and careers take priority. The best way to sustain the community is to continually onboard new people before the core members inevitably move on.

From End User to Developer

Transitioning from an end user to a developer is the natural progression for community members who become deeply involved. It’s nearly impossible for an existing developer from, say, Fedora to suddenly switch to developing exclusively for openSUSE. Instead, communities should focus on nurturing new developers from within.

Start by engaging end users with simple tasks. As they grow more curious, they’ll gradually take on more challenging roles—like fixing bugs or learning to package software. Eventually, they become developers themselves. Even if they stop promoting the project, the community has gained a new developer—a net positive.

Final Thoughts

Developing developers is crucial for long-term sustainability. Help end users grow by providing guidance and opportunities to learn. In the end, a thriving community is one that continually supports its members in their journey from end user to developer.

Go's net/context and http.Handler

Update: Go 1.7 adds the context package to the standard library and uses it in the net/http *http.Request type. The background info here may still be helpful, but I wrote a follow-up post that revisits things for Go 1.7 and beyond.

The golang.org/x/net/context package (hereafter referred to as net/context, although it’s not yet in the standard library) is a wonderful tool for the Go programmer’s toolkit. The blog post that introduced it shows how useful it is when dealing with external services and the need to cancel requests, set deadlines, and send along request-scoped key/value data.

The request-scoped key/value data also makes it very appealing as a means of passing data around through middleware and handlers in Go web servers. Most Go web frameworks have their own concept of context, although none yet use net/context directly.

Questions about using net/context for this kind of server-side context keep popping up on the /r/golang subreddit and the Gopher’s Slack community.

Having recently ported a fairly large API surface from Martini to http.ServeMux and net/context, I hope this post can answer those questions.

About http.Handler

The basic unit in Go’s HTTP server is its http.Handler interface, which is defined as:

type Handler interface {
	ServeHTTP(ResponseWriter, *Request)
}

http.ResponseWriter is another simple interface, and http.Request is a struct that contains data corresponding to the HTTP request: things like the URL, headers, the body if any, etc.

Notably, there’s no way to pass anything like a context.Context here.

About context.Context

Much more detail about contexts can be found in the introductory blog post, but the main aspect I want to call attention to in this post is that contexts are derived from other contexts. Context values become arranged as a tree, and you only have access to values set on your context or one of its ancestor nodes.

For example, let’s take context.Background() as the root of the tree, and derive a new context by attaching the content of the X-Request-ID HTTP header.

type key int

const requestIDKey key = 0

func newContextWithRequestID(ctx context.Context, req *http.Request) context.Context {
	return context.WithValue(ctx, requestIDKey, req.Header.Get("X-Request-ID"))
}

func requestIDFromContext(ctx context.Context) string {
	// The comma-ok form returns "" instead of panicking when no ID was stored.
	id, _ := ctx.Value(requestIDKey).(string)
	return id
}

ctx := context.Background()
ctx = newContextWithRequestID(ctx, req)

This derived context is the one we would then pass to the next layer of the system. Perhaps that would create its own contexts with values, deadlines, or timeouts, or it could extract values we previously stored.

Approaches

So, without direct support for net/context in the standard library, we have to find another way to get a context.Context into our handlers.

There are three basic approaches:

- Use a global request-to-context mapping

- Create an http.ResponseWriter wrapper struct

- Create your own handler types

Let’s examine each.

Global request-to-context mapping

In this approach we create a global map of requests to contexts, and wrap our handlers in a middleware that handles the lifetime of the context associated with a request. This is the approach taken by Gorilla’s context package, although with its own context type rather than net/context.

Because every HTTP request is processed in its own goroutine and Go’s maps are not safe for concurrent access for performance reasons, it is crucial that we protect all map accesses with a sync.Mutex. This also introduces lock contention among concurrently processed requests. Depending on your application and workload, this could become a bottleneck.

This approach works well for Gorilla’s context, because its context value is simply a map of key/value pairs. Our context, however, is arranged like a tree, and the map must always hold a reference to the leaf. This places a burden on the programmer to manually update the pointer’s value as new contexts are derived.

An example usage might look like this:

import (
	"fmt"
	"net/http"
	"sync"

	"golang.org/x/net/context"
)

var cmap = map[*http.Request]*context.Context{}
var cmapLock sync.Mutex

// Note that we are returning a pointer to the context, not the
// context itself.
func contextFromRequest(req *http.Request) *context.Context {
	cmapLock.Lock()
	defer cmapLock.Unlock()
	return cmap[req]
}

// Necessary wrapper around all handlers. Must be the first middleware.
func contextHandler(ctx context.Context, h http.Handler) http.Handler {
	return http.HandlerFunc(func(rw http.ResponseWriter, req *http.Request) {
		ctx2 := ctx // make a copy of the root context reference
		cmapLock.Lock()
		cmap[req] = &ctx2
		cmapLock.Unlock()

		h.ServeHTTP(rw, req)

		cmapLock.Lock()
		delete(cmap, req)
		cmapLock.Unlock()
	})
}

func middleware(h http.Handler) http.Handler {
	return http.HandlerFunc(func(rw http.ResponseWriter, req *http.Request) {
		ctxp := contextFromRequest(req)
		*ctxp = newContextWithRequestID(*ctxp, req)
		h.ServeHTTP(rw, req)
	})
}

func handler(rw http.ResponseWriter, req *http.Request) {
	ctxp := contextFromRequest(req)
	reqID := requestIDFromContext(*ctxp)
	fmt.Fprintf(rw, "Hello request ID %s\n", reqID)
}

func main() {
	h := contextHandler(context.Background(), middleware(http.HandlerFunc(handler)))
	http.ListenAndServe(":8080", h)
}

Dereferencing the context pointer and updating it by hand is ugly, tedious and error-prone, which is why I don’t recommend this approach.

Note that we store a pointer to a context.Context here. It’s not necessary, but if you don’t use a pointer you must modify the underlying map any time you derive a new context. Doing so greatly increases the lock contention problem, because you must now lock around the map any time you update the context for a request.

http.ResponseWriter wrapper struct

In this approach we create a new struct type that embeds an existing http.ResponseWriter and attaches additional functionality to it. This approach is often used by Go web frameworks to do things like capturing the status code for the purpose of logging it later. Like the first approach, you’ll need to wrap handlers in a middleware that wraps the http.ResponseWriter and passes it into subsequent middleware and your handler.

import (
	"fmt"
	"net/http"

	"golang.org/x/net/context"
)

type contextResponseWriter struct {
	http.ResponseWriter
	ctx context.Context
}

func contextHandler(ctx context.Context, h http.Handler) http.Handler {
	return http.HandlerFunc(func(rw http.ResponseWriter, req *http.Request) {
		crw := &contextResponseWriter{rw, ctx}
		h.ServeHTTP(crw, req)
	})
}

func middleware(h http.Handler) http.Handler {
	return http.HandlerFunc(func(rw http.ResponseWriter, req *http.Request) {
		crw := rw.(*contextResponseWriter)
		crw.ctx = newContextWithRequestID(crw.ctx, req)
		h.ServeHTTP(rw, req)
	})
}

func handler(rw http.ResponseWriter, req *http.Request) {
	crw := rw.(*contextResponseWriter)
	reqID := requestIDFromContext(crw.ctx)
	fmt.Fprintf(rw, "Hello request ID %s\n", reqID)
}

func main() {
	h := contextHandler(context.Background(), middleware(http.HandlerFunc(handler)))
	http.ListenAndServe(":8080", h)
}

This approach just feels dirty to me. The context is associated with the request, not the response, so sticking it on http.ResponseWriter feels out of place. The ResponseWriter’s purpose is simply to give handlers a way to write data to the output socket.

Piggybacking on http.ResponseWriter requires a type assertion to your wrapper struct type before you can access the context. The details of this can be hidden away in a safe helper function, but that doesn’t hide the fact that the runtime assertion is necessary.

There is also another hidden downside. There is a concrete value (with a type internal to package net/http) underlying the http.ResponseWriter that is passed into your handler. That value also implements additional interfaces from the net/http package. If you simply wrap http.ResponseWriter, your wrapper will not implement these additional interfaces.

You must implement these interfaces with wrapper functions if you hope to match the base http.ResponseWriter’s functionality. In some cases, like http.Flusher, this is easy with a simple conditional type assertion:

func (crw *contextResponseWriter) Flush() {
	if f, ok := crw.ResponseWriter.(http.Flusher); ok {
		f.Flush()
	}
}

However, http.CloseNotifier is quite a bit harder. Its definition contains a method that returns a <-chan bool. That channel has certain semantics that existing code likely depends upon.[1] We have a couple of different options here, none of them good:

- Ignore the interface and don’t implement it, making the functionality unavailable even if the underlying http.ResponseWriter supports it.

- Implement the interface and forward to the underlying implementation. But what if the underlying http.ResponseWriter does not support this interface? We can’t guarantee the proper semantics of the API.

These are just two interfaces that the standard library implements today. This approach is not future-proof, because additional interfaces may be added to the standard library and implemented internally within net/http.

I don’t recommend this approach because of the interface issue, but if you’re ok with ignoring them, this is probably the simplest to implement.

Custom context handler types

In this approach, we eschew http.Handler for a new type of our own creation. This has obvious downsides: you cannot use existing de facto middleware or handlers without wrappers. Ultimately, though, I think this is the cleanest way to pass a context.Context around.

Let’s create a new ContextHandler type, following the model of http.Handler. We’ll also create an analog to http.HandlerFunc.

type ContextHandler interface {
	ServeHTTPContext(context.Context, http.ResponseWriter, *http.Request)
}

type ContextHandlerFunc func(context.Context, http.ResponseWriter, *http.Request)

func (h ContextHandlerFunc) ServeHTTPContext(ctx context.Context, rw http.ResponseWriter, req *http.Request) {
	h(ctx, rw, req)
}

Middleware can now derive new contexts from the one passed to the handler, and pass them onto the next middleware or handler in the chain.

func middleware(h ContextHandler) ContextHandler {
	return ContextHandlerFunc(func(ctx context.Context, rw http.ResponseWriter, req *http.Request) {
		ctx = newContextWithRequestID(ctx, req)
		h.ServeHTTPContext(ctx, rw, req)
	})
}

The final context handler has access to all of the request data set by middleware above it.

func handler(ctx context.Context, rw http.ResponseWriter, req *http.Request) {
	reqID := requestIDFromContext(ctx)
	fmt.Fprintf(rw, "Hello request ID %s\n", reqID)
}

The last trick is converting our ContextHandler into something that is http.Handler compatible, so we can use it anywhere standard handlers are used.

type ContextAdapter struct {
	ctx     context.Context
	handler ContextHandler
}

func (ca *ContextAdapter) ServeHTTP(rw http.ResponseWriter, req *http.Request) {
	ca.handler.ServeHTTPContext(ca.ctx, rw, req)
}

func main() {
	h := &ContextAdapter{
		ctx:     context.Background(),
		handler: middleware(ContextHandlerFunc(handler)),
	}
	http.ListenAndServe(":8080", h)
}

The ContextAdapter type also allows us to use existing http.Handler middleware, as long as they run before it does. Existing logging, panic recovery, and form validation middleware should all continue to work great with our context handlers plus our adapter.

This is my preferred method for integrating net/context with my server. I recently converted an approximately 30-route server from Martini to this method, and things are working great. The code is much cleaner, easier to follow, and performs better. This API service does both HTTP basic and OAuth authentication, passing along client and user information via contexts. It extracts request IDs that are passed across to other services via contexts. Context-aware middleware handles setting CORS headers, handling OPTIONS requests, recovering from panics by returning JSON-encoded errors, logging request and response info, and recording statsd metrics.

Give it a try and let me know on Twitter how it goes. Maybe it’ll become the foundation for the next Go web framework. 😀

[1] “CloseNotify returns a channel that receives a single value when the client connection has gone away”

Book - Do Androids Dream of Electric Sheep?

Deleting files while watching them in MPlayer

Well, this is a small desktop helper that is especially useful when you have to handle dozens or hundreds of videos in a batch.

Every so often I head out into the hills to collect the videos and images from the camera traps I have spread around. Depending on how much time passes between visits, how the cameras are configured, how lively the hills have been, etc., I usually bring back 200 or 300 videos of 10-30 seconds each on my phone. Then I watch them calmly on the computer, in case I missed something, and classify or discard the material. I usually discard about 70-80% of the recorded videos.

The "default" workflow would be something like: open the containing folder, play the videos in a batch, memorize a file name, go back to the folder, delete the file, continue with the playlist. Thanks to MPlayer we can make this as easy as: play a list of videos, delete the current video, move on to the next one.

MPlayer is a video/audio player whose configuration offers a slave mode that is tremendously useful. Slave mode lets other applications or scripts interact with MPlayer's ongoing playback. The player "listens" to an input file and executes the commands it receives just as it would from its own interface. This makes it possible, for example, to pause or mute a movie when an email arrives, or, as in our case, to delete the file currently playing just by pressing the DEL key.

Enabling slave mode

The first step is to enable slave mode in MPlayer. To do this, edit (or create, if it does not exist) the config file in MPlayer's local folder (~/.mplayer/config).

Add the following two lines:

slave=1
input=file=/home/tu-usuario/.mplayer/tuberia

Next, open a terminal and create the named pipe:

mkfifo /home/tu-usuario/.mplayer/tuberia

That is enough for MPlayer to keep "listening" to this file every time it starts. Now, while a movie is playing, we could pause it by typing in a terminal:

echo "pause" > /home/tu-usuario/.mplayer/tuberia

Binding the action to a key

Edit MPlayer's keyboard shortcut file (/home/tu-usuario/.mplayer/input.conf) and add the line:

DEL run /home/tu-usuario/.mplayer/borrarActual

Script to delete the file being played

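Now create the script that will run every time we press DEL while a video or audio file is playing in MPlayer: create a text file at /home/tu-usuario/.mplayer/borrarActual with the following content:

#!/bin/bash
# bash rather than sh: the $'\n' syntax below is not POSIX sh.
# Find the running mplayer; give up unless there is exactly one PID.
mplayerPID=$(pidof mplayer)
if [ "$(echo ${mplayerPID}|wc -w)" -ne 1 ] ; then exit 1; fi
# Split the lsof output on newlines only, so paths with spaces survive.
IFS=$'\n'
# List the files mplayer has open and keep the media files.
for archivo in $(lsof -p ${mplayerPID} -Fn | grep -i -E -w 'avi|mp4|mp3|mov|mpg|ogg|3gp' | sed 's/^n//g') ; do
  tuberia="/home/tu-usuario/.mplayer/tuberia"
  if test -w "${archivo}" ; then
    # Tell mplayer to skip to the next playlist entry.
    if [ -p "$tuberia" ]; then
      echo "pt_step 1" > "$tuberia"
    else
      mkfifo "$tuberia"
    fi
    # Move the file to the trash instead of deleting it outright.
    kioclient move "${archivo}" trash://
  fi
done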

Make the file executable with chmod +x /home/tu-usuario/.mplayer/borrarActual

The script looks up the PID of the running mplayer (and exits unless there is exactly one). With this PID it finds the path of the file being played. It then skips to the next item in the playlist and moves the file to the trash (better than rm, in case we make a mistake).

openSUSE Regular Release - The Future is Unwritten, let's write it

The recording of my presentation from openSUSE conference 2015 is live.

During the session I talk about the success of Tumbleweed and how it's the best choice for anyone who wants their Linux with the latest and greatest software.

I then go on to talk about our openSUSE Regular Release, where I propose using the recently released SUSE Linux Enterprise sources as an opportunity to build a stable Linux release that covers the needs of more conservative users as successfully as Tumbleweed covers the needs of those who want new versions of everything, regularly.

Please enjoy the video and join the discussion on the opensuse-project@opensuse.org mailing list.

Server built into libgreattao and tao-network-client

In this entry I describe the network server built into libgreattao and the client for this server (tao-network-client). I created both projects. Both tools can be downloaded from the SVN repository on SourceForge.

Introduction

Calling either of these tools a client or a server is misleading, because each of them can work in both server and client mode. The communication protocol is the same in both modes; the only difference between them is the way the connection is established. The exchanged messages are always the same, regardless of whether my tools are working as a client or as a server.

The second mode was introduced to avoid the unnecessary work of writing a separate proxy application. It also allows tao-network-client to work with many applications written with libgreattao (imagine one application launching another). As a result of adding the second working mode, tao-network-client has two proxy modes:

- Libgreattao server proxy mode: tao-network-client is itself a libgreattao application, so it can be run in proxy mode

- Server proxy mode: introduced by adding server mode to tao-network-client

The first proxy mode can transport messages from only one libgreattao server.

How it works

The entire protocol is designed to transfer messages associated with certain libgreattao calls and to transfer files. The server sends icons, window class files, or files created by the application. It also sends commands, such as "create a window of some class". The client sends requests to receive files and the status of window creation operations.

When the server is working in server mode, it forks for each connection. When the client is working in server mode, it runs one thread to accept connections and an additional thread for each connection.

Running the server for an application

To run an application written with libgreattao as a server, we need a certificate and a private key. If the private key is encrypted, we need to pass the decryption key as a parameter. Additionally, we can set a password that the client will have to provide when connecting. We must also give a port number to listen on.

our_application --tao-network-port 1026 --tao-network-certificate-path /home/I/my_certificate.pem --tao-network-priv-key-path /home/I/my_private_key.pem --tao-network-priv-key-password OUR_KEY_TO_DECRYPT_PRIVATE_KEY --tao-network-password OUR_CONNECTION_PASSWORD

The last two parameters are optional. The connection password can't be longer than 255 characters.

Running the client

To run the client, we can simply click or double-click it, for example. If no parameters are given, the client asks for the host name and port number. In that case, it then asks for the password if the server requires one. We can also run tao-network-client from the command line, giving all the needed arguments. Here is an example:

tao-network-client --host localhost --port 1026 --password OUR_CONNECTION_PASSWORD

Client as a proxy server for one application

You can run a proxy server using tao-network-client. We run the first type of proxy like this:

tao-network-client --host localhost --port 1026 --password OUR_CONNECTION_PASSWORD --tao-network-port 1027 --tao-network-certificate-path /home/I/our_certificate.pem --tao-network-priv-key-path /home/I/our_private_key.pem --tao-network-priv-key-password OUR_PRIVATE_KEY_PASSWORD --tao-network-password OUR_PASSWORD_FOR_PROXY_SERVER

What changed? We changed only the port number, because the proxy server can run on the same machine. We also assigned a different password to the proxy server, just because we felt like it. Of course, we can skip both passwords, because:

- First: we will be asked for it, as described before

- Second: tao-network-client is a libgreattao application, so the prompt for the server application's password will be shown remotely

Reversing roles

Imagine we would like to use many libgreattao applications with one client. In this situation, we need to reverse the roles of the libgreattao processes we want to use and of tao-network-client: servers become clients and clients become servers. We also need a way to establish a remote connection to these applications, so we start tao-network-client on the server in the second proxy mode and connect to it with tao-network-client from our computer. In the first proxy mode, the proxy was a server for another tao-network-client and a client for a single instance of a libgreattao application. In the second proxy mode, the proxy instance is a server for many libgreattao application instances and, being a libgreattao application itself, also a server for the remote tao-network-client. Because in this mode the proxy is still a libgreattao server, it forks on each tao-network-client connection.

We can achieve our goal like this:

tao-network-client --wait-on-port 1030 --path-to-certificate /home/I/our_certificate.pem --path-to-priv-key /home/I/our_private_key.pem --password-to-priv-key OUR_PRIVATE_KEY_PASSWORD --password PASSWORD_FOR_TAO_APPLICATION --tao-network-port 1027 --tao-network-certificate-path /home/I/our_certificate.pem --tao-network-priv-key-path /home/I/our_private_key.pem --tao-network-priv-key-password OUR_PASSWORD_FOR_PRIVATE_KEY --tao-network-password PASSWORD_FOR_TAO_NETWORK_CLIENT

The command above has one problem: because we set a port number, we cannot connect many tao-network-client instances. The solution is rather simple: don't pass the --wait-on-port parameter, and pass --command instead. Here is an example:

tao-network-client --path-to-certificate /home/I/our_certificate.pem --path-to-priv-key /home/I/our_private_key.pem --password-to-priv-key OUR_PRIVATE_KEY_PASSWORD --password PASSWORD_FOR_TAO_APPLICATION --tao-network-port 1027 --tao-network-certificate-path /home/I/our_certificate.pem --tao-network-priv-key-path /home/I/our_private_key.pem --tao-network-priv-key-password OUR_PASSWORD_FOR_PRIVATE_KEY --tao-network-password PASSWORD_FOR_TAO_NETWORK_CLIENT --tao-app-command-line --command our_tao_application

In this mode we don't pass a port number, so we can connect many clients; instead, we pass a command to run. The command will be run on the local machine, so this example is well suited to the second proxy mode.

Take a look at the --password argument. In the two examples above, it sets the password used to authenticate to the client, rather than to the server.

How to connect a libgreattao application

The example below demonstrates how to connect a libgreattao application to a waiting tao-network-client:

TAO_CONNECT_TO_CLIENT_ON_HOST=host_name TAO_CONNECT_TO_CLIENT_ON_PORT=1030 TAO_NETWORK_PASSWORD=PASSWORD_FOR_TAO_APPLICATION our_application

Why do we use environment variables? The reason is simple: an application can run another application, and this information should be passed on to it. When our certificate is not valid (for example, self-signed or expired), our application will drop the connection. To avoid this, we should pass an additional environment variable, as below:

TAO_NETWORK_FORCE_CONNECT=1 TAO_CONNECT_TO_CLIENT_ON_HOST=host_name TAO_CONNECT_TO_CLIENT_ON_PORT=1030 our_application

Passing arguments

You can pass parameters to a tao application by prefixing the parameter name with --network-option-. For example, to tell the proxy to connect to an application listening on port 1026 on host localhost, we can do this:

tao-network-client --network-option-host localhost --network-option-port 1026

Limits

We can limit the size of downloaded files. The option --max-file-len size_in_bytes limits the size of a single file, and --max-files-len size_in_bytes limits the total size of all files. When one of the limits is reached, tao-network-client asks whether to continue the connection.

Programming

- To develop a custom client, use the libgreattao/network.h header

- To support sending and receiving files selected in file dialogs, use the functions tao_open_file, tao_close_file and tao_release_file

Ever wanted to be a DJ with an open source touch?

There is plenty of DJ software available on the Internet. The most popular, I think, are Traktor and VirtualDJ. They are no-brainer choices, but they don't support Linux. I'm an old fart: I started DJing a long time ago with Technics vinyl players (and I still play my gigs with them). They have always worked great, but I thought I needed a new, geeky DJ system with digital vinyls, because many interesting releases don't come out on vinyl anymore and I don't like the CD format. To sum up, I wanted something open source that I could still attach my digital vinyls to (so it should work with Serato or Traktor vinyls).

After doing a little homework, I came across XWax, an open-source Digital Vinyl System (DVS) for Linux. It works great and, believe me, it's geeky. Still, it left me a little cold, because I like to use a DJ controller to load music. XWax doesn't support DJ controllers, or at least I couldn't get mine working. So, back to square one.

Then I came across Mixxx, and it looked very promising, but Mixxx version 1.10 left much to be desired. After a short while they released version 1.11, which was better, but I noticed that plenty of MP4 audio files didn't work (most of my music is encoded with Vorbis and wrapped in Ogg, which works great, but when you buy music, stores tend to favour MP4).

To make a long story short: I got involved with Mixxx, which has a very nice community, fixed the non-working FFmpeg plugin and fixed a handful of Linux-specific issues. After getting the FFmpeg plugin working, I noticed I had solved most of my digital DJ problems. Works with Linux... check. openSUSE... thank you for asking, yes. Open source... GPLv2. DJ controllers... a long list. Digital vinyls... Serato and Traktor. A nice, working, skinnable interface... check.

The only catch is that Mixxx uses PortAudio on Linux, and PortAudio doesn't play nice with PulseAudio. I wrote a patch for PortAudio, and it's currently at the "works for me" stage, which means late beta. If I ever get the time, I'll give it a facelift, commit it to GitHub, write a little documentation and try again to get it into the official PortAudio.

If you are an open-source DJ and would like to test the state-of-the-art version of Mixxx (which is light years ahead of the last one), I recommend trying Mixxx 1.12 beta. Keep in mind that it's beta software, so if you find a bug, please report it. But if you want to stick with the stable Mixxx 1.11, there is nothing wrong with that; it's also a very capable application.

On openSUSE you can install it with zypper from the Packman repositories.

Irssi window_switcher.pl

Switching windows in irssi can be highly annoying, especially when you use it from your smartphone. Yes, /win $number will work, but who can remember all those numbers once you go past 20 windows? That's where window_switcher.pl comes into play.

Setup

First, download the file and place it in ~/.irssi/scripts/autorun/.

Then switch back to irssi and set up window_switcher. We will bind Ctrl+O to window_switcher. The status bar item is placed at the front of the status bar, so we can still see it on small displays.

Introducing Portus: an authorization service and front-end for Docker registry

One of the perks of working at SUSE is hackweek, an entire week you can dedicate to working on whatever project you want. Last week the 12th edition of hackweek took place, and I decided to spend it solving one of the problems many users have when running an on-premises instance of a Docker registry.

The Docker registry works like a charm, but it's hard to have full control over the images you push to it. Also, there's no web interface that provides a quick overview of the registry's contents.

So Artem, Federica and I created the Portus project (BTW “portus” is the Latin name for harbour).

Portus as an authorization service

The first goal of Portus is to give users better control over the contents of their private registries. It makes it possible to write policies like:

- everybody can push and pull images to a certain namespace,

- everybody can pull images from a certain namespace but only certain users can push images to it,

- only certain users can pull and push to a certain namespace, making all the images inside of it invisible to unauthorized users.
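The three policies listed above boil down to two lists per namespace: who may pull and who may push. The following is a minimal Python sketch of that idea, assuming a simple in-memory model; it is not Portus's actual code, and `NamespacePolicy`, `EVERYONE` and the namespace and user names are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical sentinel meaning "any user".
EVERYONE = "*"


@dataclass
class NamespacePolicy:
    """Who may pull from and push to one namespace (illustrative model only)."""
    pullers: set[str] = field(default_factory=set)
    pushers: set[str] = field(default_factory=set)

    def allowed(self, user: str, action: str) -> bool:
        """Return True if `user` may perform `action` ("pull" or "push")."""
        members = {"pull": self.pullers, "push": self.pushers}.get(action, set())
        return EVERYONE in members or user in members


# One namespace per example policy (hypothetical names).
policies = {
    # everybody can push and pull
    "shared": NamespacePolicy(pullers={EVERYONE}, pushers={EVERYONE}),
    # everybody can pull, only alice can push
    "releases": NamespacePolicy(pullers={EVERYONE}, pushers={"alice"}),
    # only alice and bob can pull or push; invisible to everyone else
    "secret": NamespacePolicy(pullers={"alice", "bob"}, pushers={"alice", "bob"}),
}

if __name__ == "__main__":
    for ns in ("shared", "releases", "secret"):
        p = policies[ns]
        print(ns, p.allowed("mallory", "pull"), p.allowed("mallory", "push"))
```

For a user who appears in no list, such as `mallory`, the loop prints `True True`, `True False` and `False False`: the namespace is open, read-only, or hidden, respectively. Any action other than pull or push is denied by default.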

This is done by implementing the token-based authentication system supported by the latest version of the Docker registry.
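In that scheme, the registry answers an unauthenticated request with a 401 and a `WWW-Authenticate: Bearer` header that names the token service and a scope such as `repository:alice/app:pull,push`. The client asks the token service for a token, and the service replies with a signed JWT whose `access` claim lists the actions actually granted. Here is a minimal Python sketch of the authorization step on the token-service side, assuming a hard-coded permission table; `PERMISSIONS`, `parse_scope` and `access_claim` are hypothetical names, and JWT signing is left out.

```python
# Hypothetical permission table: (user, repository) -> allowed actions.
PERMISSIONS = {
    ("alice", "alice/app"): {"pull", "push"},
    ("bob", "alice/app"): {"pull"},
}


def parse_scope(scope: str) -> tuple[str, str, list[str]]:
    """Split "type:name:action1,action2" into its three parts.

    The name may itself contain ':' (for example a host:port prefix), so the
    type is taken up to the first ':' and the actions after the last one.
    """
    rtype, rest = scope.split(":", 1)
    name, actions = rest.rsplit(":", 1)
    return rtype, name, actions.split(",")


def access_claim(user: str, scope: str) -> list[dict]:
    """Build the "access" claim: requested actions intersected with granted ones."""
    rtype, name, requested = parse_scope(scope)
    if rtype != "repository":
        return []
    granted = PERMISSIONS.get((user, name), set())
    actions = [a for a in requested if a in granted]
    return [{"type": rtype, "name": name, "actions": actions}]


if __name__ == "__main__":
    print(access_claim("bob", "repository:alice/app:pull,push"))
    # [{'type': 'repository', 'name': 'alice/app', 'actions': ['pull']}]
```

Granting the intersection, rather than refusing the whole request, mirrors how `docker pull` asks for `pull,push` but only needs `pull`: the registry then checks the token's claim against the operation the client actually attempts.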

Docker login and Portus authentication in action

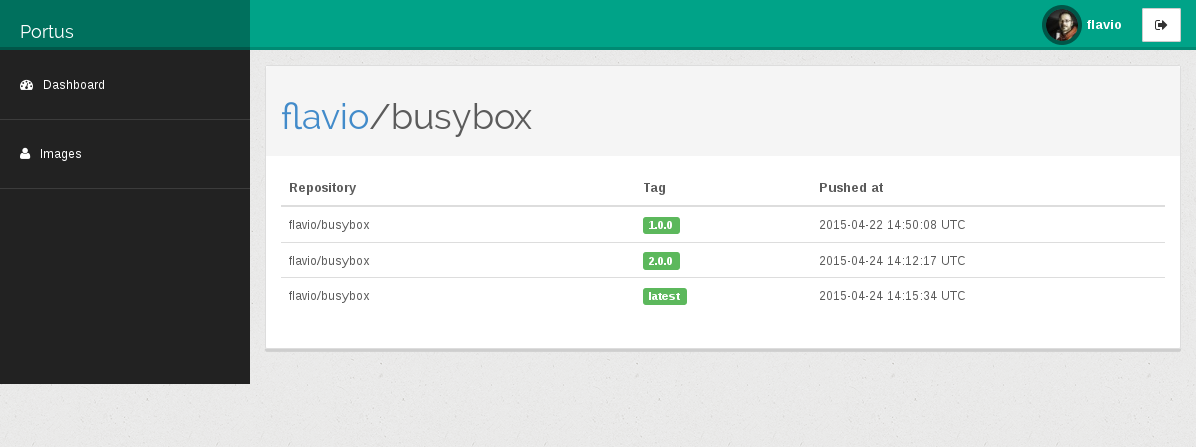

Portus as a front-end for Docker registry

Portus listens to the notifications sent by the Docker registry and uses them to populate its own database.
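The registry can be configured to POST notifications to an HTTP endpoint. Each request carries a JSON envelope with an `events` list, and each event has an `action` (such as push or pull) plus a `target` describing the repository, the media type and, in newer registry releases, the tag. The following minimal Python sketch, which is not Portus's code, turns such envelopes into a namespace/repository/tag catalog. It assumes that the first path component of a repository name is its namespace and that names without a `/` belong to the global namespace. `new_catalog`, `apply_notification` and `GLOBAL` are hypothetical names, and the HTTP endpoint and the database are left out.

```python
import json
from collections import defaultdict

# Hypothetical label for repositories pushed without a "namespace/" prefix.
GLOBAL = "(global)"


def new_catalog():
    """namespace -> repository -> set of tags, filled in as notifications arrive."""
    return defaultdict(lambda: defaultdict(set))


def apply_notification(body: str, catalog) -> None:
    """Record pushed images from one registry notification envelope (a JSON body)."""
    for event in json.loads(body).get("events", []):
        target = event.get("target", {})
        # Layer uploads also send "push" events; only manifest pushes describe an image.
        if event.get("action") != "push" or "manifest" not in target.get("mediaType", ""):
            continue
        repository = target["repository"]
        namespace, sep, repo = repository.partition("/")
        if not sep:  # e.g. "busybox": no namespace prefix
            namespace, repo = GLOBAL, repository
        tags = catalog[namespace][repo]  # creates the entry even if no tag is sent
        if target.get("tag"):
            tags.add(target["tag"])


if __name__ == "__main__":
    manifest = "application/vnd.docker.distribution.manifest.v2+json"
    body = json.dumps({"events": [
        {"action": "push", "target": {"mediaType": manifest, "repository": "alice/app", "tag": "latest"}},
        {"action": "pull", "target": {"mediaType": manifest, "repository": "alice/app", "tag": "latest"}},
    ]})
    catalog = new_catalog()
    apply_notification(body, catalog)
    print({ns: {r: sorted(t) for r, t in repos.items()} for ns, repos in catalog.items()})
    # {'alice': {'app': ['latest']}}
```

Only push events change what the registry stores, so the pull event in the sample is ignored. A real front-end would also want to react to deletions and to persist the catalog.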

Using this data, Portus lets you navigate through all the namespaces and repositories that have been pushed to the registry.

We also worked on a client library that can be used to fetch extra information from the registry (i.e. repositories’ manifests) to extend Portus’ knowledge.

The current status of development

Right now Portus only has the concept of users. When you sign up for Portus, a private namespace named after your username is created. You are the only one with push and pull rights over it; nobody else will be able to mess with it. Also, pushing to and pulling from the “global” namespace is currently not allowed.

The user interface is still a work in progress. Right now you can browse all the namespaces and repositories available on your registry. However, users' permissions are not yet taken into account while browsing.

If you want to play with Portus, you can use the development environment managed by Vagrant. In the near future we are going to publish a Portus appliance and, obviously, a Docker image.

Please keep in mind that Portus is just the result of one week of work. A lot of things are missing but the foundations are solid.

Portus can be found in this repository on GitHub. Contributions (not only code, but also proposals, bug reports,…) are welcome!
